EMERGING TECH

There is no shortage of studies linking social media use with depression, but Facebook Inc. believes that social media can also be used to promote mental health.

Today the company announced a few new features aimed at tackling suicide on the social network. “Facebook is in a unique position — through friendships on the site — to help connect a person in distress with people who can support them,” Facebook explained in a blog post. “It’s part of our ongoing effort to help build a safe community on and off Facebook.”

Facebook has allowed users to report posts with suicidal content since at least 2011. In February 2015, the social network greatly expanded its suicide prevention tools and began providing reported users with access to suicide hotlines and other support information. The site also made it easier for users to reach out to their at-risk friends to offer help and support.

Now, Facebook wants to beef up its suicide prevention features with artificial intelligence. The company revealed today that it will start using AI to spot users who could be at risk of harming themselves.

Facebook said it has been developing AI that uses pattern recognition to track a number of risk factors for suicide. If the AI spots a post with suicidal content, it will flag the post and make the reporting options more prominent for other users. Facebook’s Community Operations team will also review posts flagged by the AI and decide whether to offer suicide prevention tools to the person who posted them, even if no one has reported the posts.

Facebook Chief Executive Mark Zuckerberg addressed suicide directly in his recent update to Facebook’s mission statement, saying, “There have been terribly tragic events — like suicides, some live streamed — that perhaps could have been prevented if someone had realized what was happening and reported them sooner.”

Zuckerberg added, “Artificial intelligence can help provide a better approach.”

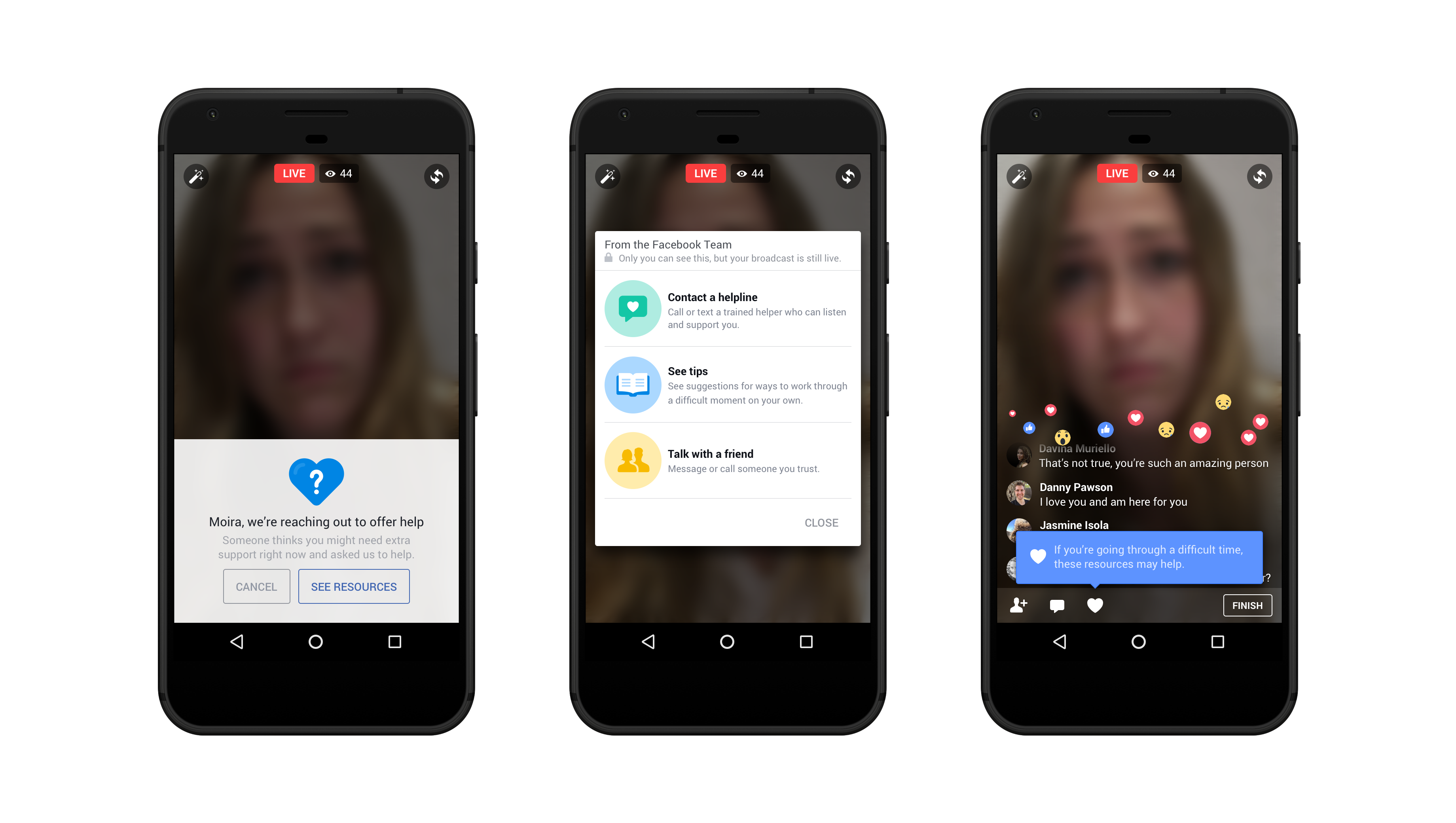

In addition to the new AI, Facebook is making it easier for users to communicate with its crisis support partners, which include organizations such as Crisis Text Line, the National Eating Disorder Association and the National Suicide Prevention Lifeline. Facebook said it is testing a new option that would allow users to contact these organizations through Facebook Messenger.

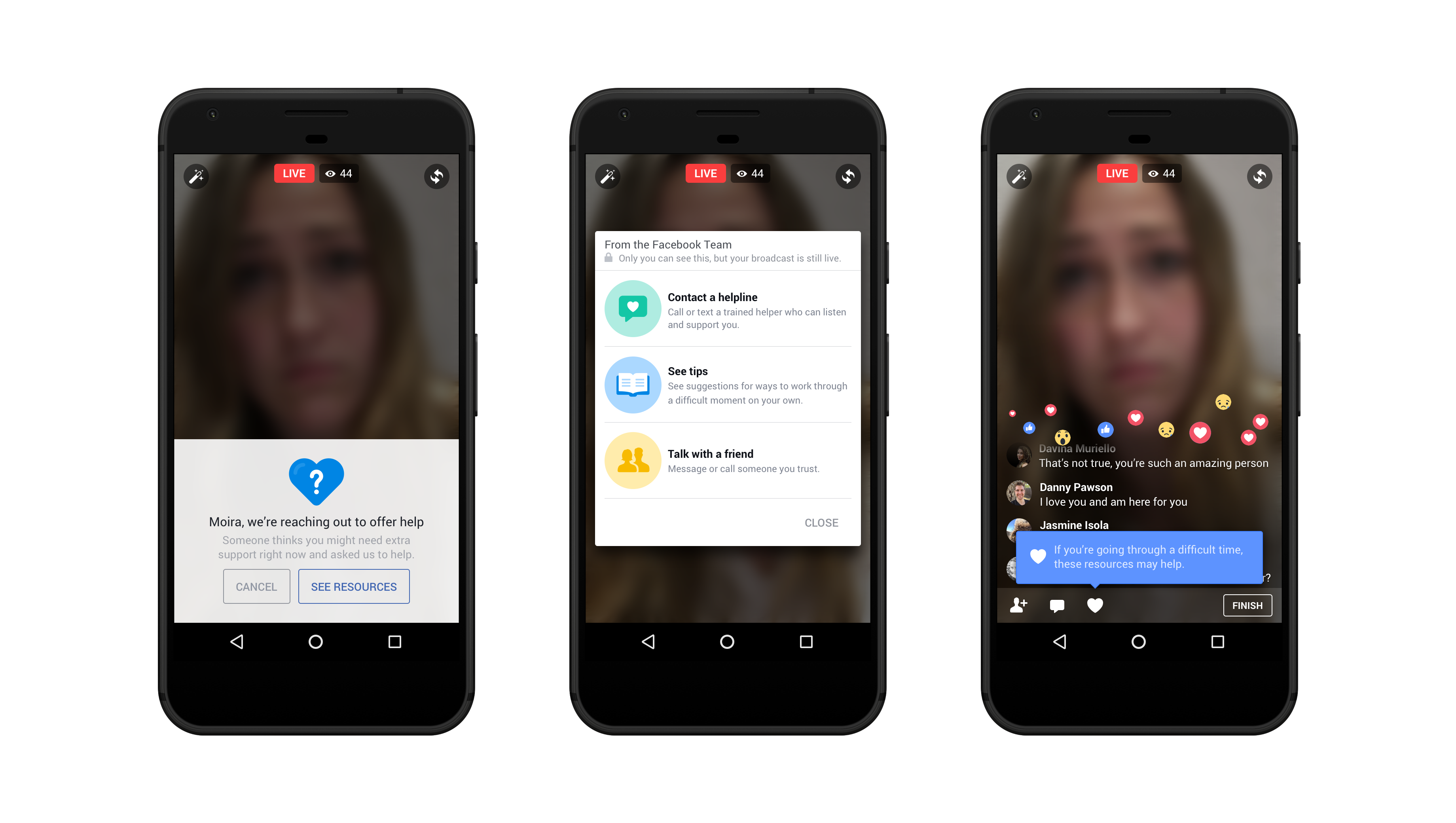

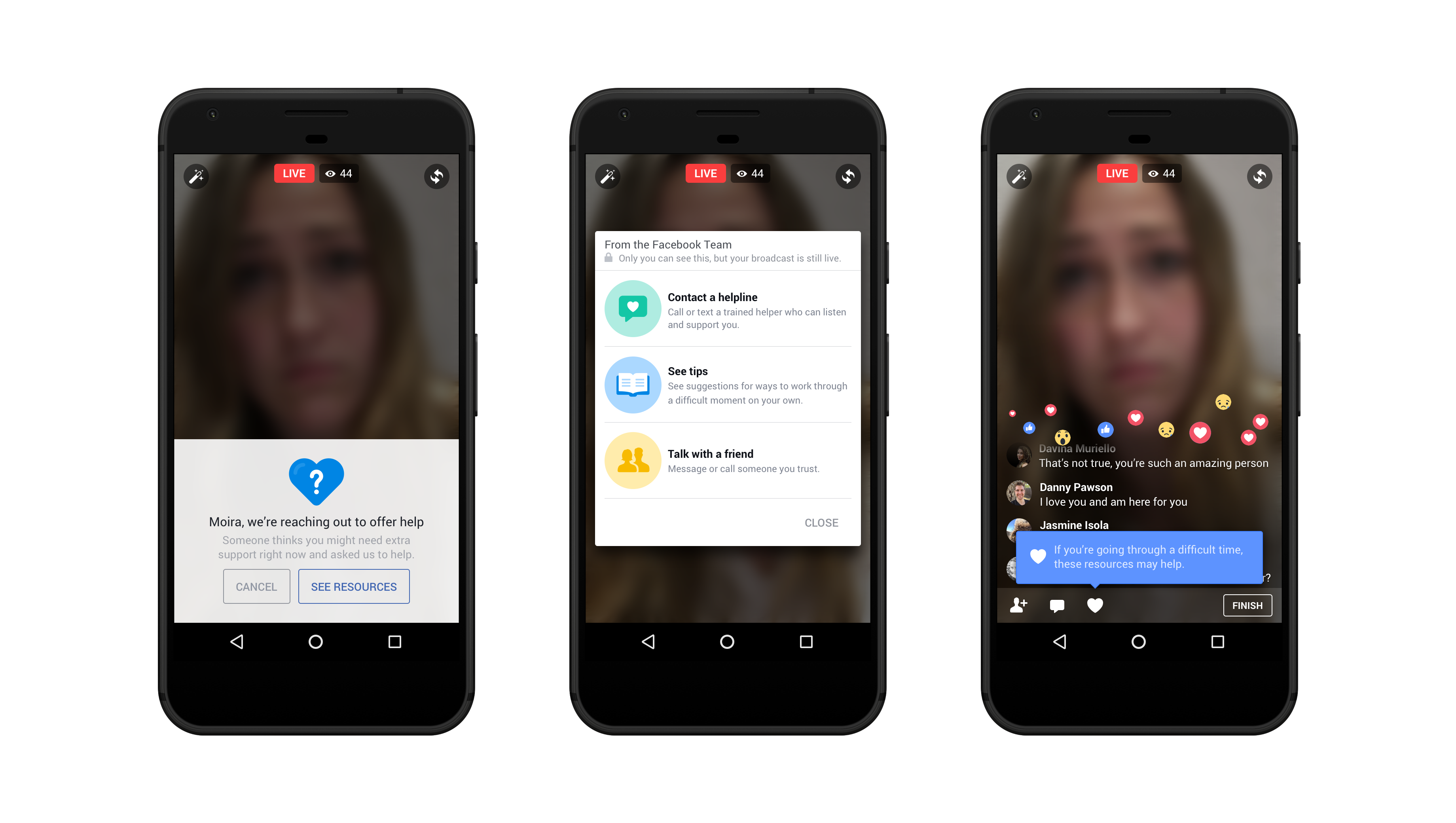

Facebook is also integrating some of its existing suicide prevention tools into Facebook Live. Users will now be able to report live streams for suicidal content just as they can regular posts, and they will be able to reach out to the broadcaster to offer support in real time.
