AI

AI

AI

AI

AI

AI

Nvidia Corp. is using artificial intelligence to help ensure people will always look their best during a video call, even if they’re still in bed, having just woken up with a hangover.

Vid2Vid Cameo is a new deep learning model that will soon be available within the Nvidia Maxine software development kit, a pretrained collection of AI models developers can use to create augmented reality effects for their video calling and livestreaming applications.

The way it works is this: The user simply selects a reference image, which could be a real photo of themselves looking sharp in business attire or spruced up for someone special, or it could even be a cartoon avatar. Then, when they join a video call, the Vid2Vid Cameo model will capture that person’s real-time motion during the interaction and apply it to that previously uploaded image, in effect bringing it to life.

Nvidia claims Vid2Vid Cameo is extremely accurate and realistic, working by mapping the person’s movements and facial expressions to the reference photo.

The technology has obvious benefits in helping video call participants look smart and professional and perhaps even sexy, if they want, but it goes further too. Because it also requires 10 times less bandwidth than regular video calls, it helps reduce jitter and lag.

“In addition, the underlying technology could also be used to assist the work of animators, photo editors and game developers,” said Nvidia researcher Ming-Yu Liu, who helped create the model.

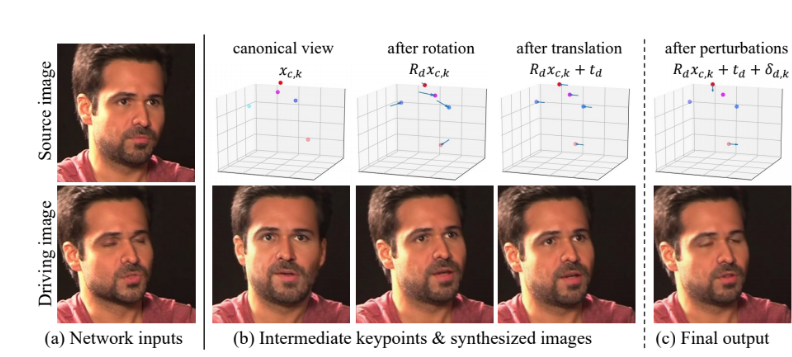

Vid2Vid Cameo was built using a self-learning, generative adversarial network that can synthesize images depicting the same object from multiple viewpoints, from just a single photograph. Aside from the reference photo, all that’s needed is the video stream that dictates how the image should be animated.

Nvidia explained in a research paper that the model works by identifying 20 key points that are necessary to model facial expression and head movement without any need for human annotations. Those points encode the location of features such as the eyes, mouth and nose.

“It then extracts these key points from a reference image of the caller, which could be sent to other video conference participants ahead of time or re-used from previous meetings,” Nvidia’s researchers explained. “This way, instead of sending bulky live video streams from one participant to the other, video conferencing platforms can simply send data on how the speaker’s key facial points are moving. On the receiver’s side, the GAN model uses this information to synthesize a video that mimics the appearance of the reference image.”

Nvidia said Vid2Vid Cameo can even be used to transfer the movements of one person onto the image of another. So, for fun, users could upload an image of Donald Trump, perhaps, or play tricks by uploading a photo of their mother when they see that she’s trying to call them. The possibilities are almost limitless.

Holger Mueller, an analyst with Constellation Research Inc., told SiliconANGLE that Nvidia is pushing the boundary between 2D and 3D visuals with the new Vid2Vid Cameo tool, creatively using GAN models to make video meetings both more interesting and easier to manage.

“This is a massive step forward not only for privacy, ease of use and productivity, but also for efficiency as it helps ease the strain on the network,” he said. “The decision to make it available through its SDK is a smart one too as it should ensure faster adoption. We could be looking at yet another innovation from Nvidia that changes the future of work.”

Nvidia said it will make Vid2Vid Cameo available in the Maxine SDK soon as the “AI Face Codec.” Other useful effects available in the Maxine SDK include AI models for intelligent noise removal, video upscaling and body pose estimation.

Support our mission to keep content open and free by engaging with theCUBE community. Join theCUBE’s Alumni Trust Network, where technology leaders connect, share intelligence and create opportunities.

Founded by tech visionaries John Furrier and Dave Vellante, SiliconANGLE Media has built a dynamic ecosystem of industry-leading digital media brands that reach 15+ million elite tech professionals. Our new proprietary theCUBE AI Video Cloud is breaking ground in audience interaction, leveraging theCUBEai.com neural network to help technology companies make data-driven decisions and stay at the forefront of industry conversations.