AI

Every keynote from Nvidia Corp. Chief Executive Jensen Huang is a marathon. It’s a 2.5-hour firehose of product drops and partnerships designed to test the limits of even the most hardcore silicon fanboys. It’s high-octane, high-bandwidth and, frankly, a lot to process.

But if you blinked around the two-hour mark, you missed one of the key stories.

Huang spent about two minutes announcing the Nvidia Agent Toolkit. As we wrote last week, it was part of a “set of open-source tools designed to enhance the capabilities of artificial intelligence agents.”

However, theCUBE Research partner Raphaëlle d’Ornano at Decoding Discontinuity believes this seemingly minor announcement deserves much more attention than it has received. So this week, she published her latest deep-dive analysis examining what she thinks Agent Toolkit reveals about Nvidia’s larger ambitions and strategy.

This isn’t a new strategy; it’s a classic Nvidia power move. In 2006, its CUDA software began turning graphics processing units from “gaming toys” into the computational backbone of modern AI. It was a long-tail bet that took 10 years to pay off, and it created a moat that competitors are still trying to cross.

Now, Jensen is replaying the CUDA playbook, but he’s moving one layer up the stack.

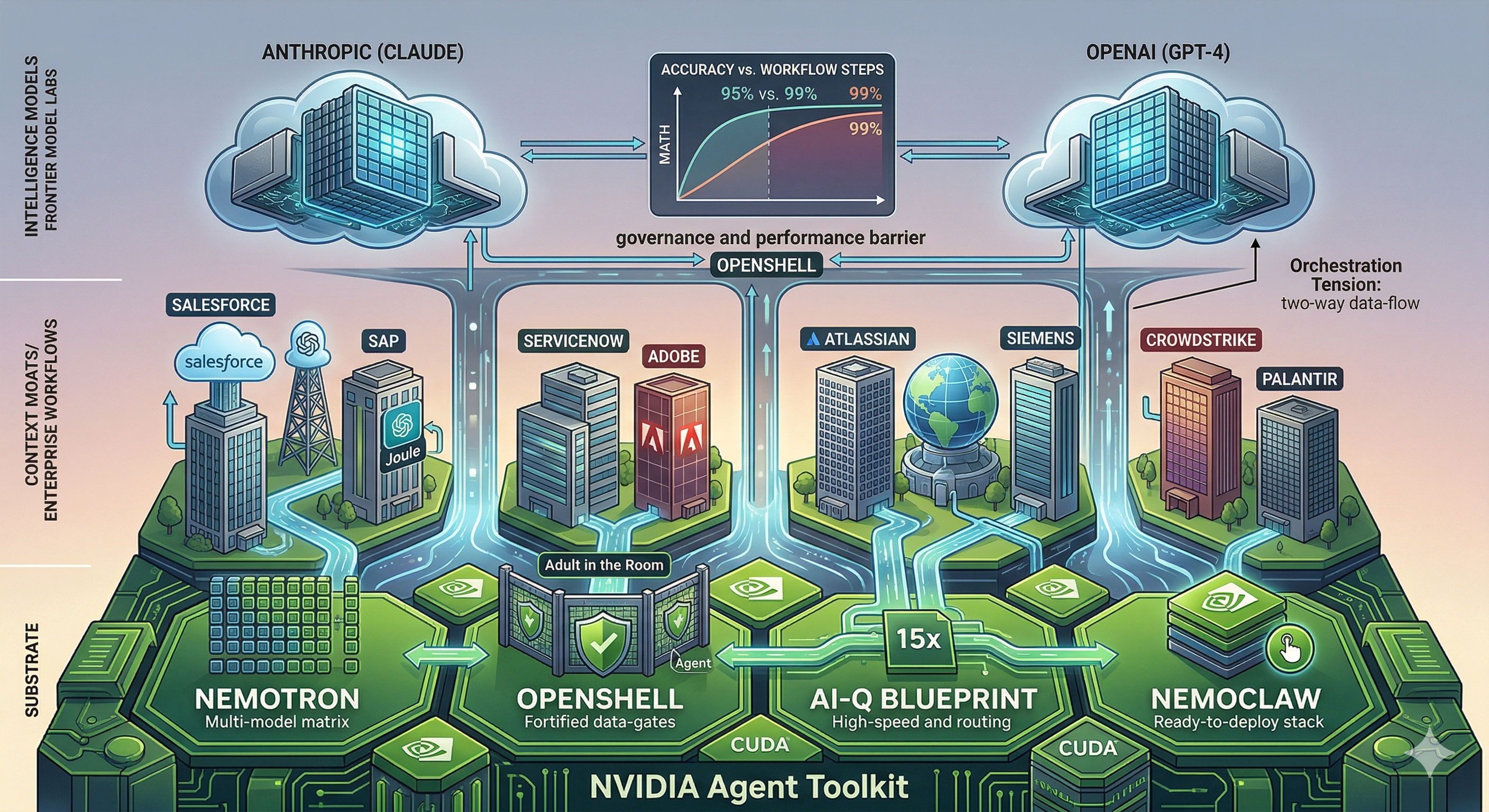

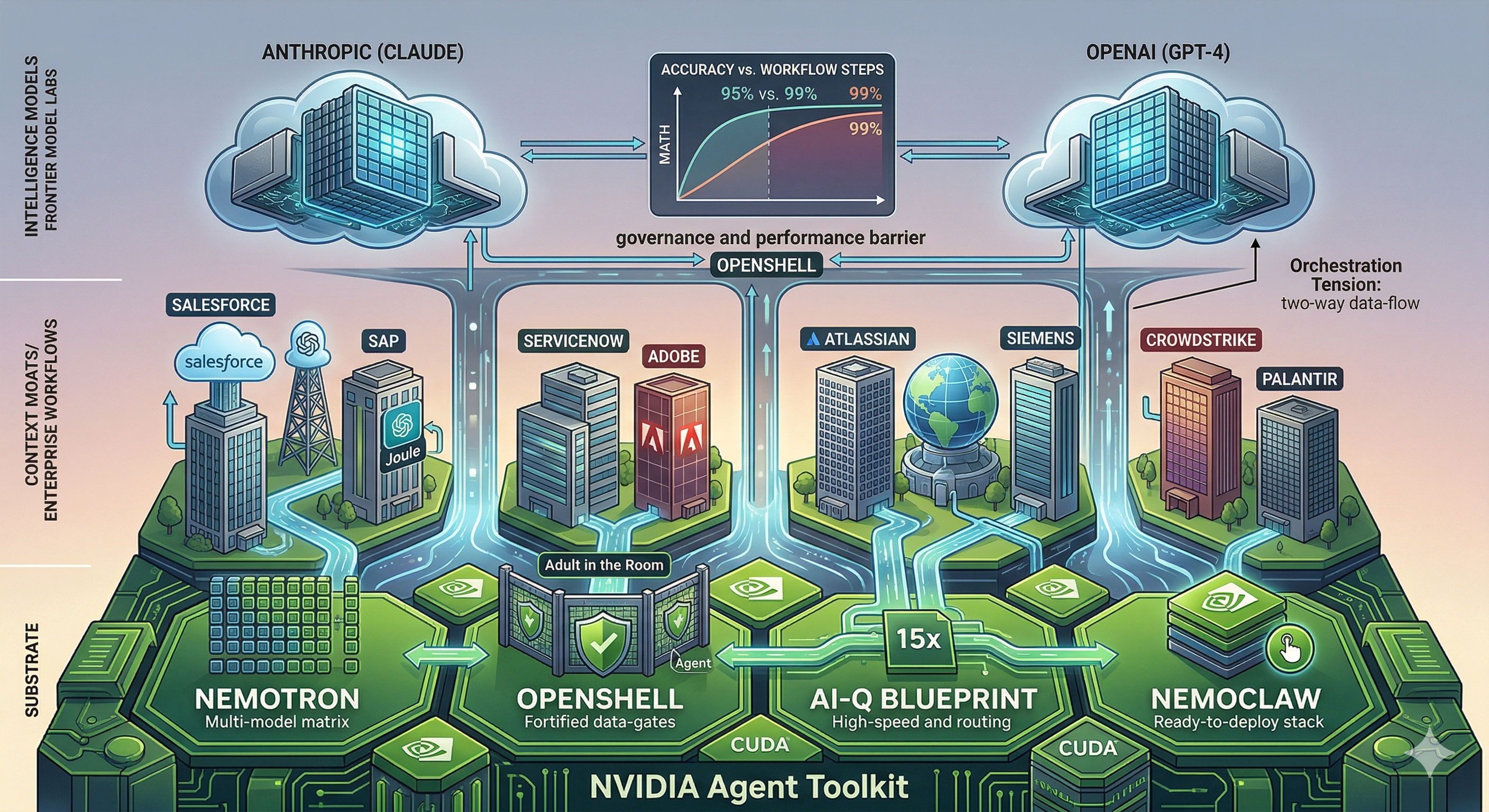

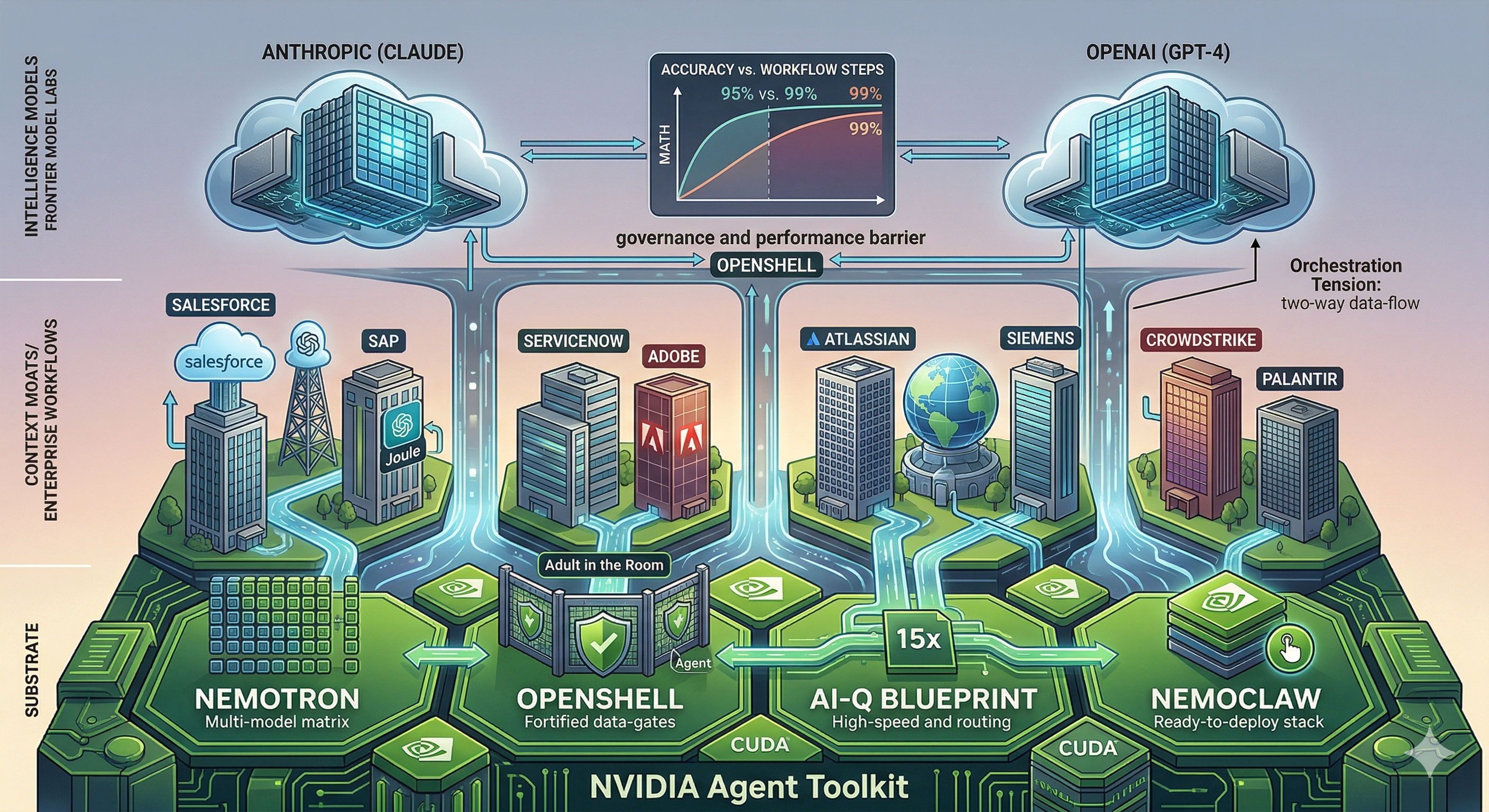

The Agent Toolkit isn’t about owning the “intelligence” (the AI models); it’s about owning the infrastructure beneath every enterprise agent. Whether you’re running GPT-4, Claude or Llama, Nvidia wants to be the plumbing. Not the brain — the substrate.

D’Ornano breaks this down into four critical components that function as a unified, high-performance system:

Nemotron: This isn’t a “frontier model” killer. It’s not trying to out-reason Claude. It’s a lean, mean, open-source model family optimized for the grunt work. It’s about handling 80% of routine enterprise tasks at a fraction of the cost.

OpenShell: This is the “adult in the room.” It’s an open-source runtime that enforces policy-based security and privacy guardrails. This is the governance layer enterprises must have before they let agents run wild on their data.

AI-Q blueprint: This is the connective tissue. We’re talking retrieval pipelines running 15 times faster than conventional methods. It features a hybrid routing system that sends “heavy lifting” to frontier models and “routine” tasks to Nemotron, slashing query costs by over 50%.

NemoClaw: The “Easy Button.” It packages the whole stack — framework, models and security — into a single, deployable, enterprise-grade unit.
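To make the AI-Q routing idea concrete, here is a minimal Python sketch of a cost-aware router. Everything in it is hypothetical: the `needs_planning` flag, the route labels and the per-query prices are illustrative stand-ins, not Nvidia’s API or actual pricing. The savings it computes follow purely from an assumed 10-to-1 price ratio and an 80/20 task mix.

```python
from dataclasses import dataclass

# Hypothetical per-query prices in arbitrary units (not real pricing).
FRONTIER_COST = 1.0
SMALL_MODEL_COST = 0.1


@dataclass
class Task:
    name: str
    needs_planning: bool  # hypothetical flag: does the task need multistep planning?


def route(task: Task) -> str:
    """Send planning-heavy tasks to a frontier model and routine ones to a small open model.

    The returned labels are illustrative strings, not real model endpoints.
    """
    return "frontier" if task.needs_planning else "nemotron"


def blended_cost(tasks: list[Task]) -> float:
    """Total cost of a workload when every task follows the routing rule above."""
    prices = {"frontier": FRONTIER_COST, "nemotron": SMALL_MODEL_COST}
    return sum(prices[route(t)] for t in tasks)


# Hypothetical workload: 80 routine tasks and 20 planning-heavy ones.
workload = [Task(f"routine-{i}", False) for i in range(80)] + [
    Task(f"plan-{i}", True) for i in range(20)
]

baseline = FRONTIER_COST * len(workload)  # every task sent to the frontier model
routed = blended_cost(workload)
print(f"all-frontier: {baseline:.1f}  routed: {routed:.1f}  saving: {1 - routed / baseline:.0%}")
```

With these made-up inputs the routed workload costs 28 units against 100 for the all-frontier baseline. Change the price ratio or the share of planning-heavy tasks and the saving moves with them; the sketch shows the mechanism, not the magnitude Nvidia reports.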

This isn’t just a product launch; it’s an ecosystem forming in real time. The software-as-a-service world is in a “land grab” for control, and SaaS companies are choosing Nvidia as their foundation. Salesforce Inc. is deploying Agentforce on this stack; SAP SE is connecting Joule; ServiceNow Inc. is integrating its Apriel models. From Adobe Inc. to Palantir Technologies Inc., the heavy hitters are voting with their code.

D’Ornano frames the real battle between two architectures:

The Agent Toolkit is Nvidia’s play to make Architecture B win.

Nvidia is betting on Architecture B, but there’s a catch: the orchestration tax. In complex 15-step agent workflows, a model with 95% accuracy per step fails more than half the time (46% success). At 99%, that success rate jumps to 86%.
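The arithmetic behind these figures is easy to check. If each step succeeds with probability p, an n-step workflow succeeds end to end with probability p^n. The sketch below assumes step errors are independent, a simplification of ours: real agent errors can be correlated, and some agents can recover from a failed step. The 90% end-to-end target in the last line is a hypothetical illustration, not a figure from the article.

```python
def workflow_success(per_step_accuracy: float, steps: int) -> float:
    """End-to-end success rate, assuming each step succeeds independently."""
    return per_step_accuracy ** steps


def required_accuracy(target_success: float, steps: int) -> float:
    """Per-step accuracy needed to hit a target end-to-end success rate (inverse of the above)."""
    return target_success ** (1 / steps)


STEPS = 15
for p in (0.95, 0.99):
    print(f"{p:.0%} per step over {STEPS} steps -> {workflow_success(p, STEPS):.0%} end to end")

# Hypothetical target: the per-step accuracy a 90% end-to-end success rate would demand.
print(f"90% end to end needs {required_accuracy(0.90, STEPS):.1%} per step")
```

The loop reproduces the 46% and 86% figures; the inverse shows why only models above roughly 99% per-step accuracy clear a demanding end-to-end bar on long workflows.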

Currently, only frontier models such as Claude and GPT hit those “Goldilocks” numbers for high-level planning. This gives the labs massive pricing power for now.

Here’s the tension she identifies. Nvidia’s own AI-Q design concedes that frontier orchestration tasks still require Claude- or GPT-level quality. And model quality in agentic workflows does not degrade gracefully: small per-step error rates compound across every step of a workflow, which is why the gap between 95% and 99% accuracy matters so much.

The orchestration step is where the frontier labs hold pricing power. That’s where planning, error recovery and multistep coordination take place. If that gap does not close, the model provider becomes the de facto orchestrator through the back door, regardless of who owns the runtime.

The counterargument is that the frontier gap is not fixed. On many tasks, the gap between open-source and frontier models has narrowed from “vast” to “negligible” in under two years. Distillation techniques are accelerating that convergence. And the Nemotron Coalition, which includes LangChain, Cursor and Mistral, is specifically optimizing for agentic tasks.

The “tourists” in the AI space might have missed those two minutes of the keynote, but the enterprise players didn’t. Nvidia is quietly cementing its position as the indispensable foundation for the agentic era.

It isn’t just winning the chip war; it’s redefining the entire AI operating system.

Founded by tech visionaries John Furrier and Dave Vellante, SiliconANGLE Media has built a dynamic ecosystem of industry-leading digital media brands that reach 15+ million elite tech professionals. Our new proprietary theCUBE AI Video Cloud is breaking ground in audience interaction, leveraging theCUBEai.com neural network to help technology companies make data-driven decisions and stay at the forefront of industry conversations.