NEWS

Data-as-a-service will be the ultimate convergence between cloud computing and big data. It should not be confused with database-as-a-service (though hosted databases will be part of data-as-a-service). I see data-as-a-service as consisting of three components:

1) APIs that deliver data to third parties, such as the Klout API.

2) Markets that sell data sets, such as Factual.

3) Hosted tools that provide algorithms for processing data, such as the Google Prediction API.

It’s when all three of these components are in play that things get interesting. The players thus far:

Precog (formerly known as Report Grid) is one of the few existing “true” data-as-a-service providers I’ve seen. Here are the features of its platform as I described them previously:

Precog advisor Ed Roman tells me that the company is planning an open source version of its technology called PrecogDB with a REST API identical to the one used by the hosted service, which should help ease any concerns about vendor lock-in (more on that below).

Infochimps is moving toward this convergence with the recent announcement of its big data service business. The company is expanding beyond its roots as a data marketplace and offering both on-premise and off-premise solutions based on the technology stack its team built to run its own infrastructure.

RedMonk co-founder and analyst Stephen O’Grady suggests this pivot on Infochimps’ part may be due to the concept of the data marketplace being ahead of its time. O’Grady has written about how licensing concerns are holding back data markets by making it harder for companies to both sell and consume data.

But creating this data-as-a-service foundation for data markets might be the crucial component that makes data markets feasible. “Many companies don’t even know what to do with the data they already have, let alone what to do with a data market,” Infochimps CEO Joe Kelly told me in an interview.

There’s plenty of money to be made in selling and brokering data, but for now the real money is in helping companies figure out how to use data. That’s why the third item on my list of requirements for data-as-a-service, hosted tools for processing data, is so crucial.

Microsoft is also working towards building a true data-as-a-service platform, and has all the pieces available in different parts:

1) Bing APIs

2) Windows Azure Data Marketplace

3) Hadoop on Azure

As revealed in a webcast with project founder Alexander Stojanovic last December (see Mary Jo Foley’s notes on the webcast), Microsoft’s port of Hadoop to Azure is part of a broader project at Microsoft codenamed Project Isotope. One aspect of Isotope revealed by Stojanovic is the ability to integrate Microsoft Excel and PowerPivot with Hadoop on Azure; instructions for doing this are already available on TechNet. Another is the integration of Hadoop with the Azure DataMarket.

These points are significant because, if successful, these components could make it possible to easily spin up a Hadoop cluster in the cloud, find external data sets and analyze big data in the familiar Excel interface. If Microsoft adds the ability to use pre-built algorithms (possibly through an algorithm market), this would effectively replace the “Excel Datascope” concept from one of the company’s previous Hadoop alternatives.

If Microsoft brings Bing and its Probase research project into the picture, analysts could have an insanely powerful big data stack at their fingertips, all accessible through spreadsheet applications or their programming language of choice.

Google is also inching towards a full data-as-a-service stack with tools like BigQuery, a closed preview technology that enables users to take advantage of Google’s own proprietary big data tools from within Google Spreadsheets. Coupled with other tools, such as the Google Prediction API mentioned above, Google is making a number of interesting capabilities available to users, and once these are better integrated the possibilities will grow considerably.

Bimeanalytics already offers a business analytics and data visualization service based on BigQuery, and you can expect other Google partners to follow.

The real drawback to using Google’s infrastructure and algorithms is that, as of now, they are a complete black box. Customers have no idea how Google’s algorithms work and can’t customize them to meet their needs. If you built your own big data cluster and used something like the Apache Mahout libraries for predictions and machine learning (or wrote your own), you’d have to do a lot more work, but you’d also have a better understanding of what the algorithms are doing and be free of vendor lock-in.

I’m fascinated by what 1010data is doing. 1010data offers a cloud-based spreadsheet interface for analyzing large data sets with none of the overhead, much like what Microsoft is promising with its Excel/Hadoop integration and Google is promising with BigQuery (see also: Embracing the Spreadsheet: Two Approaches to Self-Service Business Intelligence). It also provides the ability to buy and sell data, making it, along with Precog, an example of a “true” data-as-a-service provider.

Check out the video “An Analyst’s Tale” from 1010data on Vimeo (it also ran as an advertisement on our show theCube during Strata).

There’s still a big tension between the two major big data vendor business models:

1) Provide a complex, mostly open source solution and monetize through extensive professional services.

2) Provide a mostly proprietary but much easier to use solution and monetize through licenses or subscription fees.

I’ve also written about the trouble with black boxes: they obfuscate what’s really going on and make it difficult to adapt as needs change. Instead of empowering customers to adapt the technologies on their own, they create a reliance on vendors to update products and keep up with the times. The ideal solution provides the right mix of usability, convenience, transparency and portability.


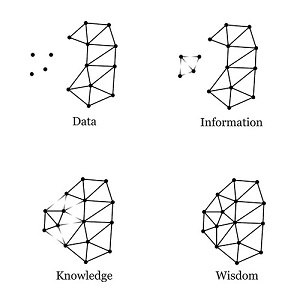

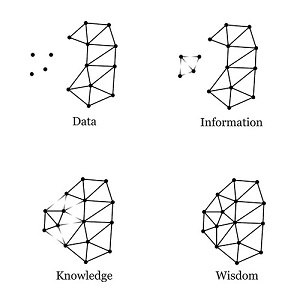

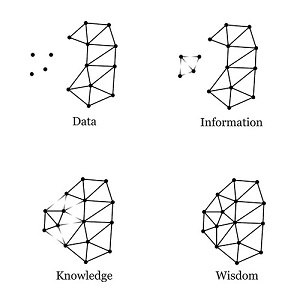

Illustration by Michael Kreil
