NEWS

Virtual reality headsets made by Oculus, HTC, Sony and others can deliver powerful audio-visual immersion, but one aspect of immersive user interfaces, touch, is still literally out of reach. That’s what Microsoft Research aims to change.

This week, Microsoft Corp.’s research arm is publishing a paper on a pair of new prototype systems that provide “haptic feedback,” or touch feedback, in virtual reality. Researchers there have produced two prototype controller systems that “push back” when a person interacts with a virtual object, according to the paper, which will be presented at the Association for Computing Machinery’s Symposium on User Interface Software and Technology (UIST) this week in Tokyo.

The current generation of haptic feedback in controllers works by buzzing or knocking in the user’s hands (or by restricting motion) to convey information. A growing number of alternative approaches are also in development.

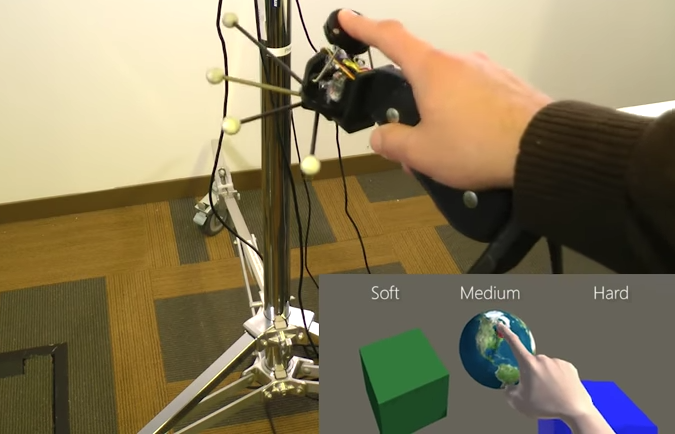

What sets Microsoft’s new systems apart is their use of servos that push against the fingertip to provide force feedback and a sense of texture.

Online publication MSPoweruser published a video describing and demonstrating the technologies at work.

The first system, NormalTouch, uses a set of servos and rigid wires to push and tilt a surface beneath the fingertip. This means the user can “feel” both the force (or elasticity) and the angle of incidence when pushing against a virtual object. The second system, TextureTouch, uses an array of rods beneath the fingertip that rise and fall to match the topography of the virtual object under the finger, forming a rough representation of its surface.

The prototypes are bulky and need a lot of space to work, but they point to one or two avenues that VR haptics could follow. It’s hard to say whether either approach will become a standard, but both demonstrate capabilities that current controllers do not offer.

VR controller design is still in flux as different manufacturers bring their designs to market. Much as console controllers evolved over successive generations, VR controllers are adapting to the free-gesturing nature of VR interaction and to different types of feedback. However, nothing yet developed for consumer or business users comes close to the fidelity of these prototypes.

Many professions could benefit from more accurate, higher-fidelity VR feedback in training, production and even robotic telepresence.

For example, remote training that depends on fine motor skills could use something like the NormalTouch prototype to give trainees a hands-on feel for the equipment or materials of a particular profession: the angle and tension of pipes or conduits for technicians, or the rigidity of flesh and bone for veterinarians and surgeons.

Finally, there’s the psychological element of “touch” when interacting with 3D-rendered museum objects, or perhaps even another person in a VR social space. A VR controller that can give a sense of physicality to another avatar or object in a virtual space adds weight to the “reality” part of virtual reality.

For now, these systems cannot convey weight or inertia (let alone touch across a whole grasping hand), so controllers that deliver high-fidelity sensation for grabbing and moving objects are still waiting for a breakthrough.