EMERGING TECH

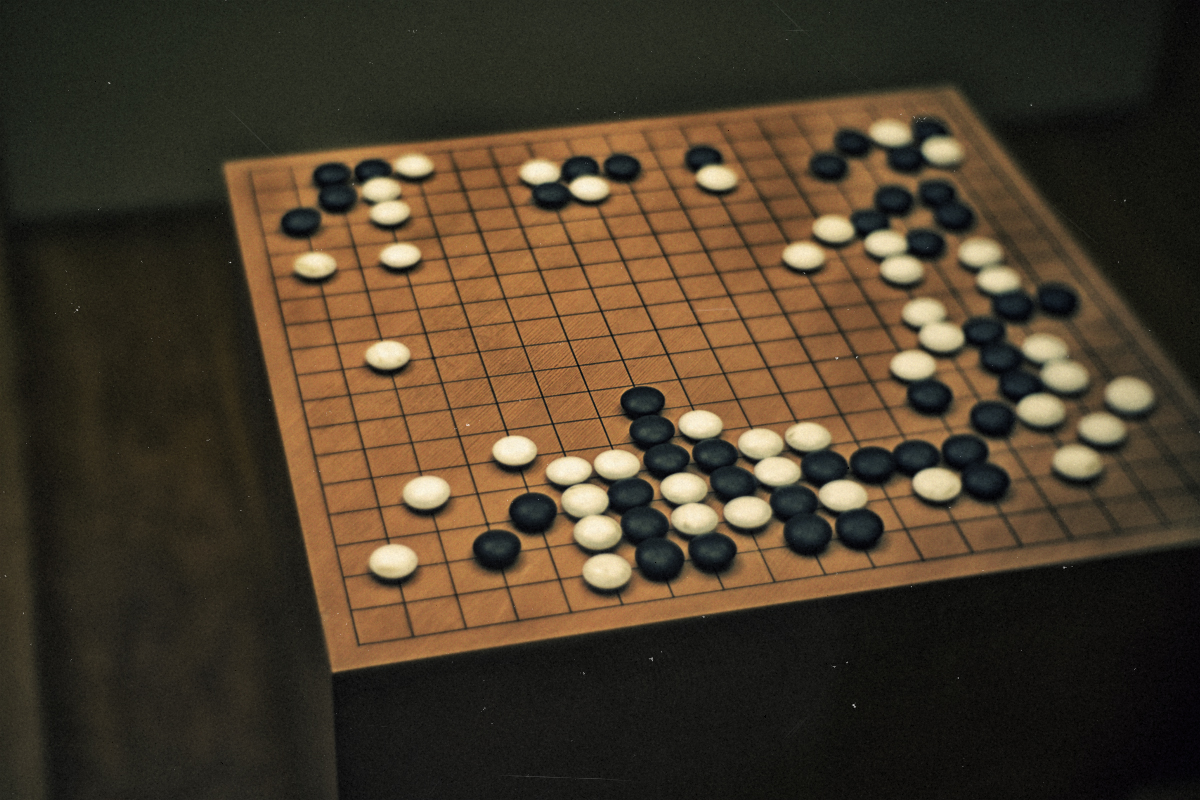

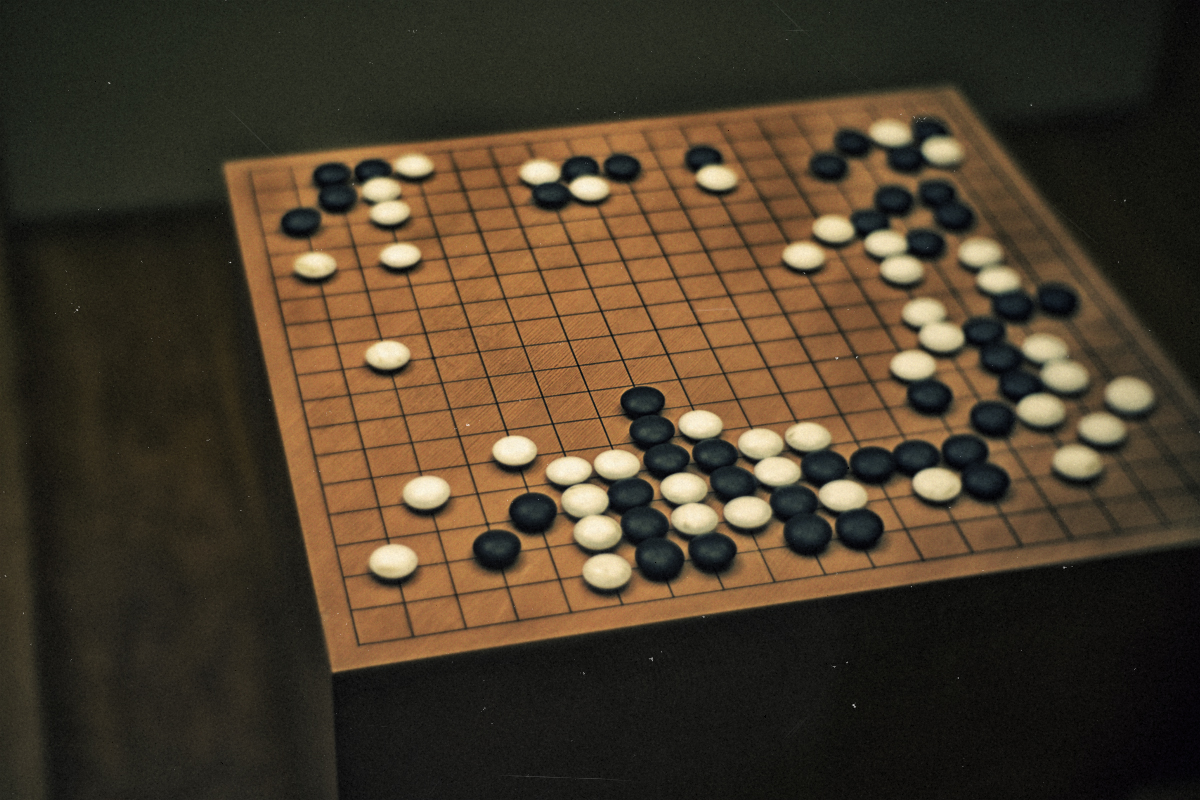

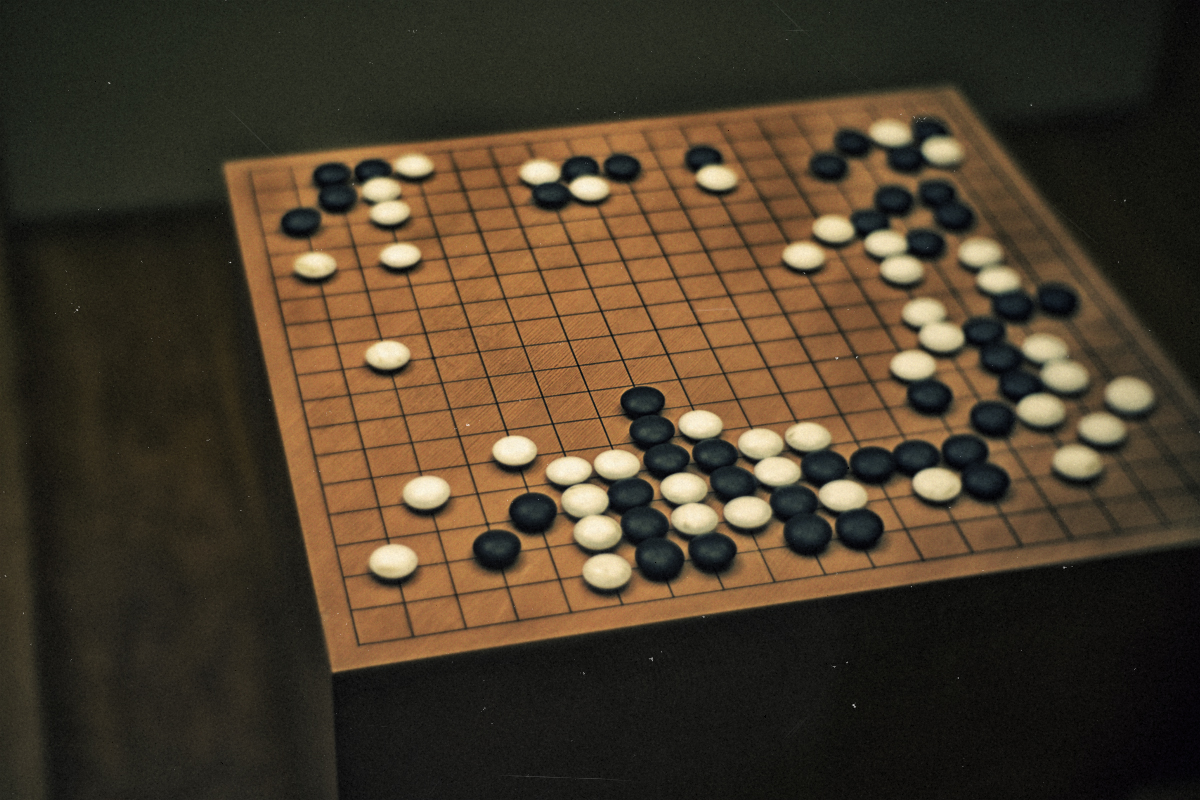

Last year, Google Inc.’s artificial intelligence unit DeepMind proved that its AlphaGo AI was a match for the best human Go players in the world. Now, it looks like AlphaGo’s journey did not stop there.

DeepMind Chief Executive Demis Hassabis today confirmed on Twitter that a new version of AlphaGo has been secretly playing against human Go players online in a series of unofficial tests, and that the AI defeated more than 50 of the world’s top players:

“We’ve been hard at work improving AlphaGo, and over the past few days we’ve played some unofficial online games at fast time controls with our new prototype version, to check that it’s working as well as we hoped,” Hassabis said. “We thank everyone who played our accounts Magister(P) and Master(P) on the Tygem and FoxGO servers, and everyone who enjoyed watching the games too! We’re excited by the results and also by what we and the Go community can learn from some of the innovative and successful moves played by the new version of AlphaGo.”

“Having played with AlphaGo, the great grandmaster Gu Li posted that, ‘Together humans and AI will soon uncover the deeper mysteries of Go.’ Now that our unofficial testing is complete, we’re looking forward to playing some official, full-length games later this year in collaboration with Go organisations and experts, to explore the profound mysteries of the game further in this spirit of mutual enlightenment. We hope to make further announcements soon!”

The game of Go, which is roughly 2,500 years old, is famously one of the most complex games in the world, with more possible board positions than there are atoms in the observable universe. That complexity made the game a notoriously difficult challenge for artificial intelligence researchers, one that AlphaGo finally met using deep learning and neural network methods.
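For readers who want to check the comparison with atoms in the universe, here is a rough back-of-the-envelope sketch in Python. It assumes a standard 19x19 board where each point is empty, black, or white, which gives only an upper bound because it also counts arrangements that the rules of Go do not allow. The figure of 10^80 atoms is a commonly cited rough estimate for the observable universe, used here as an assumption, and the function and constant names are purely illustrative.

```python
# Rough upper bound on Go board configurations (illustrative sketch only).
BOARD_POINTS = 19 * 19       # 361 intersections on a standard board
STATES_PER_POINT = 3         # each point is empty, black, or white
ATOMS_ESTIMATE = 10 ** 80    # assumption: commonly cited rough estimate


def board_configuration_upper_bound(points=BOARD_POINTS):
    """Count every colouring of the board, legal or not (an over-count)."""
    return STATES_PER_POINT ** points


upper_bound = board_configuration_upper_bound()
digits = len(str(upper_bound))

print(f"Upper bound has {digits} digits")
print(f"Exceeds the atom estimate: {upper_bound > ATOMS_ESTIMATE}")
```

Even allowing for the arrangements that are not legal positions, the count is so far beyond the atom estimate that trying every possibility by brute force is not a realistic approach.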

AlphaGo’s accomplishment was so impressive that it ended up on the cover of the journal Nature not once but twice in the last year.
