EMERGING TECH

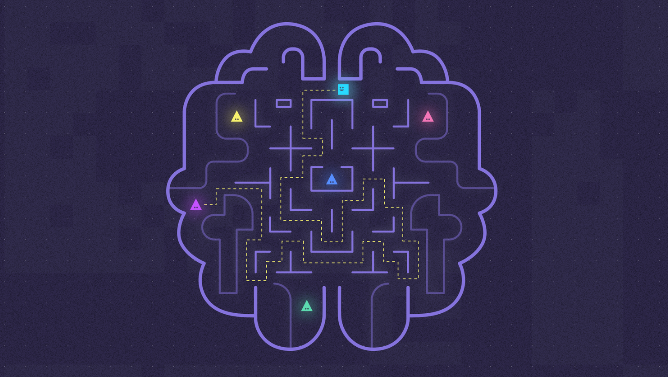

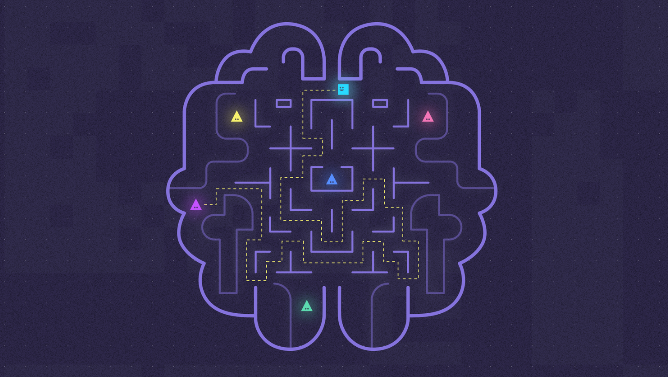

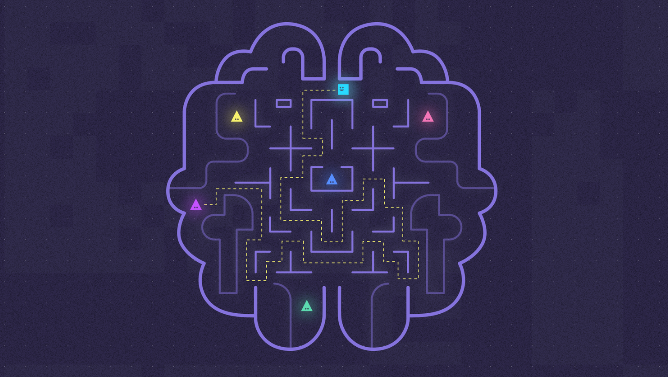

Researchers at Google Inc.’s DeepMind project report that their artificial intelligence has just become a little more human: It now remembers things.

One hurdle in advancing AI is that a system can be trained to complete a task, but retaining what it has learned and applying that expertise later is another matter. The loss of earlier learning is known as “catastrophic forgetting,” a problem the researchers at DeepMind said they are getting closer to solving.

In a blog post, DeepMind explained, “When a new task is introduced, new adaptations overwrite the knowledge that the neural network had previously acquired.”

Simply put, you can train an AI to recognize something such as the features of dogs, but if you want it to switch to recognizing humans, it has to be retrained and, unlike a person, will not retain its knowledge of dogs. The same goes for gameplay: an AI created to play poker needs to be overwritten in order to become a chess master.

The researchers at DeepMind said they drew on neuroscience research into how the brain retains what has served it best in the past, much as animals remain aware of certain dangers because their brains have retained the most important bits of data.

James Kirkpatrick at DeepMind said the team had trained its AI to do much the same: to retain important pieces of data and to overwrite what is not important when faced with a new task. “If the network can reuse what it has learned then it will do,” Kirkpatrick told The Guardian.
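The idea of protecting what matters while letting the rest be overwritten can be illustrated with a toy example. The article gives no equations, so the sketch below is an assumption and not necessarily the researchers' formulation: each weight gets an importance score, and learning a new task is penalized for moving important weights away from their old values. Every name and number here (`train_on_new_task`, `importance`, `strength`, the two-weight "network") is hypothetical.

```python
# Toy sketch (assumed, not DeepMind's code): two weights were tuned for an
# old task; a new task pulls both toward different values. An importance
# score per weight decides how strongly the old value is protected.

def penalized_gradient(weights, new_target, old_weights, importance, strength):
    """Gradient of the new-task loss plus an importance-weighted penalty.

    Loss = sum((w - new_target)^2)
         + 0.5 * strength * sum(importance * (w - old_weight)^2)
    """
    return [
        2.0 * (w - t) + strength * f * (w - w0)
        for w, t, w0, f in zip(weights, new_target, old_weights, importance)
    ]


def train_on_new_task(old_weights, new_target, importance,
                      strength=1.0, lr=0.005, steps=5000):
    """Plain gradient descent on the penalized loss, starting from the old weights."""
    weights = list(old_weights)
    for _ in range(steps):
        grad = penalized_gradient(weights, new_target, old_weights,
                                  importance, strength)
        weights = [w - lr * g for w, g in zip(weights, grad)]
    return weights


old_weights = [1.0, 1.0]     # values learned on the old task
new_target = [-1.0, -1.0]    # values the new task would prefer
importance = [100.0, 0.0]    # first weight mattered for the old task, second did not

learned = train_on_new_task(old_weights, new_target, importance)
print(learned)
```

In this toy setup the first weight stays close to its old value because moving it is expensive, while the second, marked unimportant, is free to be overwritten by the new task.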

The team tested this by training the AI to play 10 classic Atari games sequentially, with each game requiring different strategies but sometimes calling for similar skills. After playing the games, which included memorable ones such as Space Invaders and Defender, the AI learned to play seven of the 10 as well as a human could.

At the same time, the researchers said that although they have shown the AI can learn games sequentially, that does not mean it plays them any better as a result.
