NEWS

Understanding human language is still one of the most difficult tasks for computers, one that tech giants from Google Inc. to Microsoft Corp., along with many startups and researchers, are struggling to solve.

Today, Google is adding one more tool to a growing arsenal of artificial intelligence software intended to unlock the secrets of natural language understanding, or NLU. The search company is releasing SyntaxNet, a neural network framework for building NLU systems, as open source.

It will be part of Google’s TensorFlow open-source library of software code, which lets developers and researchers build machine learning models for their own services. SyntaxNet includes the code needed to train new language models on a company’s or researcher’s own data.

More specifically, SyntaxNet will include an English parser, a program that analyzes the grammatical structure of sentences. It’s called–no kidding–Parsey McParseface. That’s a geeky takeoff on the British polar research ship that was going to be christened Boaty McBoatface following an online poll, before cooler heads in the Science Ministry instead named it after naturalist David Attenborough. (Yes, apparently Google was having a spot of trouble coming up with a good name.)

Anyway, SyntaxNet has the potential to make Google’s own search, email, translation and other services more flexible and useful. It also might power new services and devices–maybe even its recently rumored competitor to the Amazon Echo voice assistant, called Chirp. The open source version now opens up those possibilities to other researchers and companies.

Google claims the system beats the previous best model, also its own. On well-formed text such as news articles, the new model is about 94 percent accurate at recovering the grammatical dependencies between words, compared with what Google estimates is about 96 to 97 percent for trained linguists.

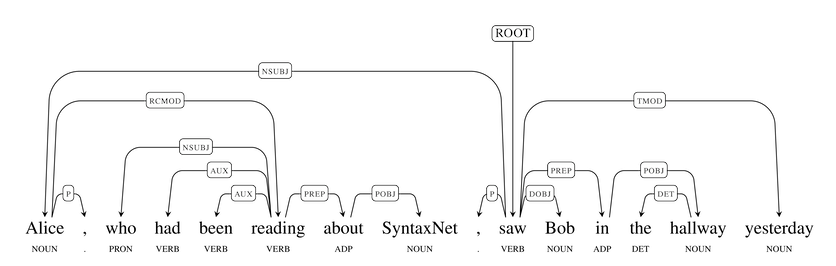

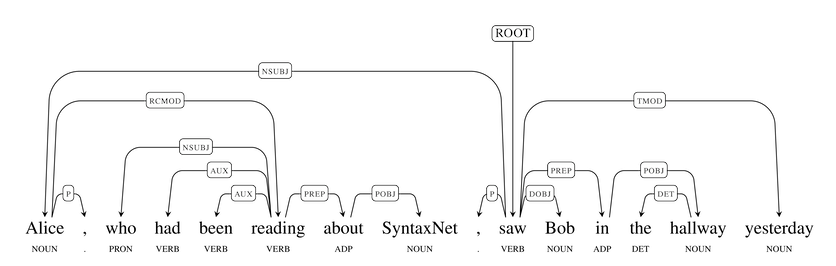

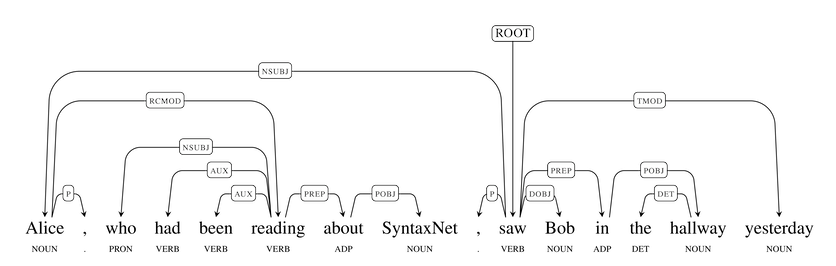

Like other parsers, SyntaxNet predicts what part of speech each word in a sentence is likely to be as well as the relationships between words. For example, when the parser analyzes the meaning of the sentence, “Alice, who had been reading about SyntaxNet, saw Bob in the hallway yesterday” (pictured above), it allows related questions to be answered, such as “Whom did Alice see?” or “What had Alice been reading about?”
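To make that concrete, here is a minimal sketch of what a parser's output might look like and how a question could be answered from it. It is not SyntaxNet's actual output format: the `Token` class, the `whom_did_see` helper, and the hand-annotated tags and relation labels (`nsubj`, `dobj` and so on, loosely following common dependency-grammar naming) are assumptions made for illustration, and the sentence is a shortened version of the example above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Token:
    index: int   # 1-based position in the sentence
    word: str
    pos: str     # part-of-speech tag
    head: int    # index of the governing word; 0 marks the root
    label: str   # dependency relation to the head


# Hand-annotated, simplified parse of "Alice saw Bob in the hallway yesterday".
# Illustrative only: these tags and arcs were written by hand, not produced by a parser.
PARSE: List[Token] = [
    Token(1, "Alice", "NOUN", 2, "nsubj"),
    Token(2, "saw", "VERB", 0, "root"),
    Token(3, "Bob", "NOUN", 2, "dobj"),
    Token(4, "in", "ADP", 2, "prep"),
    Token(5, "the", "DET", 6, "det"),
    Token(6, "hallway", "NOUN", 4, "pobj"),
    Token(7, "yesterday", "NOUN", 2, "tmod"),
]


def whom_did_see(parse: List[Token], subject: str) -> List[str]:
    """Answer 'Whom did <subject> see?' by reading the direct object of 'saw'."""
    for verb in parse:
        if verb.word != "saw":
            continue
        subjects = [t.word for t in parse
                    if t.head == verb.index and t.label == "nsubj"]
        if subject in subjects:
            return [t.word for t in parse
                    if t.head == verb.index and t.label == "dobj"]
    return []


print(whom_did_see(PARSE, "Alice"))
```

Once the subject and object relations are explicit, the question reduces to a lookup, which is why an accurate parse is such a useful building block.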

But human language can be frustratingly ambiguous. A 20- or 30-word sentence can have as many as tens of thousands of possible syntactic structures. Humans can generally sort them out without much conscious thought, so in a sentence such as “Alice drove down the street in her car,” nobody would interpret this to mean there was a street inside her car. But a machine needs to figure it out.

That’s where the neural network SyntaxNet uses comes in. As Google senior staff research scientist Slav Petrov explains in his blog post and in an academic paper with colleagues, a sentence is processed from left to right, and connections, or dependencies, between words are added as each word is considered. The neural network scores the various possibilities for plausibility. Using an algorithm called beam search, the parser keeps several partial hypotheses alive at each step and discards one only when higher-ranked hypotheses have displaced it.
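The idea can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Google's implementation: it assumes a simple shift/left-arc/right-arc transition system, and it swaps the neural network for a hypothetical hand-written score table (`TOY_SCORES`). The function names are likewise invented for the example.

```python
from typing import Dict, List, Tuple

Arc = Tuple[int, int]  # (head index, dependent index)
# A hypothesis: (stack, next buffer position, arcs so far, total score)
State = Tuple[Tuple[int, ...], int, Tuple[Arc, ...], float]

# Hypothetical plausibility scores for (head, dependent) word pairs.
# A real parser would get these from a trained neural network.
TOY_SCORES: Dict[Tuple[str, str], float] = {
    ("saw", "Alice"): 2.0,
    ("saw", "Bob"): 2.0,
}


def arc_score(words: List[str], head: int, dep: int) -> float:
    """Score one candidate arc; unknown pairs are penalized."""
    return TOY_SCORES.get((words[head], words[dep]), -1.0)


def successors(state: State, words: List[str]) -> List[State]:
    """Apply every legal transition to one partial hypothesis."""
    stack, nxt, arcs, score = state
    out: List[State] = []
    if nxt < len(words):
        # SHIFT: move the next word from the buffer onto the stack.
        out.append((stack + (nxt,), nxt + 1, arcs, score))
    if len(stack) >= 2:
        below, top = stack[-2], stack[-1]
        # LEFT-ARC: the top word becomes the head of the word below it.
        out.append((stack[:-2] + (top,), nxt, arcs + ((top, below),),
                    score + arc_score(words, top, below)))
        # RIGHT-ARC: the word below becomes the head of the top word.
        out.append((stack[:-1], nxt, arcs + ((below, top),),
                    score + arc_score(words, below, top)))
    return out


def beam_parse(words: List[str], beam_size: int = 4) -> List[Arc]:
    """Keep the beam_size best partial parses after every transition."""
    beam: List[State] = [((), 0, (), 0.0)]
    # In this transition system a full parse of n words takes 2n - 1 steps.
    for _ in range(2 * len(words) - 1):
        candidates = [s for state in beam for s in successors(state, words)]
        candidates.sort(key=lambda s: s[3], reverse=True)
        beam = candidates[:beam_size]  # lower-ranked hypotheses are dropped
    return sorted(beam[0][2])


print(beam_parse(["Alice", "saw", "Bob"]))
```

For the three-word sentence, the highest-scoring hypothesis attaches both "Alice" (index 0) and "Bob" (index 2) to "saw" (index 1). A wider beam costs more computation but makes it less likely that the correct parse is discarded early.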

If it sounds complicated, it is. Google says it’s one of the most complex models trained with TensorFlow to date. And all this is only the start. The models come close to human performance only on relatively straightforward text. On a database of sentences drawn randomly from the Web, Parsey hits only 90 percent accuracy.

Nonetheless, even that could be useful in many applications. Google is hardly alone here, of course. Microsoft with Cortana, Apple with Siri, Facebook with its M voice chatting tool and the new startup Viv are all contenders in natural language understanding.

As for Google’s newly open-sourced tool? With a name like Parsey McParseface, it better be good.
