AI

Edge artificial intelligence startup AIStorm wasted no time getting its first specialized sensor chips out the door, announcing its initial products today, just two weeks after closing an early-stage funding round worth $13.2 million.

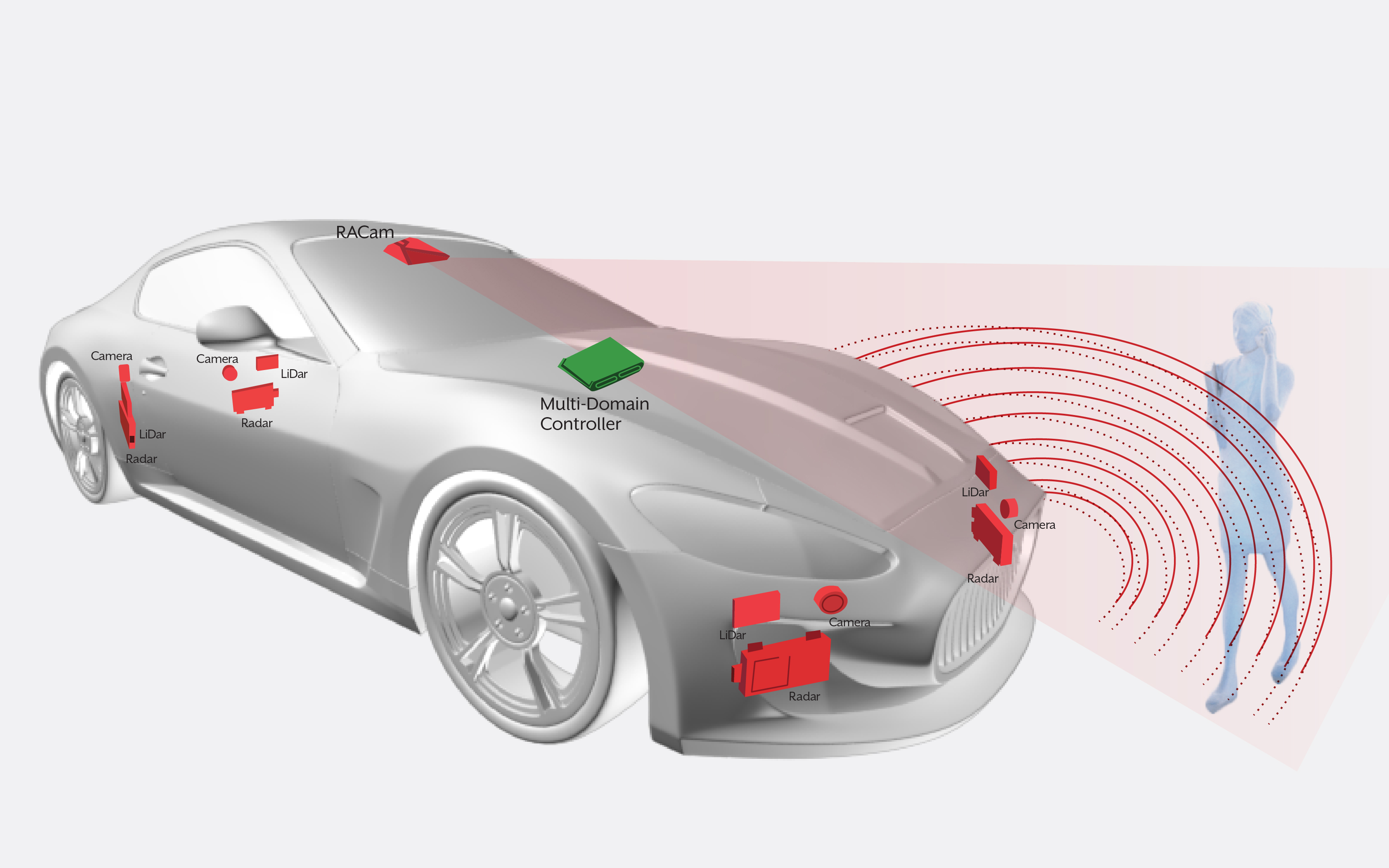

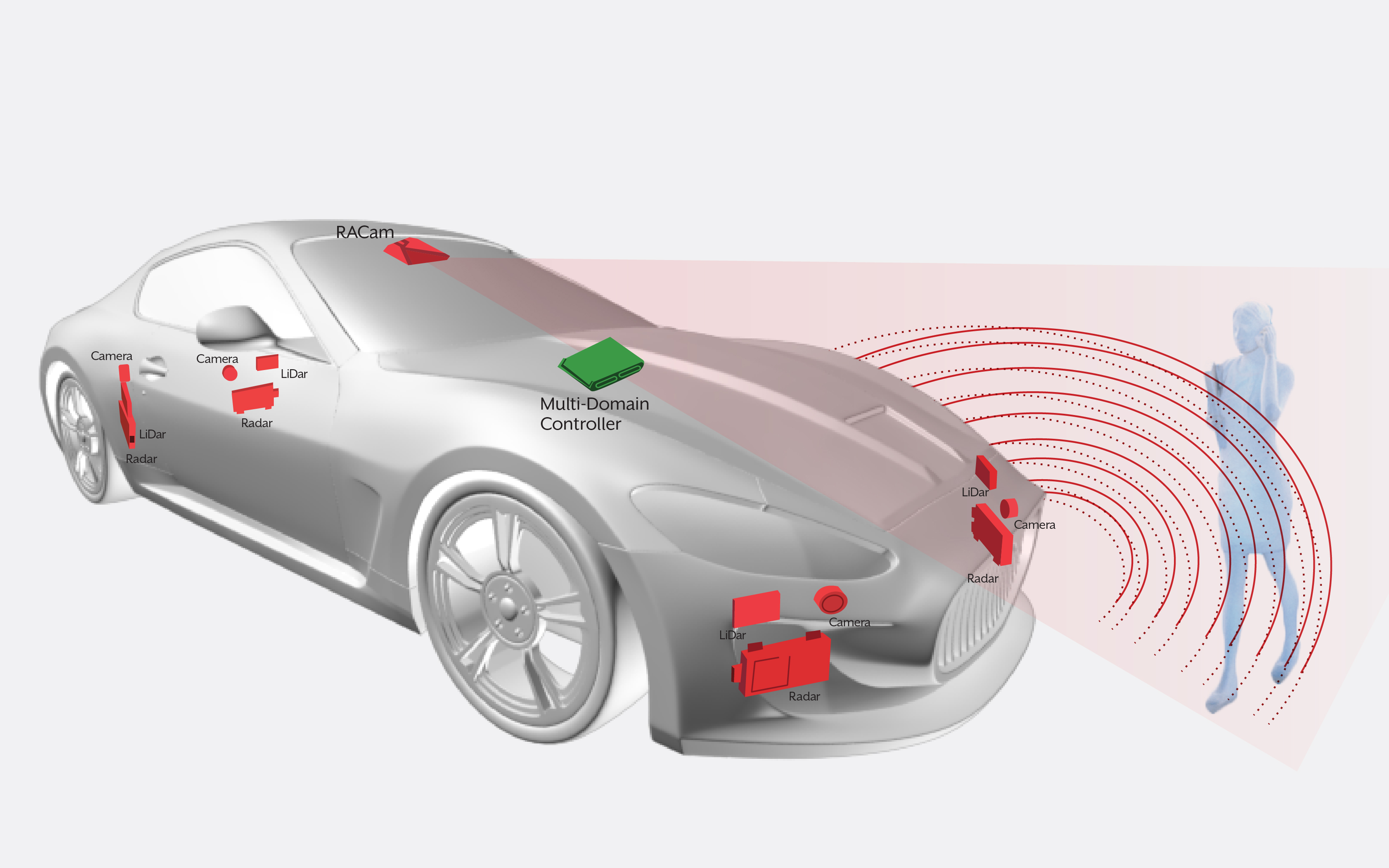

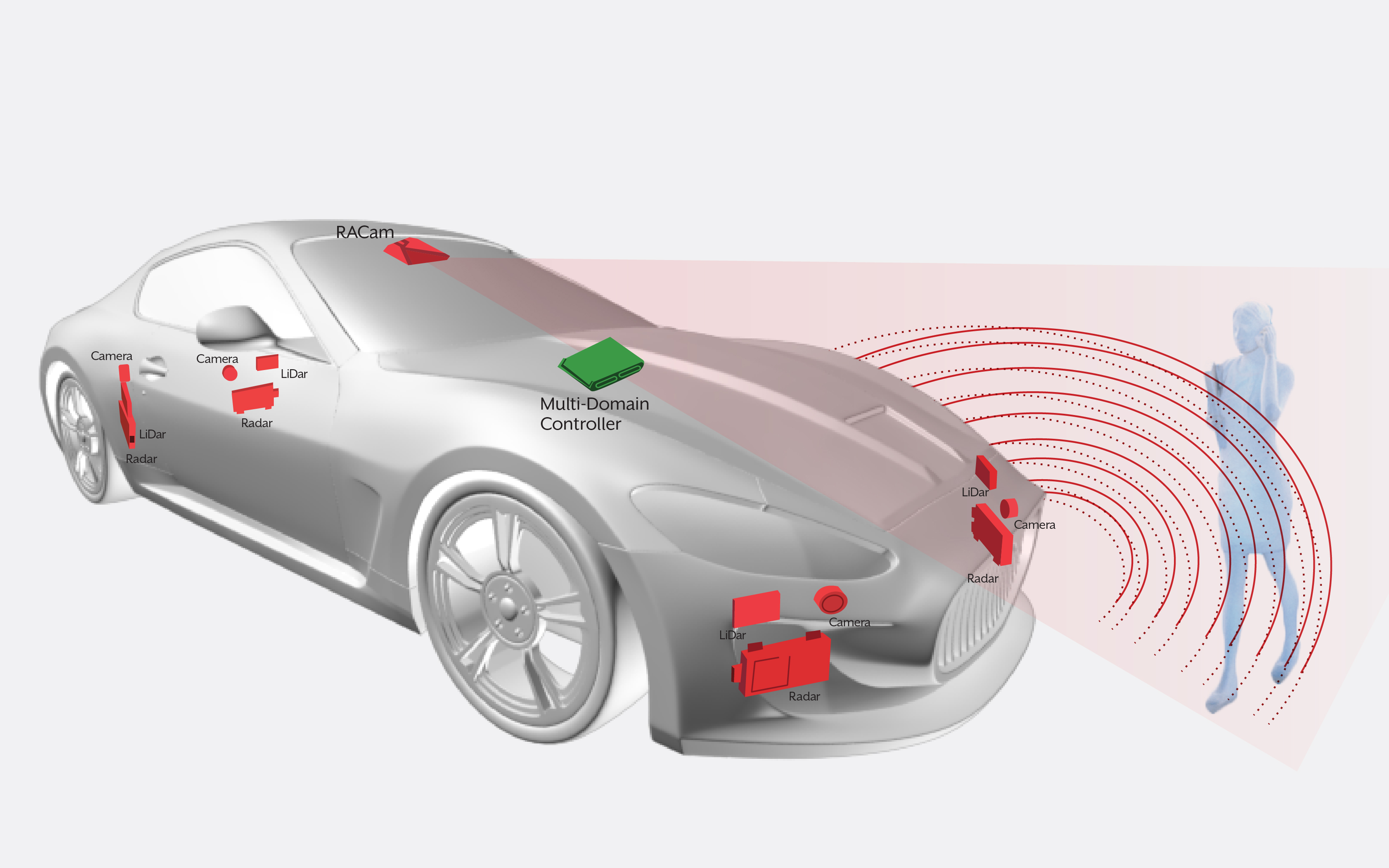

The company sells an “AI-in-Sensor” system-on-a-chip that enables faster processing of AI problems at the edge of the network. The processors are designed to be integrated within sensors that are embedded into mobile devices, “internet of things” machinery and self-driving cars, processing data directly within them.

The company said its chips are especially useful for carrying out AI computations at the network edge, thanks to their unique ability to process information in its raw analog form before it’s encoded into a digital format.

That’s a useful trick because the conventional approach converts sensor data into digital form first and then processes it on powerful graphics processing units. Those GPUs consume a lot of power, and the extra conversion and processing steps take time, making them unsuitable for use at the edge, according to the company.

AIStorm said its low-power SoCs are a better option because they eliminate the need to convert data into a digital format. Instead, they process information directly from sensors while it’s still in its native analog form. The processed analog data can then be used to train AI and machine learning models for a wide range of tasks, the company said.

“By combining the processing chip with the sensor, we can deliver efficient and extremely fast processing of sensor data right at the edge,” David Schie, co-founder and chief executive officer of AIStorm, told SiliconANGLE. “This offers two huge benefits. It reduces response latency and it eliminates the need to send data over the network to a different processor for machine learning and decision making.”

At the Mobile World Congress in Barcelona today, AIStorm announced two chips, aimed at mobile and wearable devices and at advanced driver-assistance systems.

AIStorm’s IoT Vision and Waveform chips are designed for devices such as smartphones, cameras, wearables and other IoT applications. They can help to perform tasks such as fingerprint sensing, gesture control, heart monitoring and heart-based identification, occupancy sensing, facial recognition, voice input, drone imaging and image stabilization, the company said.

Meanwhile, AIStorm’s Advanced Driver Assistance System is designed to help vehicles process the data they gather so they can avoid collisions and get passengers to their destinations safely.

“Many industry players are focused on deep submicron GPU-based solutions to accelerate AI at the edge,” Schie said in a statement. “We believe that such solutions are not compatible with the real-time processing, power and low-cost requirements of these applications.”
