AI

AI

AI

AI

AI

AI

Ambitious artificial intelligence computing startup Cerebras Systems Inc. is raising the stakes in its battle against Nvidia Corp., launching what it says is the world’s fastest AI inference service, and it’s available now in the cloud.

AI inference refers to the process of running live data through a trained AI model to make a prediction or solve a task. Inference services are the workhorse of the AI industry, and according to Cerebras, it’s the fastest-growing segment too, accounting for about 40% of all AI workloads in the cloud today.

However, existing AI inference services don’t appear to satisfy the needs of every customer. “We’re seeing all sorts of interest in how to get inference done faster and for less money,” Chief Executive Andrew Feldman told a gathering of reporters in San Francisco Monday.

The company intends to deliver on this with its new “high-speed inference” services. It believes the launch is a watershed moment for the AI industry, saying that 1,000-tokens-per-second speeds it can deliver is comparable to the introduction of broadband internet, enabling game-changing new opportunities for AI applications.

Cerebras is well-equipped to offer such a service. The company is a producer of specialized and powerful computer chips for AI and high-performance computing or HPC workloads. It has made a number of headlines over the past year, claiming that its chips are not only more powerful than Nvidia’s graphics processing units, but also more cost-effective. “This is GPU-impossible performance,” declared co-founder and Chief Technology Officer Sean Lie.

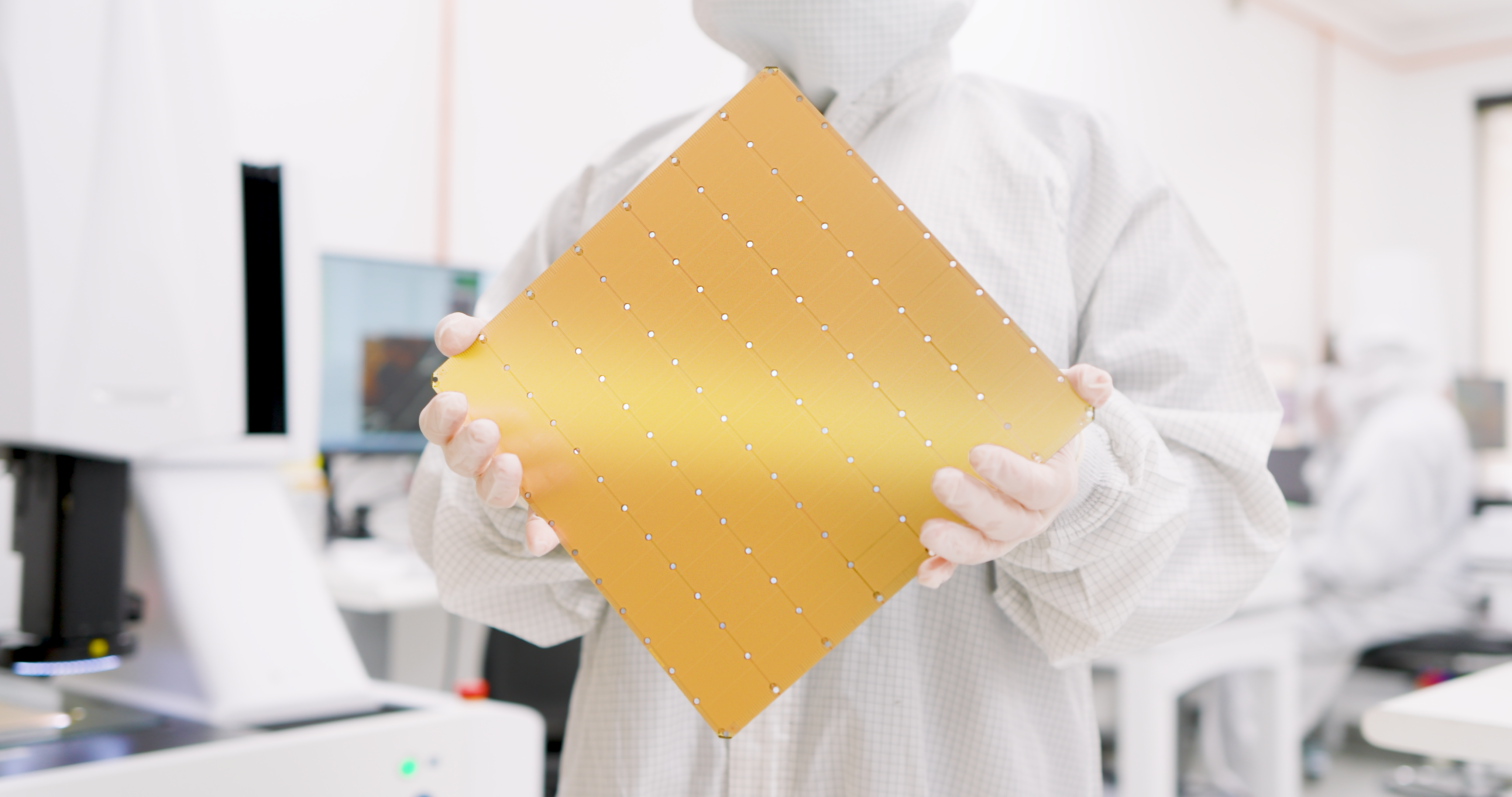

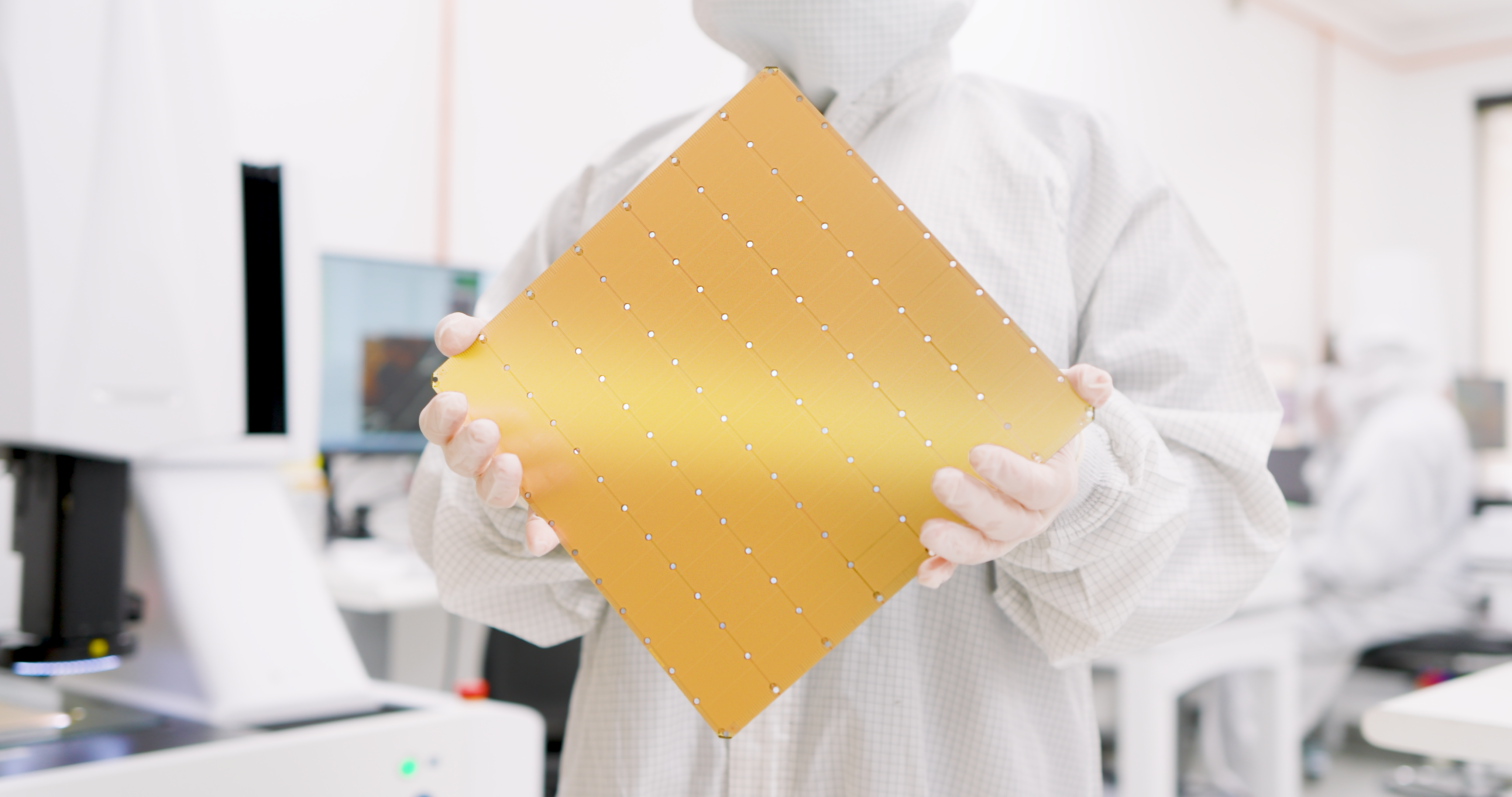

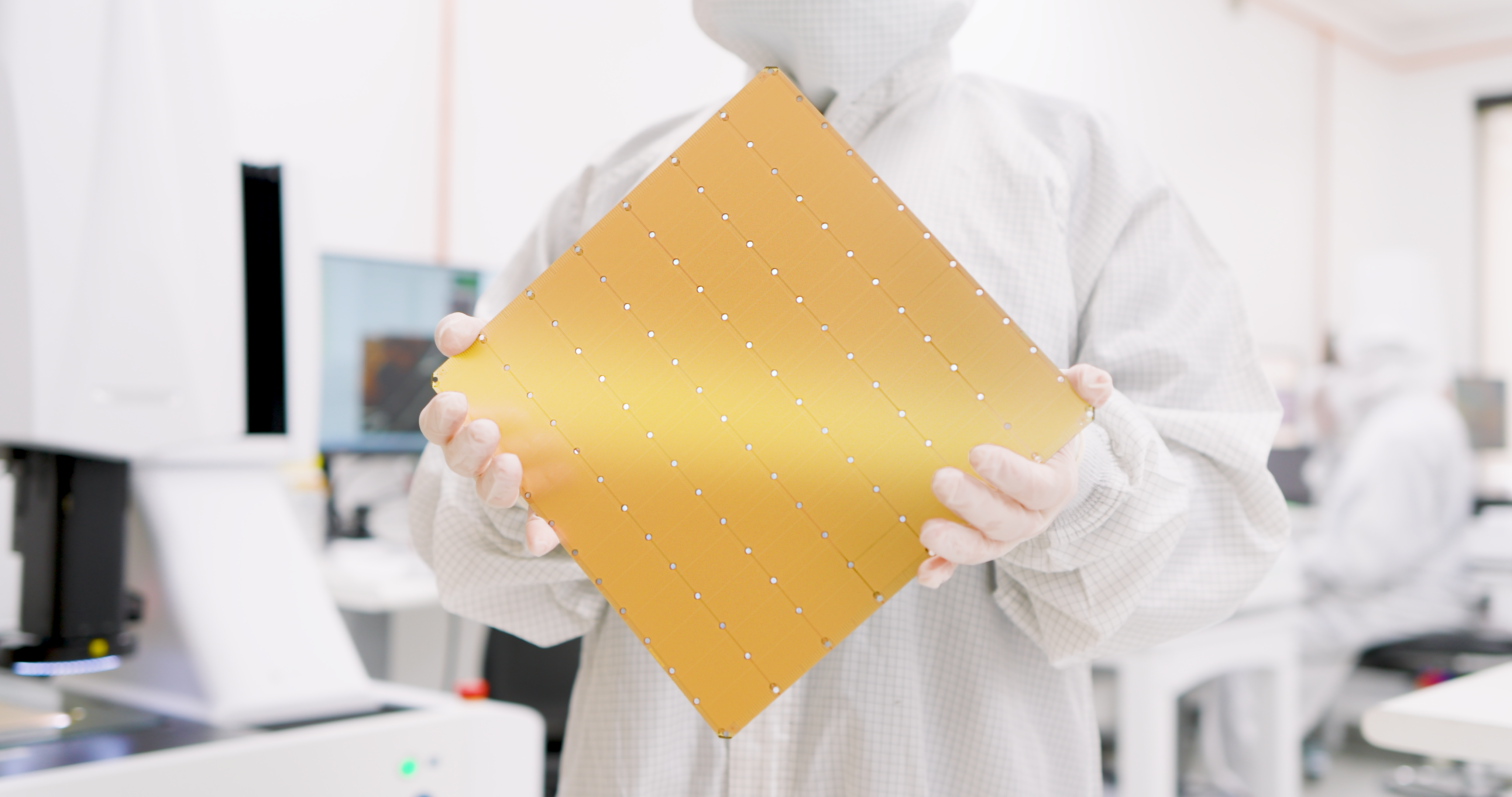

Its flagship product is the new WSE-3 processor (pictured), which was announced in March and builds upon its earlier WSE-2 chipset that debuted in 2021. It’s built on an advanced five-nanometer process and features 1.4 trillion transistors more than its predecessor chip, with more than 900,000 compute cores and 44 gigabytes of onboard static random-access memory. According to the startup, the WSE-3 has 52 times more cores than a single Nvidia H100 graphics processing unit.

The chip is available as part of a data center appliance called the CS-3, which is about the same size as a small refrigerator. The chip itself is about the same size as a pizza, and comes with integrated cooling and power delivery modules. In terms of performance, the Cerebras WSE-3 is said to be twice as powerful as the WSE-2, capable of hitting a peak speed of 125 petaflops, with 1 petaflop equal to 1,000 trillion computations per second.

The Cerebras CS-3 system is the engine that powers the new Cerebras Inference service, and it notably features 7,000 times greater memory than the Nvidia H100 GPU to solve one of generative AI’s fundamental technical challenges: the need for more memory bandwidth.

It solves that challenge in style. The Cerebras Inference service is said to be lightning quick, up to 20 times faster than comparable cloud-based inference services that use Nvidia’s most powerful GPUs. According to Cerebras, it delivers 1,800 tokens per second for the open-source Llama 3.1 8B model, and 450 tokens per second for Llama 3.1 70B.

It’s competitively priced too, with the startup saying that the service starts at just 10 cents per million tokens – equating to 100 times higher price-performance for AI inference workloads.

The company adds the Cerebras Inference service is especially well-suited for “agentic AI” workloads, or AI agents that can perform tasks on behalf of users, as such applications need the ability to constantly prompt their underlying models

Micah Hill-Smith, co-founder and chief executive of the independent AI model analysis company Artificial Analysis Inc., said his team has verified that Llama 3.1 8B and 70B running on Cerebras Inference achieves “quality evaluation results” in line with native 16-bit precision per Meta’s official versions.

“With speeds that push the performance frontier and competitive pricing, Cerebras Inference is particularly compelling for developers of AI applications with real-time or high-volume requirements,” he said.

Customers can choose to access the Cerebras Inference service three available tiers, including a free offering that provides application programming interface-based access and generous usage limits for anyone who wants to experiment with the platform.

The Developer Tier is for flexible, serverless deployments. It’s accessed via an API endpoint that the company says costs a fraction of the price of alternative services available today. For instance, Llama 3.1 8B is priced at just 10 cents per million tokens, while Llama 3.1 70B costs 60 cents. Support for additional models is on the way, the company said.

There’s also an Enterprise Tier, which offers fine-tuned models and custom service level agreements with dedicated support. That’s for sustained workloads, and it can be accessed via a Cerebras-managed private cloud or else implemented on-premises. Cerebras isn’t revealing the cost of this particular tier but says pricing is available on request.

Cerebras claims an impressive list of early-access customers, including organizations such as GlaxoSmithKline Plc., the AI search engine startup Perplexity AI Inc. and the networking analytics software provider Meter Inc.

Dr. Andrew Ng, founder of DeepLearning AI Inc., another early adopter, explained that his company has developed multiple agentic AI workflows that require prompting an LLM repeatedly to obtain a result. “Cerebras has built an impressively fast inference capability that will be very helpful for such workloads,” he said.

Cerebras’ ambitions don’t end there. Feldman said the company is “engaged with multiple hyperscalers” about offering its capabilities on their cloud services. “We aspire to have them as customers,” he said, as well as AI specialty providers such as CoreWeave Inc. and Lambda Inc.

Besides the inference service, Cerebras also announced a number of strategic partnerships to provide its customers with access to all of the specialized tools required to accelerate AI development. Its partners include the likes of LangChain, LlamaIndex, Docker Inc., Weights & Biases Inc. and AgentOps Inc.

Cerebras said its Inference API is fully compatible with OpenAI’s Chat Completions API, which means existing applications can be migrated to its platform with just a few lines of code.

With reporting by Robert Hof

Support our mission to keep content open and free by engaging with theCUBE community. Join theCUBE’s Alumni Trust Network, where technology leaders connect, share intelligence and create opportunities.

Founded by tech visionaries John Furrier and Dave Vellante, SiliconANGLE Media has built a dynamic ecosystem of industry-leading digital media brands that reach 15+ million elite tech professionals. Our new proprietary theCUBE AI Video Cloud is breaking ground in audience interaction, leveraging theCUBEai.com neural network to help technology companies make data-driven decisions and stay at the forefront of industry conversations.