MatX Inc., a chip startup founded by former Google LLC engineers, has raised $500 million in funding to bring its first product to market.

Jane Street and Situational Awareness led the Series B round. MatX said today that the two firms were joined by more than half a dozen other investors, including chipmaker Marvell Technology Inc. and the co-founders of Stripe Inc. The startup previously raised more than $100 million from a group that included many of the same backers.

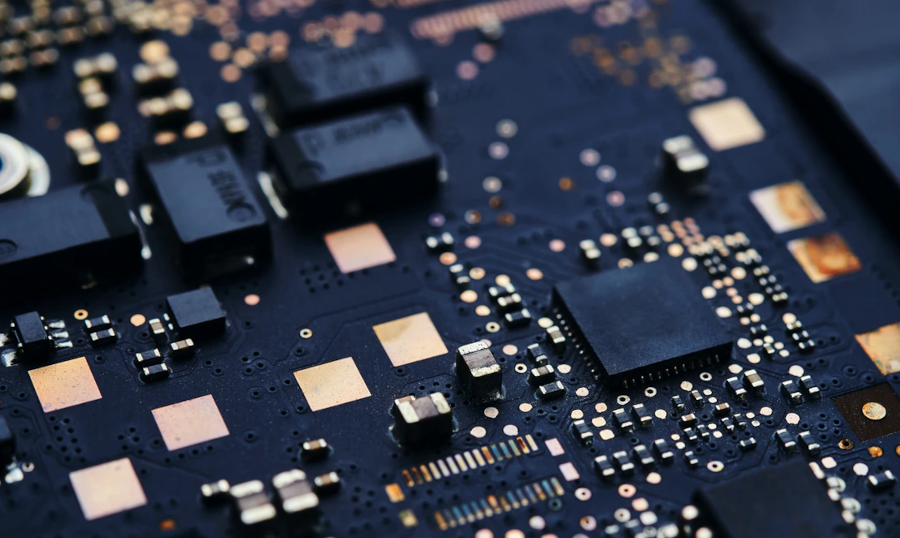

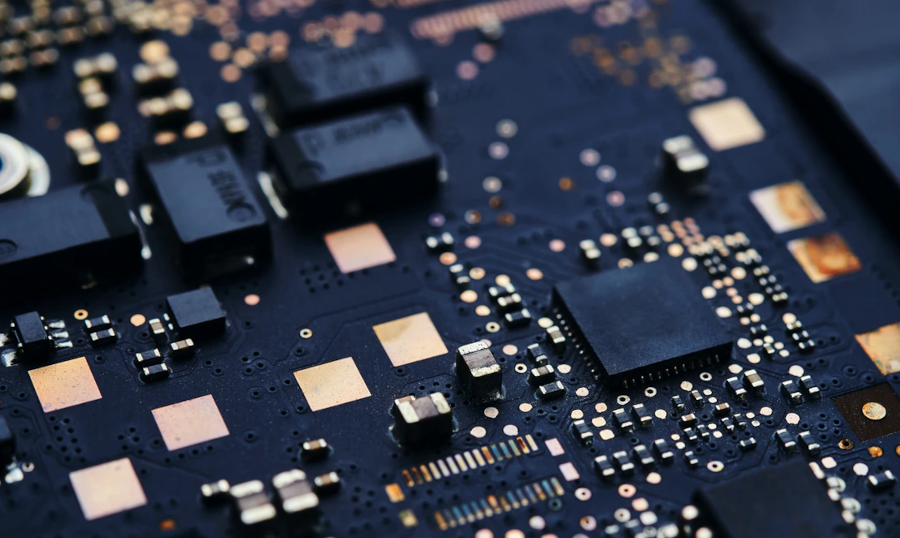

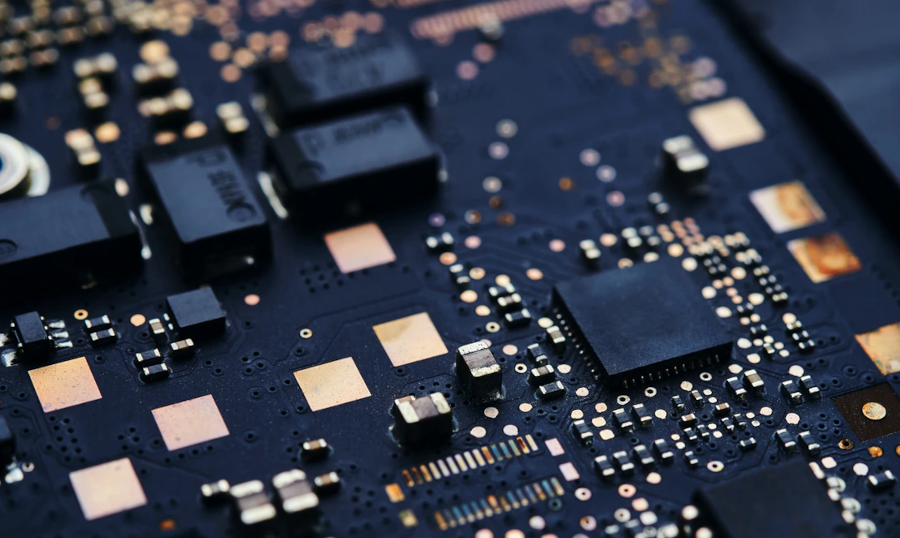

MatX is developing a processor optimized to run large language models. The company says that the chip, called the MatX One, will provide higher throughput than today's graphics cards, and that hundreds of thousands of MatX One accelerators will be able to be linked into a single cluster to run large-scale training and inference workloads.

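Many artificial intelligence processors implement a circuit design known as a systolic array. It's a grid of relatively simple, identical computing modules, often called processing elements, each wired to its neighbors so that data flows through the grid in lockstep. Each module performs a small portion of the calculations involved in processing an AI prompt, typically the multiply-and-add operations that make up matrix multiplication.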
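
To make the idea concrete, here is a minimal sketch of a generic, textbook "output-stationary" systolic array multiplying two matrices. It is an illustration under stated assumptions, not MatX's design, which the company has not detailed here: each processing element (PE) keeps one running sum, values of `A` flow left to right, values of `B` flow top to bottom, and the inputs are skewed by one cycle per row or column so that matching pairs meet at the right PE. The function name `systolic_matmul` is hypothetical.

```python
def systolic_matmul(A, B):
    """Cycle-by-cycle simulation of a generic output-stationary systolic array.

    Hypothetical illustration: PE (i, j) accumulates C[i][j]; A values move
    right, B values move down, one hop per cycle.
    """
    n, k = len(A), len(A[0])
    k2, m = len(B), len(B[0])
    if k != k2:
        raise ValueError("inner dimensions must match")
    acc = [[0] * m for _ in range(n)]    # one accumulator per PE
    a_reg = [[0] * m for _ in range(n)]  # value each PE passes to its right neighbour
    b_reg = [[0] * m for _ in range(n)]  # value each PE passes to the PE below
    # The last pair, A[n-1][k-1] and B[k-1][m-1], meets at PE (n-1, m-1)
    # on cycle n + m + k - 3, so n + m + k - 2 cycles are enough.
    for t in range(n + m + k - 2):
        new_a = [[0] * m for _ in range(n)]
        new_b = [[0] * m for _ in range(n)]
        for i in range(n):
            for j in range(m):
                # Edge PEs read the skewed input streams; interior PEs read neighbours.
                if j == 0:
                    s = t - i
                    a_in = A[i][s] if 0 <= s < k else 0
                else:
                    a_in = a_reg[i][j - 1]
                if i == 0:
                    s = t - j
                    b_in = B[s][j] if 0 <= s < k else 0
                else:
                    b_in = b_reg[i - 1][j]
                acc[i][j] += a_in * b_in  # the PE's only arithmetic: multiply-accumulate
                new_a[i][j] = a_in
                new_b[i][j] = b_in
        a_reg, b_reg = new_a, new_b
    return acc
```

For example, `systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])` yields the ordinary matrix product. No PE ever needs to know the whole problem; it only multiplies what arrives, adds it to its sum, and forwards its inputs, which is why such arrays are cheap to replicate across a chip.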

MatX One is based on an architecture the company calls a splittable systolic array. The name hints that the chip may be able to split its systolic arrays into multiple smaller ones. That approach would make it possible to tailor the configuration of the chip's circuits to the shape of the data they process, which boosts efficiency.
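
MatX has not published how its splitting works, so the following is only a back-of-the-envelope sketch of why splitting could help, under loudly stated assumptions: the array is output-stationary, each PE computes one output value per tile, and there are enough independent output tiles of the same shape to keep every sub-array busy. The helper names `pe_utilization` and `best_split`, and the array sizes used below, are hypothetical.

```python
import math


def pe_utilization(out_rows, out_cols, array_rows, array_cols):
    """Fraction of PEs doing useful work when an out_rows x out_cols output
    is tiled onto an array_rows x array_cols output-stationary array."""
    tiles = math.ceil(out_rows / array_rows) * math.ceil(out_cols / array_cols)
    return (out_rows * out_cols) / (tiles * array_rows * array_cols)


def best_split(out_rows, out_cols, array_rows, array_cols, factors=(1, 2, 4)):
    """Hypothetical: split the array into an f x f grid of equal sub-arrays
    (assuming enough independent jobs to fill them all) and return
    (f, utilization) for the best candidate factor f."""
    best = None
    for f in factors:
        if array_rows % f or array_cols % f:
            continue  # only consider splits into equal-sized sub-arrays
        u = pe_utilization(out_rows, out_cols, array_rows // f, array_cols // f)
        # Prefer higher utilization; on ties, prefer fewer, larger sub-arrays.
        if best is None or (u, -f) > (best[1], -best[0]):
            best = (f, u)
    return best
```

With these assumptions, a 64 x 64 output on a single 128 x 128 array keeps only a quarter of the PEs busy (`pe_utilization(64, 64, 128, 128)` is `0.25`), while `best_split(64, 64, 128, 128)` returns `(2, 1.0)`: four 64 x 64 sub-arrays, each fully occupied by its own job. A real design would also have to account for data movement and scheduling overheads that this arithmetic ignores.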

The processor will store most model weights, the parameters that determine how an LLM processes prompts, in SRAM cells. SRAM is a high-speed type of memory that is often embedded directly in a chip next to its logic circuits. It provides lower latency than DRAM-based memory such as HBM, which speeds up processing.

MatX One will use HBM, a slower but higher-capacity type of memory, to store KV cache data. A KV cache speeds up LLM inference by storing the attention keys and values already computed for earlier tokens, so the model does not have to recompute them each time it generates a new token. Because the cache grows with the length of the context, it benefits from HBM's larger capacity.
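
The following minimal sketch shows the generic KV caching technique for a single attention head; it is not MatX code and does not model where the cache lives. With the cache, each decoding step projects only the newest token and reuses stored keys and values. Without it, every step recomputes keys and values for the whole prefix. The tiny projection matrices, token vectors and function names are hypothetical.

```python
import math


def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def attend(q, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d = len(q)
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
    mx = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - mx) for s in scores]
    total = sum(weights)
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(len(values[0]))]


class KVCache:
    """Keys and values for every token processed so far."""

    def __init__(self):
        self.keys = []
        self.values = []


def decode_step_with_cache(x, cache, Wq, Wk, Wv):
    # Only the newest token is projected; earlier keys/values come from the cache.
    cache.keys.append(matvec(Wk, x))
    cache.values.append(matvec(Wv, x))
    return attend(matvec(Wq, x), cache.keys, cache.values)


def decode_step_no_cache(xs, Wq, Wk, Wv):
    # Keys and values for every previous token are recomputed at every step.
    keys = [matvec(Wk, x) for x in xs]
    values = [matvec(Wv, x) for x in xs]
    return attend(matvec(Wq, xs[-1]), keys, values)
```

Both functions produce the same attention output at every step, but the cached version does a constant amount of projection work per token instead of work proportional to the sequence length. The price is memory: the cache holds one key and one value vector per token, per head and per layer in a real model, which is why capacity matters for long contexts.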

A series of research blog posts on MatX's website hints that its chip will also support other performance optimization methods. One post reveals that the company has been working to combine two popular techniques, speculative decoding and blockwise sparse attention. The former speeds up response generation by having a small draft model propose several tokens that the full model then checks in a single pass, while the latter makes an LLM's attention mechanism more efficient by computing attention only over selected blocks of tokens.
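
Here is a minimal sketch of greedy (temperature-zero) speculative decoding, a commonly used simplified variant; sampling-based versions instead accept draft tokens via rejection sampling. It does not cover blockwise sparse attention and is not MatX's implementation. The "models" are hypothetical toy functions that map a token prefix to the next token. The key property is that the output is identical to decoding with the target model alone, while each verification round can commit several tokens.

```python
from typing import Callable, List, Tuple

# Hypothetical model interface: a token prefix in, the greedy next token out.
Model = Callable[[List[int]], int]


def greedy_decode(model: Model, prompt: List[int], n_new: int) -> List[int]:
    """Baseline: one target-model step per generated token."""
    out = list(prompt)
    for _ in range(n_new):
        out.append(model(out))
    return out


def speculative_decode(target: Model, draft: Model, prompt: List[int],
                       n_new: int, k: int = 4) -> Tuple[List[int], int]:
    """Greedy speculative decoding; returns (tokens, verification rounds)."""
    out = list(prompt)
    rounds = 0
    while len(out) - len(prompt) < n_new:
        rounds += 1
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))
        # 2. The target model checks each proposed position. On real hardware
        #    these checks run as one batched forward pass over all k positions.
        accepted: List[int] = []
        for tok in proposal:
            expected = target(out + accepted)
            if tok != expected:
                accepted.append(expected)  # replace the first mismatch, then stop
                break
            accepted.append(tok)
        else:
            # Every draft token matched: the same pass yields one bonus token.
            accepted.append(target(out + accepted))
        out.extend(accepted)
    return out[: len(prompt) + n_new], rounds
```

Every round commits at least one token, so the loop always terminates, and every committed token equals the target model's own greedy choice. When the draft model agrees with the target most of the time, the number of expensive target passes (`rounds`) drops well below the number of generated tokens.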

“The chip combines the low latency of SRAM-first designs with the long-context support of HBM,” MatX co-founder and Chief Executive Reiner Pope wrote in a blog post today. “These elements, plus a fresh take on numerics, deliver higher throughput on LLMs than any announced system, while simultaneously matching the latency of SRAM-first designs.”

The company will use the new capital to finalize the chip's design. MatX hopes to complete tape-out, the final step of the chip design process before a design is sent to a foundry for manufacturing, within a year.
