AI

Anthropic PBC is challenging the Trump administration’s recent decision to restrict the use of its software in the public sector.

The artificial intelligence developer first announced its intent to litigate last month. Today, it filed two complaints: one with the U.S. District Court for the Northern District of California and the other with the federal appeals court in Washington, D.C.

Last June, Anthropic won a contract to provide the Pentagon with access to its Claude series of large language models. Not long thereafter, defense officials asked the company to modify Claude’s terms of service to permit “all lawful use.” The company declined. In particular, Anthropic indicated that it wouldn’t permit Claude to be used for domestic mass surveillance or the development of fully autonomous weapons.

Last month, U.S. President Donald Trump ordered that federal agencies stop using Claude within six months. In a related move, U.S. Defense Secretary Pete Hegseth designated the company as a supply chain risk. The latter move barred U.S. defense contractors from using Claude to deliver products or services to the Pentagon.

The first lawsuit that Anthropic filed today seeks to scrap the two directives. It brings more than a half-dozen arguments in favor of voiding the orders.

First, the complaint alleges that the orders are causing “immediate and irreparable” economic harm to Anthropic. It states that the U.S. General Services Administration, which manages federal procurement programs, has scrapped a contract that allowed multiple agencies to access Claude. The lawsuit goes on to argue that the federal ban’s impact extends to Anthropic’s private sector business.

“Current and future contracts with private parties are also in doubt, jeopardizing hundreds of millions of dollars in the near-term,” the company wrote.

A subsequent section of the complaint alleges that Trump’s ban on Claude in federal networks is “outside any authority that Congress has granted the Executive.” Anthropic is making a similar case against Hegseth’s move to designate it as a supply chain risk. The lawsuit states that the latter directive is “contrary” to Section 3252, a part of the U.S. legal code focused on such regulatory actions.

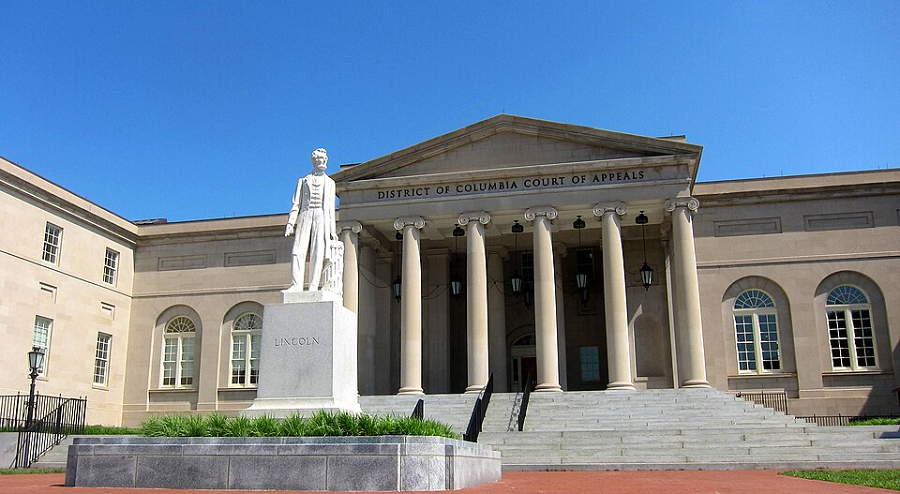

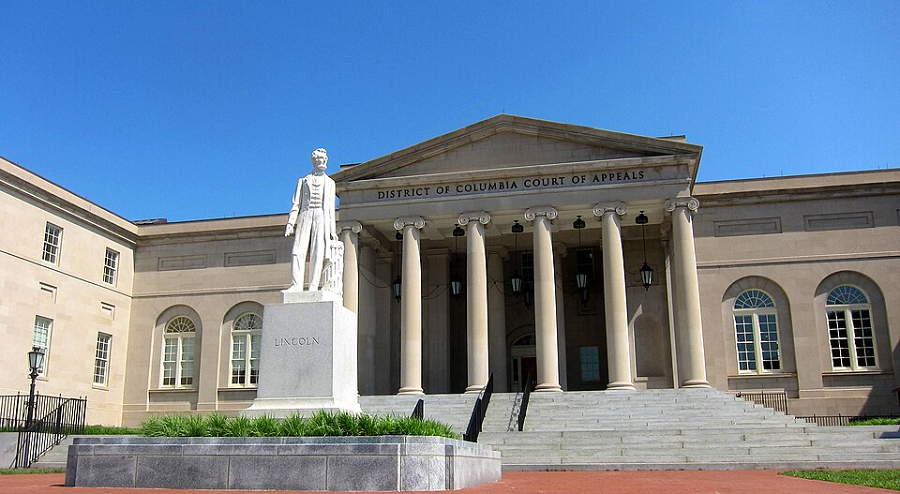

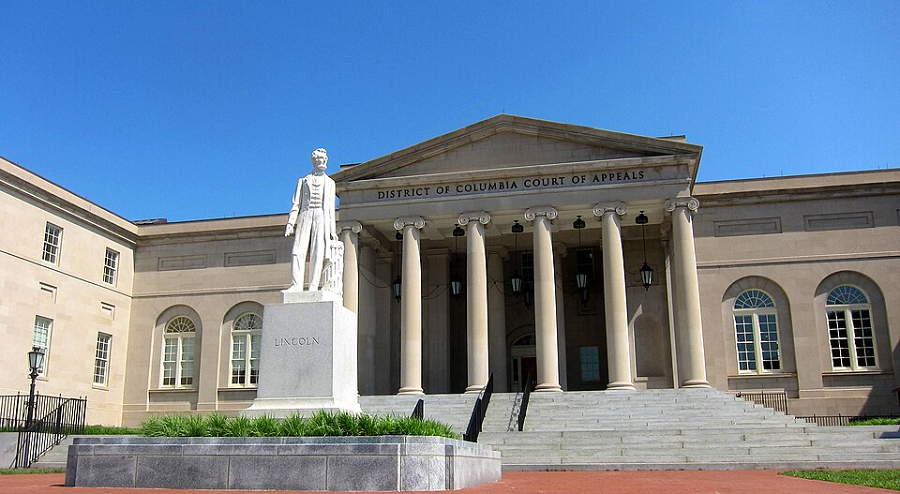

Anthropic’s second complaint, which it filed with a D.C. appeals court, takes aim at the process through which Hegseth issued the designation. The order was preceded by a “determination that Anthropic presents a supply chain risk to national security under the Federal Acquisition Supply Chain Security Act of 2018.” The AI developer is asking the court to review the determination.

According to the lawsuit, Anthropic is seeking the review because it believes the designation was issued without following the required procedures. Furthermore, the company alleges that the designation represents “a pretextual form of retaliation in violation of the First and Fifth Amendments to the U.S. Constitution; arbitrary, capricious, and an abuse of discretion.”

Anthropic is also asking that the Claude ban be suspended until the courts issue a ruling in the matter.
