The enterprise AI story of 2026 isn’t about who’s experimenting anymore. Across cloud-native AI environments, the infrastructure question has shifted from whether AI can run on Kubernetes to whether it can run repeatably and at scale, with proof of measurable business value. That shift is rewriting competitive priorities, and the cloud-native stack is where the answers are being built, tested and operationalized.

The timing of KubeCon + CloudNativeCon EU makes it particularly significant. Platform engineering is maturing from an aspirational concept into an operational discipline. GPU orchestration is finding its footing within Kubernetes-native stacks, and open-source governance frameworks are consolidating around projects that have earned production trust. For enterprises, the cloud-native ecosystem is no longer a proving ground. It’s the platform.

“At KubeCon EU in Amsterdam, expect ‘AI on Kubernetes’ to look less like experiments and more like repeatable platform patterns,” said Rob Strechay, principal analyst at theCUBE Research. “Projects like vLLM and Kubernetes-native distributed inference stacks like llm-d are coming into their own, showing how the cloud-native community is standardizing everything from scheduling to cache-aware serving, right alongside the core Cloud Native Computing Foundation building blocks teams already trust.”

Join theCUBE, SiliconANGLE Media’s livestreaming studio, from March 24–26 for theCUBE’s coverage of KubeCon + CloudNativeCon EU. Our coverage will focus on how organizations such as IBM and Red Hat are operationalizing cloud-native AI in production environments, how the CNCF project ecosystem is establishing the infrastructure standards for AI at scale and what platform engineering maturity means for enterprise AI return on investment in 2026. (* Disclosure below.)

IBM reaps the rewards of putting AI to work

Among the companies demonstrating how cloud-native AI translates into measurable business results is IBM, whose software-led hybrid cloud strategy is delivering results measured in billions. The company’s generative AI book of business surpassed $12.5 billion in the fourth quarter of 2025, up from $9.5 billion the prior quarter, while software now accounts for 45% of IBM’s business, compared with 25% in 2018. That performance marked the highest quarterly software growth rate in IBM’s history, according to Arvind Krishna, chairman and chief executive officer of IBM, and James Kavanaugh, senior vice president and chief financial officer of IBM.

“AI is now embedded across our business, from how we deliver services to our software portfolio to the capabilities we are adding to our infrastructure platforms and how we drive our own productivity,” according to Kavanaugh.

IBM’s internal AI deployment tells the same story at human scale. More than 20,000 IBM employees use Bob, an AI-powered toolset for enterprise software development lifecycles, achieving average productivity gains of 45%, according to Krishna. The company’s AI-driven efficiency savings have reached a $4.5 billion annual run rate, more than double the $2 billion target IBM set just two years earlier, Kavanaugh added.

“IBM is one of those companies that has AI chops internally,” said Dave Vellante, chief analyst of theCUBE Research, during a segment on theCUBE Pod. “Companies like IBM, JPMorganChase, Dell … they’re applying AI internally, and they’re getting returns. These big companies are seeing [returns], and their learnings are going to trickle down to other companies.”

IBM’s trajectory is a leading indicator of a broader shift in accountability for cloud-native AI investment. Enterprises that spent heavily on AI pilots over the past year are now pressed to show what those investments returned, and the vendors that can connect infrastructure decisions to measurable business outcomes are emerging with the strategic advantage, according to Samantha Weston, industry analyst at theCUBE Research.

“Over the last year, we’ve seen an explosion of AI experimentation across cloud-native environments, but 2026 is shaping up to be the year of accountability,” Weston said. “Organizations are moving past proof of concept and now asking for measurable ROI. That shift makes KubeCon incredibly timely because the cloud-native stack is largely where AI workloads are built, scaled and operationalized. We’ve already proven that AI can run on Kubernetes, so this year it’s about whether that infrastructure translates into business impact.”

From metal to agent: Red Hat’s full-stack AI push

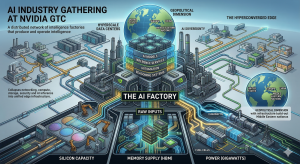

Red Hat Inc. is heading into KubeCon EU with a direct answer to enterprise AI’s most persistent obstacle: the gap between piloting a model and running it reliably at scale. The IBM subsidiary recently launched Red Hat AI Enterprise, a unified platform for AI lifecycle management built on Red Hat OpenShift. It also introduced the Red Hat AI Factory with Nvidia Corp., a co-engineered stack for provisioning and optimizing GPU-accelerated cloud-native AI infrastructure. Together they form what Red Hat calls a “metal-to-agent” stack, spanning GPU hardware through to the AI agents that drive business logic, according to Chris Wright, chief technology officer and senior vice president of global engineering at Red Hat, and Joe Fernandes, vice president and general manager of the Red Hat AI Business Unit.

“By integrating advanced tuning and agentic capabilities with the industry-leading foundation of Red Hat Enterprise Linux and Red Hat OpenShift, we are providing the complete stack — from the GPU-accelerated hardware to the models and agents that drive business logic,” Fernandes said.

As enterprises push cloud-native AI workloads deeper into production, operational complexity has emerged as one of the biggest hurdles, particularly when integrating Kubernetes infrastructure, AI models and developer workflows across distributed environments, according to Paul Nashawaty, principal analyst at theCUBE Research and host of the AppDevANGLE podcast. That challenge is driving renewed investment in platform engineering and standardized developer environments designed to simplify infrastructure consumption while maintaining governance and reliability.

“The organizations getting the most value from cloud native are treating platform engineering as a product, delivering standardized environments that improve both developer velocity and operational reliability,” Nashawaty said, based on insights gleaned from the AppDev Done Right Summit. “In terms of what to watch at the event, the clearest signal will be how the ecosystem simplifies Kubernetes consumption.”

CNCF sets the cloud-native AI ground rules

Underpinning the AI ambitions of companies such as IBM and Red Hat is the Cloud Native Computing Foundation’s expanding project ecosystem. As enterprises move from AI experimentation to scaled deployment, CNCF’s role has shifted from stewarding container orchestration standards to establishing the governance and infrastructure ground rules for cloud-native AI at scale, according to Strechay.

“The ‘AI factory’ conversation is getting real because the silicon vendors are shipping more of the missing plumbing as open source: operators, device plugins and hardened integration layers that make accelerators first-class citizens in Kubernetes,” Strechay said. “With Kubernetes now broadly viewed as the de facto operating layer for AI, the competitive edge shifts to how efficiently you can stand up repeatable, multi-tenant, observable GPU/accelerator fleets, across Nvidia, AMD and Intel stacks, without reinventing the platform every quarter.”

That production readiness doesn’t happen by accident. The CNCF’s project graduation model — encouraging open development from the start, then tracking community adoption as the signal for enterprise readiness — gives organizations a reliable way to distinguish infrastructure with genuine traction from technology that doesn’t survive contact with real workloads, according to James Harmison, senior principal technical marketing manager at Red Hat, and Jennifer Vargas, senior principal marketing manager at Red Hat.

“Everyone is trying to look for the ROI,” Vargas told theCUBE. “It’s really expensive to deploy AI at the scale that everyone wants. On the enterprise, [they] need to demonstrate ROI very quickly. I think agentic AIs probably are key to that because it will help enterprises match their workflows. Enterprises already understand automation, how to automate processes. What they need is to take it to the next level.”

Observability is advancing rapidly, but the trajectory is becoming clearer. OpenTelemetry is emerging as the standard layer for instrumentation and data pipelines, while innovation is increasingly focused on how organizations use that telemetry for governance, cost management and richer operational signals, according to Strechay.

“I expect more attention on open ecosystems that are gaining operational gravity,” he said. “OpenSearch is a good example, with observability and analytics investments and a community that keeps expanding.”

TheCUBE event livestream

Don’t miss theCUBE’s coverage of KubeCon + CloudNativeCon EU from March 24–26. Plus, you can watch theCUBE’s event coverage on-demand after the event.

How to watch theCUBE interviews

We offer you various ways to watch theCUBE’s coverage of KubeCon + CloudNativeCon EU, including theCUBE’s dedicated website and YouTube channel. You can also get all the coverage from this year’s events on SiliconANGLE.

TheCUBE podcasts

SiliconANGLE’s “theCUBE Pod” is available on Apple Podcasts, Spotify and YouTube, which you can enjoy while on the go. During each podcast, SiliconANGLE’s John Furrier and Dave Vellante unpack the biggest trends in enterprise tech — from AI and cloud to regulation and workplace culture — with exclusive context and analysis.

SiliconANGLE also produces our weekly “Breaking Analysis” program, where Dave Vellante examines the top stories in enterprise tech, combining insights from theCUBE with spending data from Enterprise Technology Research, available on Apple Podcasts, Spotify and YouTube.

Guests

During theCUBE’s coverage of KubeCon + CloudNativeCon EU, expect conversations to focus on observability and controlling costs in multicloud environments, evolving security for software supply chains, zero-trust architectures and runtime protection, open-source governance, sustainability and community-driven innovation. Stay tuned for our complete guest list.

(* Disclosure: TheCUBE is a paid media partner for the KubeCon + CloudNativeCon EU event. Sponsors of theCUBE’s event coverage do not have editorial control over content on theCUBE or SiliconANGLE.)

Image: SiliconANGLE
