AI

Every year the artificial intelligence industry gathers at Nvidia Corp.’s GPU Technology Conference expecting to see faster graphics processing units, bigger models and the next wave of AI software innovation.

And yes, that will all be there.

But if you’ve been watching the conversations we’ve been having on theCUBE over the past few years, you know the real story isn’t just happening on stage — it’s happening under the hood.

What’s unfolding right now is the largest infrastructure buildout the tech industry has seen since the birth of the cloud. But unlike the cloud era, which was largely a software revolution, the AI era is rapidly becoming something else entirely.

It’s becoming industrial.

The companies racing to lead this new era aren’t just writing code. They’re securing power, booking semiconductor capacity, locking in memory supply and deploying massive clusters designed to produce intelligence at scale.

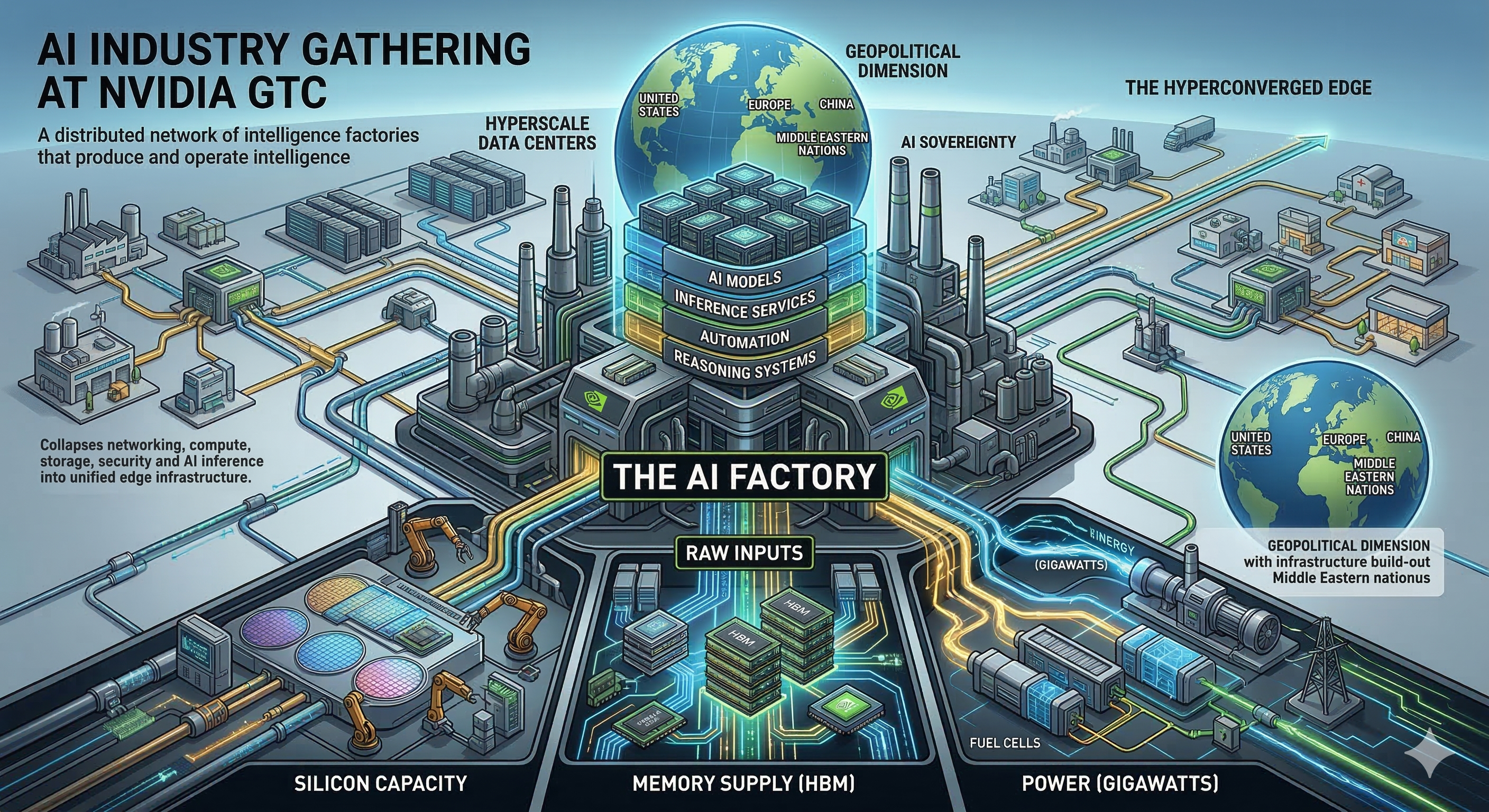

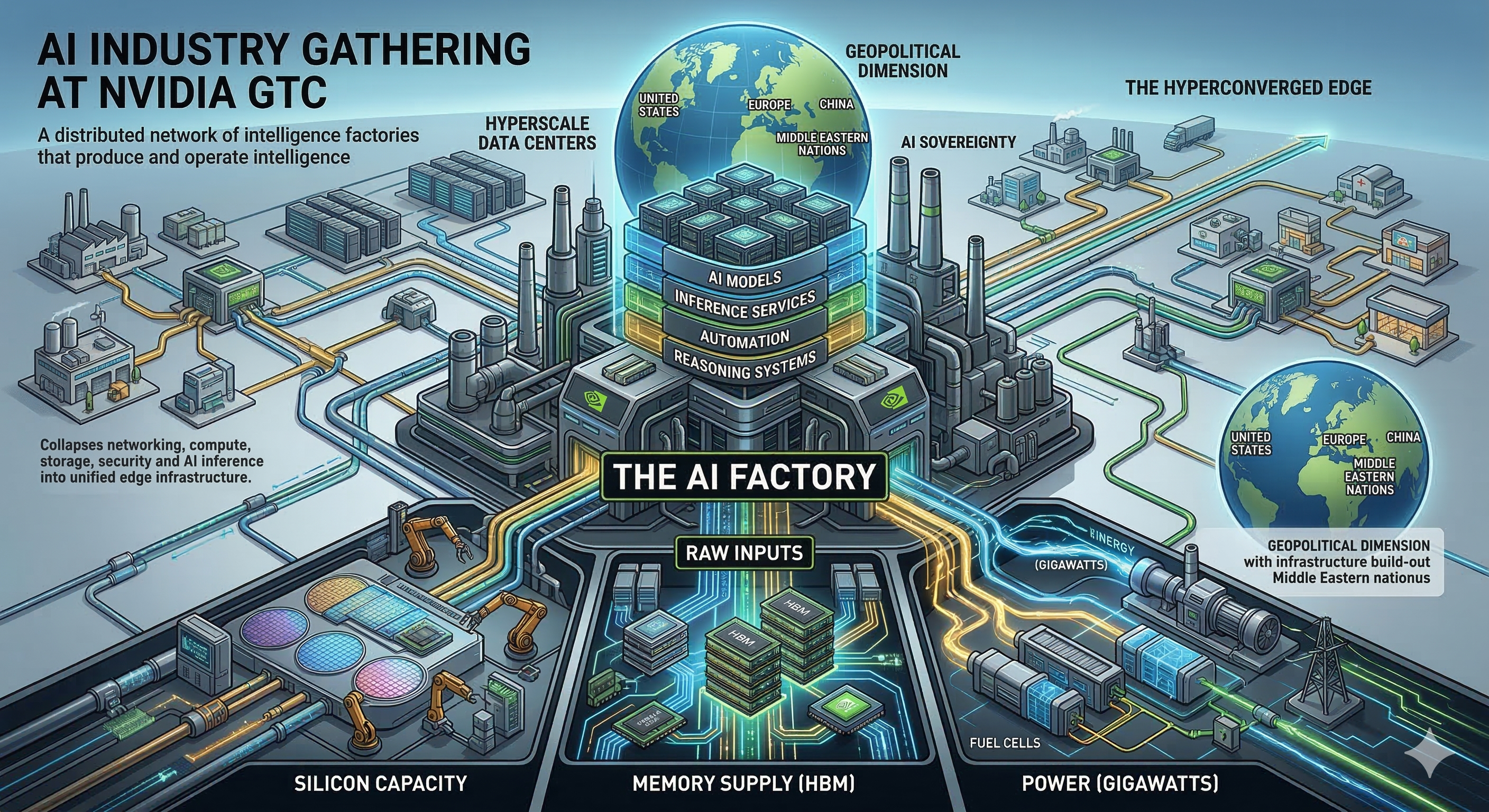

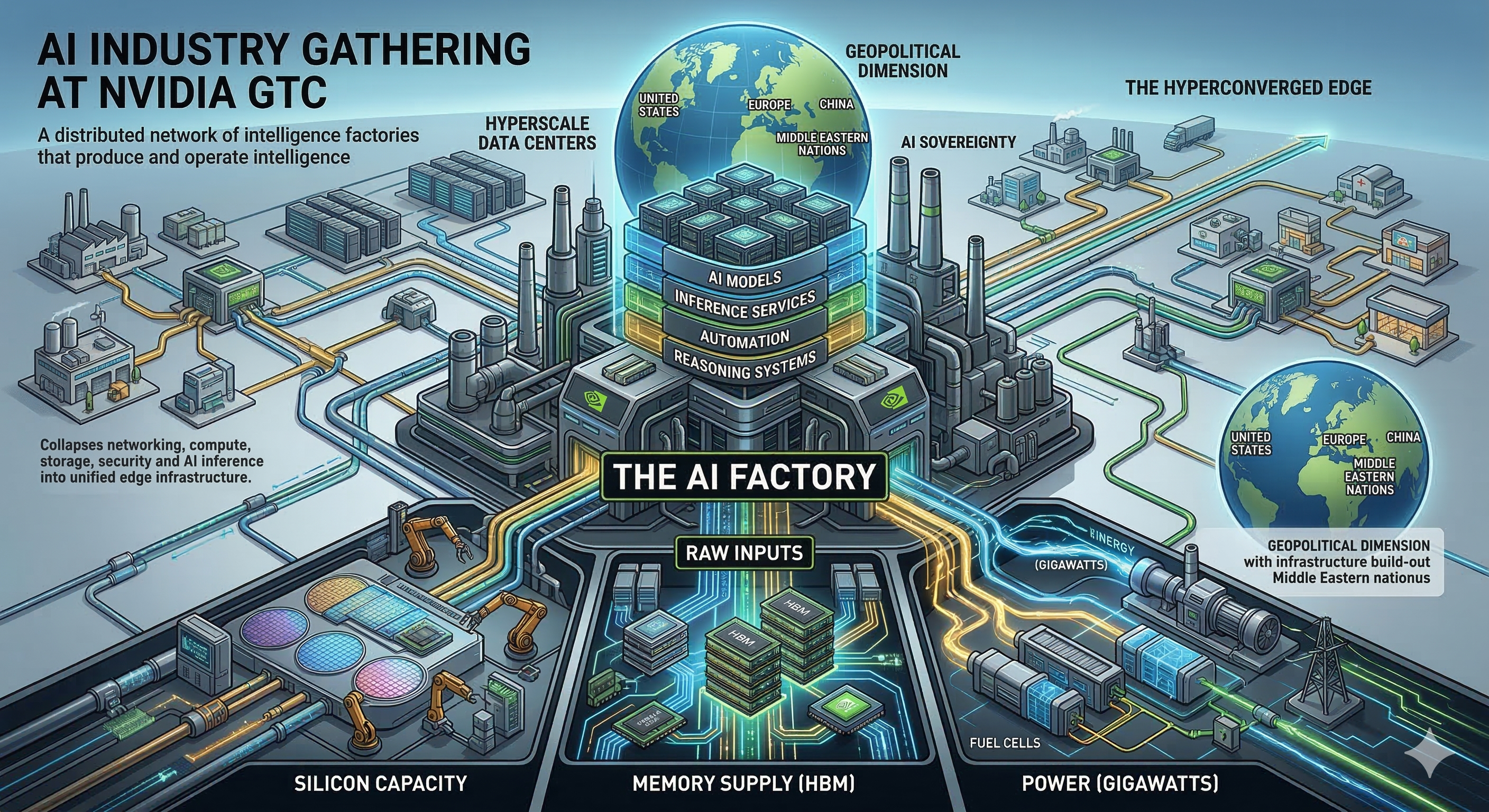

We call this system the AI factory.

And as we head into GTC, the deeper narrative isn’t just about the next GPU architecture. It’s about a global infrastructure race — a trillion-dollar supply chain trench war — to build the factories that will manufacture intelligence for the next decade.

What’s even more interesting is that this factory is no longer confined to hyperscale data centers. It’s beginning to extend outward into what we call the hyperconverged edge, where AI moves closer to where data is created and decisions are made.

That shift — from centralized AI to a distributed network of intelligence factories — may ultimately define the next phase of the industry.

Over the past few years we’ve been describing a shift in how computing infrastructure is designed.

Traditional data centers stored data and ran applications.

AI infrastructure does something fundamentally different: It manufactures intelligence. An AI factory is a vertically integrated system designed to convert raw inputs — power, silicon, memory and data — into outputs such as AI models, inference services, automation and reasoning systems.

Companies leading this transformation include Nvidia, Amazon.com Inc., Microsoft Corp., Google LLC and Meta Platforms Inc., among others. Collectively, these firms are investing hundreds of billions of dollars in AI infrastructure. Some of our reported estimates suggest the next phase of this cycle could approach $1 trillion in capital investment.

GTC will showcase the latest pieces of this architecture — but the most important story lies deeper in the supply chain.

One of the strangest economic dynamics in this cycle is what I call the GPU appreciation paradox. In traditional technology markets, hardware depreciates rapidly.

But in the AI era, the opposite appears to be happening. Take the widely deployed Nvidia H100 GPU. Instead of losing economic value over time, the productivity of these chips is increasing as the models they serve become more powerful.

As frontier models improve, the value generated by the compute serving those models rises. This is why some AI labs are locking in multiyear GPU contracts at roughly $2.40 per hour, well above estimated build costs.
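To put that rate in perspective, here is a back-of-envelope sketch in Python of what a single GPU grosses over a contract at $2.40 per hour. Only the hourly rate comes from the reporting above; the contract lengths and the utilization parameter are illustrative assumptions, and the sketch says nothing about build cost, for which no figure is given here.

```python
# Back-of-envelope sketch: gross rental revenue for one GPU at a fixed hourly rate.
# Only the $2.40/hour rate comes from the article; the contract terms and
# utilization values below are hypothetical, chosen for illustration.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def contract_revenue_per_gpu(rate_per_hour, years, utilization=1.0):
    """Return gross revenue in dollars for one GPU over the contract term."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return rate_per_hour * HOURS_PER_YEAR * years * utilization


if __name__ == "__main__":
    rate = 2.40  # dollars per GPU-hour, as cited above
    for years in (1, 3, 5):  # hypothetical contract lengths
        revenue = contract_revenue_per_gpu(rate, years)
        print(f"{years}-year contract: ${revenue:,.0f} per GPU")
```

At full utilization, one year at this rate works out to $21,024 per GPU, which is why a multiyear commitment adds up quickly across a large cluster.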

AI compute has become the most constrained resource in the digital economy. And that’s turning GPUs into something closer to productive capital assets than traditional information technology hardware.

GPUs may get the headlines at GTC, but the real bottleneck in the AI factory could be memory.

Modern AI systems rely heavily on long-context reasoning, meaning models can process huge sequences of text, code and multimodal data. That capability requires massive amounts of high-bandwidth memory or HBM.

HBM is physically expensive.

Our data suggest that by 2026, as much as 30% of hyperscaler capital expenditures could go toward memory alone (drumroll: expect system price increases to follow).

This shift is already reshaping semiconductor allocation across the industry, with memory supply increasingly prioritized for AI infrastructure.

One of the key constraints we’re watching closely is semiconductor fabrication capacity.

Data center accelerators are now consuming a growing share of manufacturing capacity at TSMC. Suppliers such as Nvidia (GPUs), Broadcom (TPUs and custom AI silicon) and others are committing to far more aggressive volume growth than traditional consumer chip designers.

At a high level, there are two major constraints in the semiconductor manufacturing process that matter for AI infrastructure:

1. Front-end capacity: the upstream wafer fabrication stage, where advanced logic process nodes produce the silicon and logic circuits on wafers.

2. Back-end capacity: sometimes referred to as the mid-end, where advanced packaging technologies such as CoWoS (Chip-on-Wafer-on-Substrate) integrate the chips with high-bandwidth memory (HBM), substrates and other components to create the final accelerator packages.

Manufacturers like TSMC have to carefully balance both stages of the process. Last year the back-end packaging stage, especially CoWoS, was the biggest bottleneck. While still tight, the constraint is increasingly shifting toward the front-end wafer fabrication capacity.

The bottom line: AI demand is exploding, but silicon production is struggling to keep pace.

To understand the ultimate limit of AI scaling, you have to go even further upstream.

The real gatekeeper in the semiconductor ecosystem is ASML Holding NV, the Dutch manufacturer of extreme ultraviolet lithography machines. These tools are required to produce the most advanced chips.

Each machine costs more than $350 million, contains hundreds of thousands of components, and relies on a supply chain of thousands of specialized suppliers. More importantly, production is limited. ASML can manufacture roughly 70 to 100 extreme ultraviolet tools per year through the end of the decade.

That output effectively caps how quickly the world can expand advanced semiconductor production. You can innovate software at exponential speed. But you cannot easily scale the industrial manufacturing systems that produce the silicon.
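A rough Python sketch makes the scale of that ceiling concrete. It multiplies the annual tool range cited above by the quoted price floor. Because each machine costs more than $350 million, the results are lower bounds on annual spending, not forecasts. The function and constant names are ours, for illustration only.

```python
# Rough sketch: lower-bound annual spend on EUV tools implied by the figures above.
# Inputs are the article's numbers; names are hypothetical, for illustration.

TOOL_PRICE_FLOOR_USD = 350_000_000  # each machine costs "more than $350 million"
TOOLS_PER_YEAR_RANGE = (70, 100)    # roughly 70 to 100 tools per year


def annual_tool_spend_floor(tools_per_year, price_floor=TOOL_PRICE_FLOOR_USD):
    """Return the minimum yearly outlay in dollars for the given tool count."""
    if tools_per_year < 0:
        raise ValueError("tools_per_year must be non-negative")
    return tools_per_year * price_floor


if __name__ == "__main__":
    for tools in TOOLS_PER_YEAR_RANGE:
        spend = annual_tool_spend_floor(tools)
        print(f"{tools} tools/year: at least ${spend / 1e9:.1f} billion")
```

Even at the low end, that is at least $24.5 billion a year in lithography tools alone, and the tool count, not the money, is the binding limit.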

Another key input to the AI factory is energy. Frontier AI clusters now require enormous amounts of electricity — often measured in gigawatts. Yet power isn’t necessarily a hard scaling limit.

It’s a cost problem. To bypass grid constraints and multi-year permitting timelines, hyperscalers and AI labs are increasingly deploying behind-the-meter power systems. These include natural gas turbines, modular microgrids, fuel cells and factory-built data center modules.

Even if energy costs increase, the economics still favor early deployment because the marginal value of frontier AI models is so high. In the AI race, speed often matters more than efficiency.

While the hyperscale AI factory is grabbing headlines, another important shift is happening further out in the infrastructure stack. The AI factory is expanding to the edge.

Enterprises are increasingly deploying localized AI systems to support real-time decision-making in environments such as factories, hospitals, retail stores, logistics centers and smart cities. These environments require low latency, data sovereignty and continuous operation even when cloud connectivity is limited.

This is where the hyperconverged edge enters the picture. Hyperconverged edge platforms collapse networking, compute, storage, security and AI inference into unified edge infrastructure.

Instead of isolated devices, organizations deploy distributed mini AI factories capable of running localized inference and synchronizing with centralized AI clusters. In this architecture, hyperscale AI factories train models, while hyperconverged edge systems operate those models in the real world.

This distributed model of intelligence production will likely be a major theme emerging from GTC discussions.

The AI infrastructure race is also becoming deeply geopolitical.

Governments around the world increasingly see AI capability as a matter of national sovereignty. Control over AI infrastructure means control over economic competitiveness, industrial automation, defense systems and national innovation capacity.

The United States currently leads in several key areas, including AI software ecosystems and access to advanced manufacturing through partners such as Taiwan Semiconductor Manufacturing Co. But other regions are moving quickly.

China is pursuing full-stack vertical integration through companies such as Huawei, investing heavily in domestic chip design, memory production and sovereign AI infrastructure. Europe and Middle Eastern nations are also investing in sovereign compute capacity to ensure they are not dependent on foreign cloud providers.

In many ways, AI factories are becoming the strategic infrastructure of the digital age, similar to energy grids or telecommunications networks.

Perhaps the biggest misconception about the AI boom is how it is categorized. Many investors still treat AI companies as traditional software firms.

But the companies leading the AI factory buildout increasingly resemble heavy industrial operators. They are deploying gigawatt-scale data centers, global semiconductor supply chains, massive capital investment programs and vertically integrated infrastructure stacks.

This is not just another software cycle. It’s the emergence of industrial-scale intelligence production.

As we head into another Nvidia GTC, it’s easy to get caught up in the product announcements: the next GPU, the next model, the next software framework.

But the bigger story unfolding beneath the surface is about infrastructure power.

The companies that win the next four to five years in AI won’t simply be the ones with the best algorithms. They’ll be the ones that secured the supply chain early: The ones that locked in the lithography capacity. The ones that booked the memory wafers. The ones that built the power plants. The ones that extended their AI factories all the way out to the edge.

Because the future of AI is no longer just about training bigger models. It’s about building the global system that runs them.

The AI factory is quickly becoming the industrial backbone of the digital economy — a distributed network of hyperscale clusters and hyperconverged edge infrastructure that together produce and operate intelligence.

And if the last decade belonged to the cloud, the next decade will belong to the companies that build and control these factories. That’s the story I’ll be watching closely this week at GTC.

Because the race to build the AI factory is only just getting started.
