INFRA

Nvidia Corp. is throwing down the gauntlet to the rest of the artificial intelligence chip industry with the launch of its next-generation Vera Rubin platform.

Announced at GTC 2026 today, it consists of no fewer than seven new chips designed to power what Chief Executive Jensen Huang called the “greatest infrastructure buildout in history.”

Vera Rubin is named after the pioneering astronomer who first discovered evidence for dark matter, and it’s much more than a simple refresh of Nvidia’s previous-generation graphics processing units. The company said it’s a complete architectural overhaul aimed at powering the enterprise shift toward “agentic AI,” a world in which autonomous AI agents can reason, use third-party software tools and execute complex workloads on behalf of humans.

The Vera Rubin platform is anchored by the new Rubin GPU and Vera central processing unit, but that’s not all. The platform also includes Nvidia’s NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 data processing unit and Spectrum-6 Ethernet switch, plus the new Nvidia Groq 3 language processing unit, which is designed to support the deterministic, low-latency requirements of trillion-parameter model inference.

Huang promised that Vera Rubin will deliver a “generational leap” in AI compute performance. “Seven breakthrough chips, five racks, one giant supercomputer, built to power every phase of AI” was how Huang described it. “The agentic AI inflection point has arrived.”

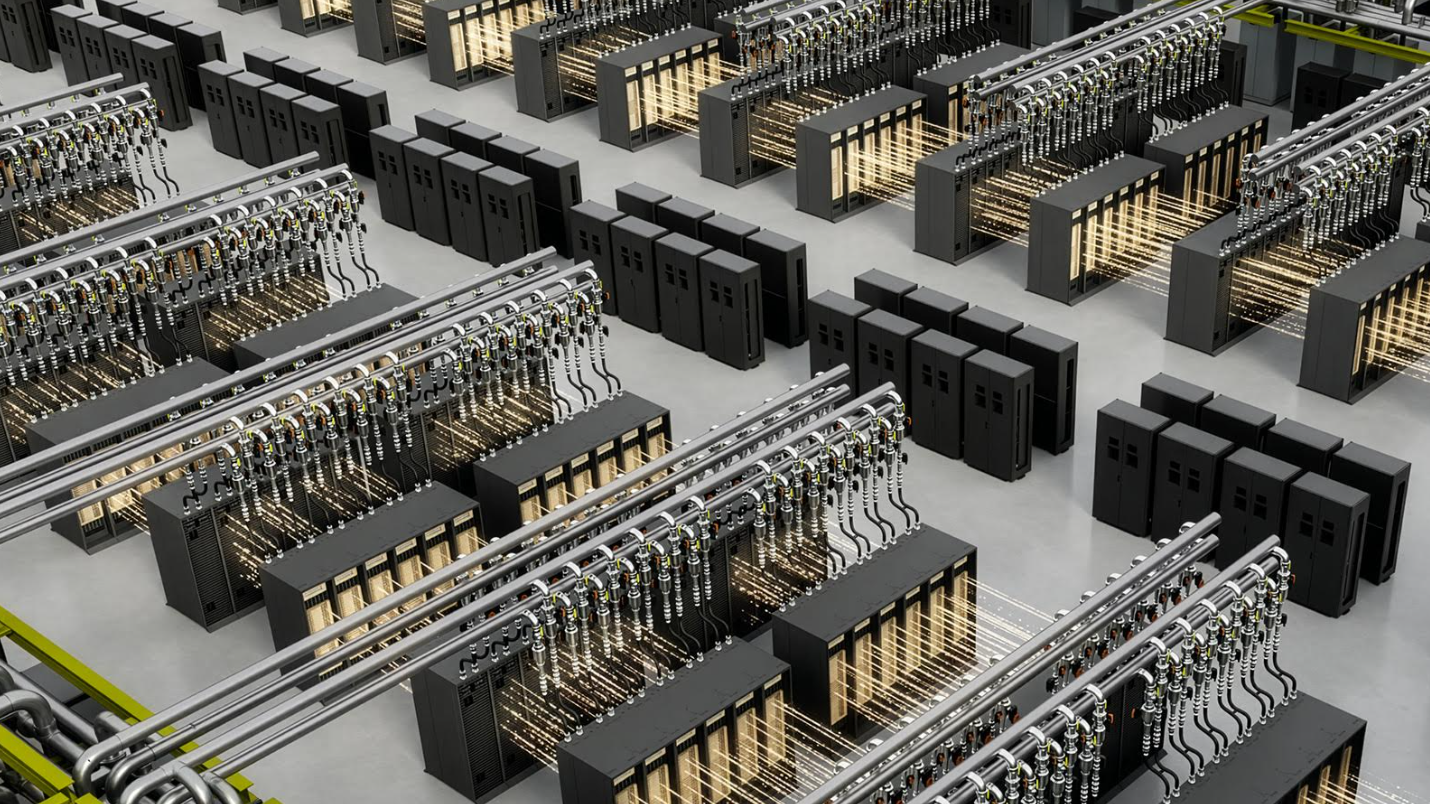

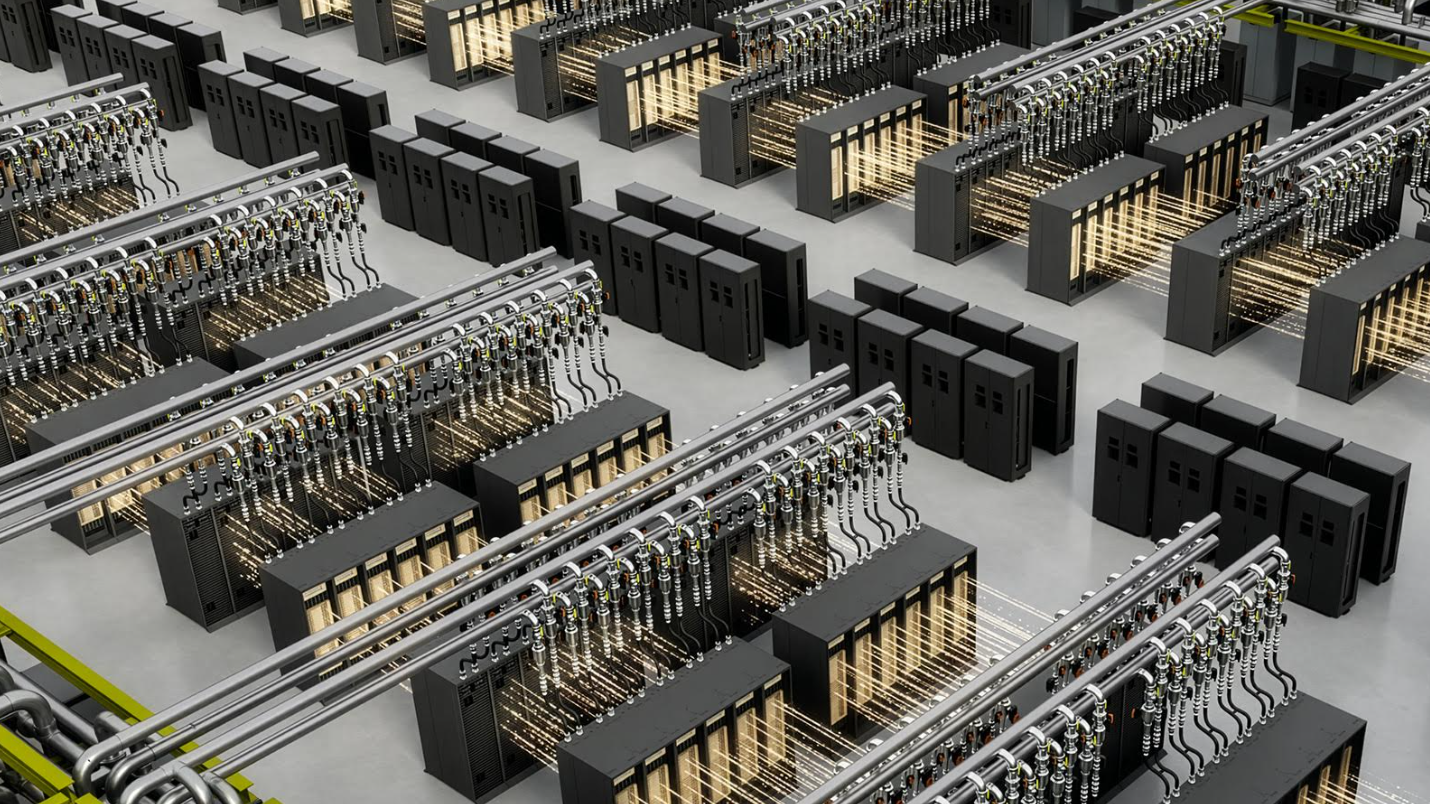

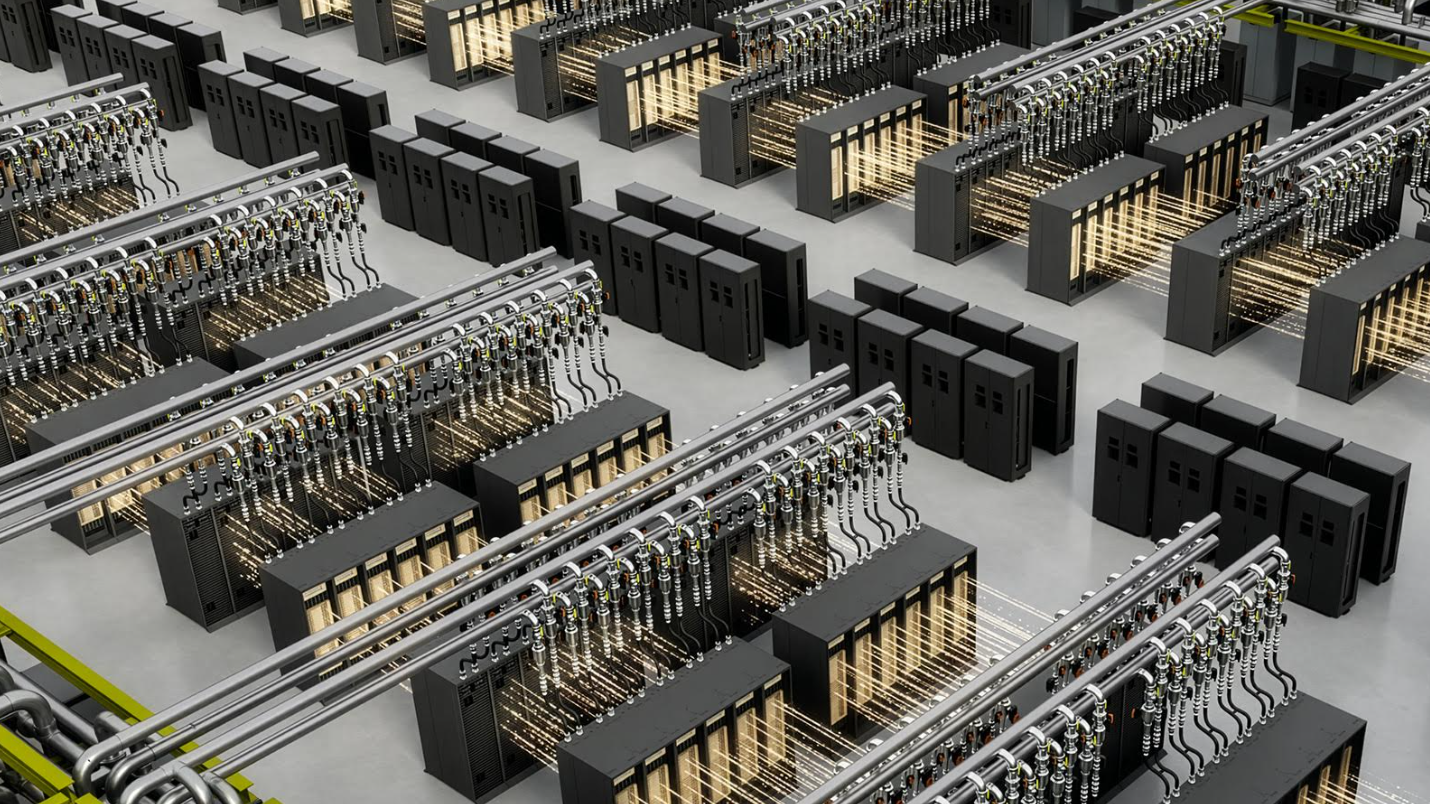

Nvidia said it wants to move away from selling discrete chips and standalone servers and move toward selling complete “AI factories,” made up of fully integrated rack-scale systems and pod-scale deployments to support sovereign AI deployments.

At the heart of this strategy is the new Vera Rubin NVL72, which is a liquid-cooled rack-scale system made up of 72 Rubin GPUs and 36 Vera CPUs connected over its high-speed NVLink 6 interconnects. The system also integrates the new ConnectX-9 SuperNICs and BlueField-4 DPUs to achieve “breakthrough efficiency.”

For instance, Nvidia said the Vera Rubin NVL72 platform can train large mixture-of-experts models using just one-fourth as many GPUs as its previous-generation Blackwell chips would require. In terms of inference, the company said Vera Rubin will deliver 10 times greater throughput at just a 10th of the cost per token.

For agentic reasoning workloads, Nvidia has introduced the Vera CPU Rack, which consists of 256 CPUs in a single cluster. It’s aimed at reinforcement learning and agentic workloads that require heavy CPU-based simulation to validate GPU-generated results, the company explained. According to Nvidia, these racks are 50% faster and twice as efficient as traditional x86-based CPU servers at reasoning tasks.

Meanwhile, the BlueField-4 STX storage rack is meant to act as a dedicated “context memory” tier, which AI agents can use to maintain coherence during massive, multi-turn interactions, Nvidia said. By offloading cache data to the BlueField-4 chips, companies can increase their inference throughput by up to five times.

Finally, there’s the Nvidia Groq LPX Rack, which is meant to set new standards for accelerated computing. It’s aimed at low-latency workloads and the large context demands of agentic systems, and combines the performance of Vera Rubin with Nvidia’s custom LPUs to accelerate inference throughput per megawatt by 35 times. When paired with the Vera Rubin GPUs, the LPUs will boost performance by jointly computing each layer of the underlying AI model for every output token, Nvidia said.

OpenAI Group PBC and Anthropic PBC CEOs Sam Altman and Dario Amodei both heaped praise on the new platform. “Nvidia infrastructure is the foundation that lets us keep pushing the frontier of AI,” Altman said. “With Nvidia Vera Rubin, we’ll run more powerful models and agents at massive scale and deliver faster, more reliable systems to hundreds of millions of people.”

The new chips aren’t just about raw performance – they also tackle two of the major problems with AI infrastructure, namely power consumption and heat. With the new Vera Rubin DSX AI Factory Reference Design, Nvidia has unveiled a comprehensive blueprint for data center operators to build out multiple, massive clusters of Vera Rubin chips.

The DSX stack is powered by Nvidia’s DSX Max-Q software, which uses dynamic power provisioning to squeeze 30% more infrastructure into a fixed power envelope, the company explained. Meanwhile, DSX Flex helps AI factories interact with the power grid to unlock “stranded” energy. Companies including Dassault Systèmes SA and Cadence Inc. say they have already integrated the blueprint into their respective Systems Engineering and Reality Data Center Digital Twin platforms.

In addition, Nvidia rolled out the Nvidia Omniverse DSX Blueprint, which allows customers such as Schneider Electric Co. and Siemens AG to build “physically accurate digital twins” of their AI factories. By simulating airflow, power utilization, network topologies and thermal behavior virtually, those companies will be better able to optimize their AI infrastructure and squeeze out more performance at lower costs.

Nvidia said customers won’t have to wait long to get their hands on the new Vera Rubin platform. It’s expected to ship in the second half of the year via cloud infrastructure partners such as Amazon Web Services Inc., Google Cloud and Microsoft Corp., as well as hardware manufacturers such as Dell Technologies Inc. and Supermicro Computer Inc.
