AI

In the race to define enterprise artificial intelligence, most of the industry is looking up the stack — chasing smarter models, bigger benchmarks and more capable generative AI systems.

In some ways, Oracle Corp. is looking in a different direction.

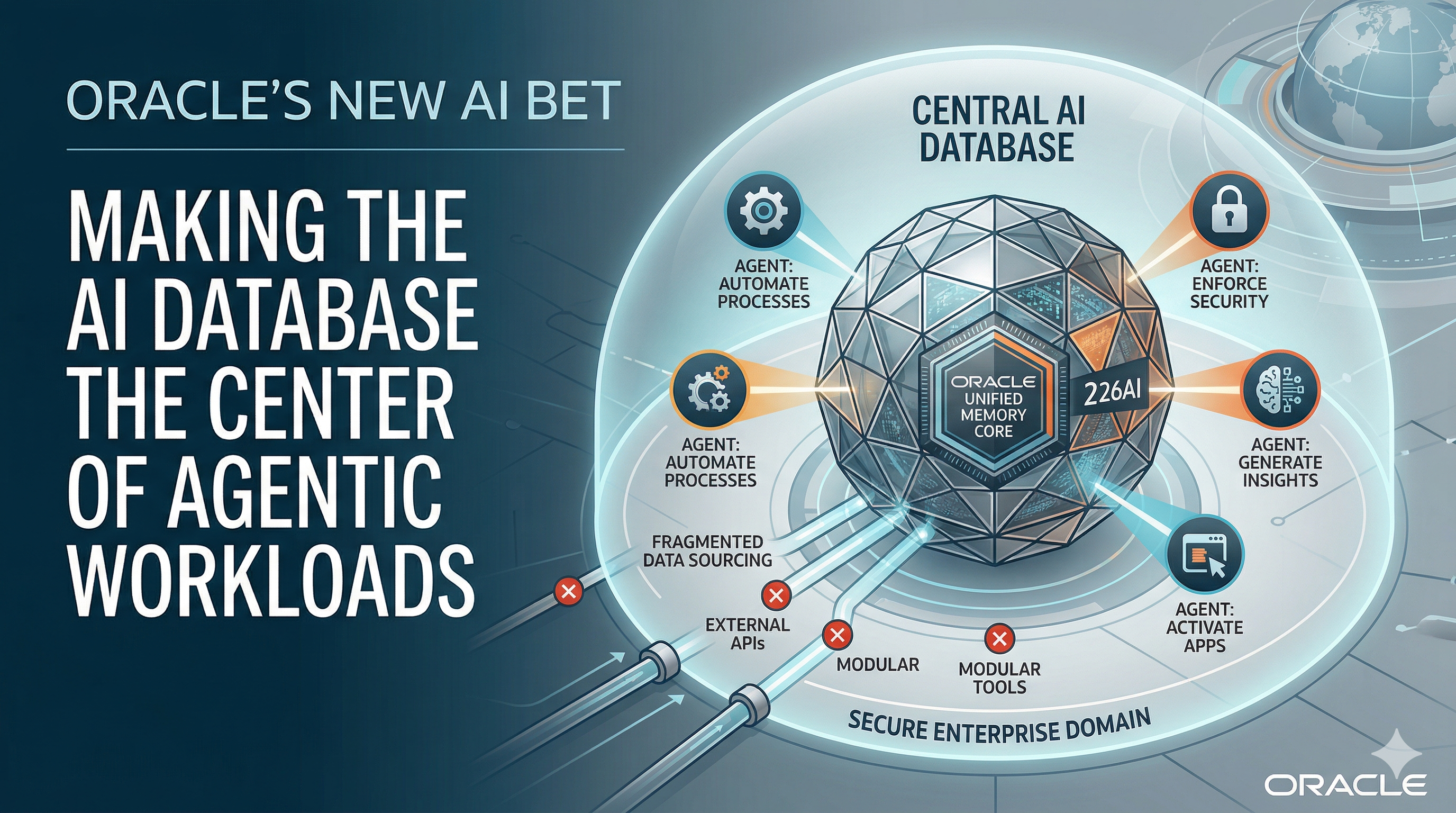

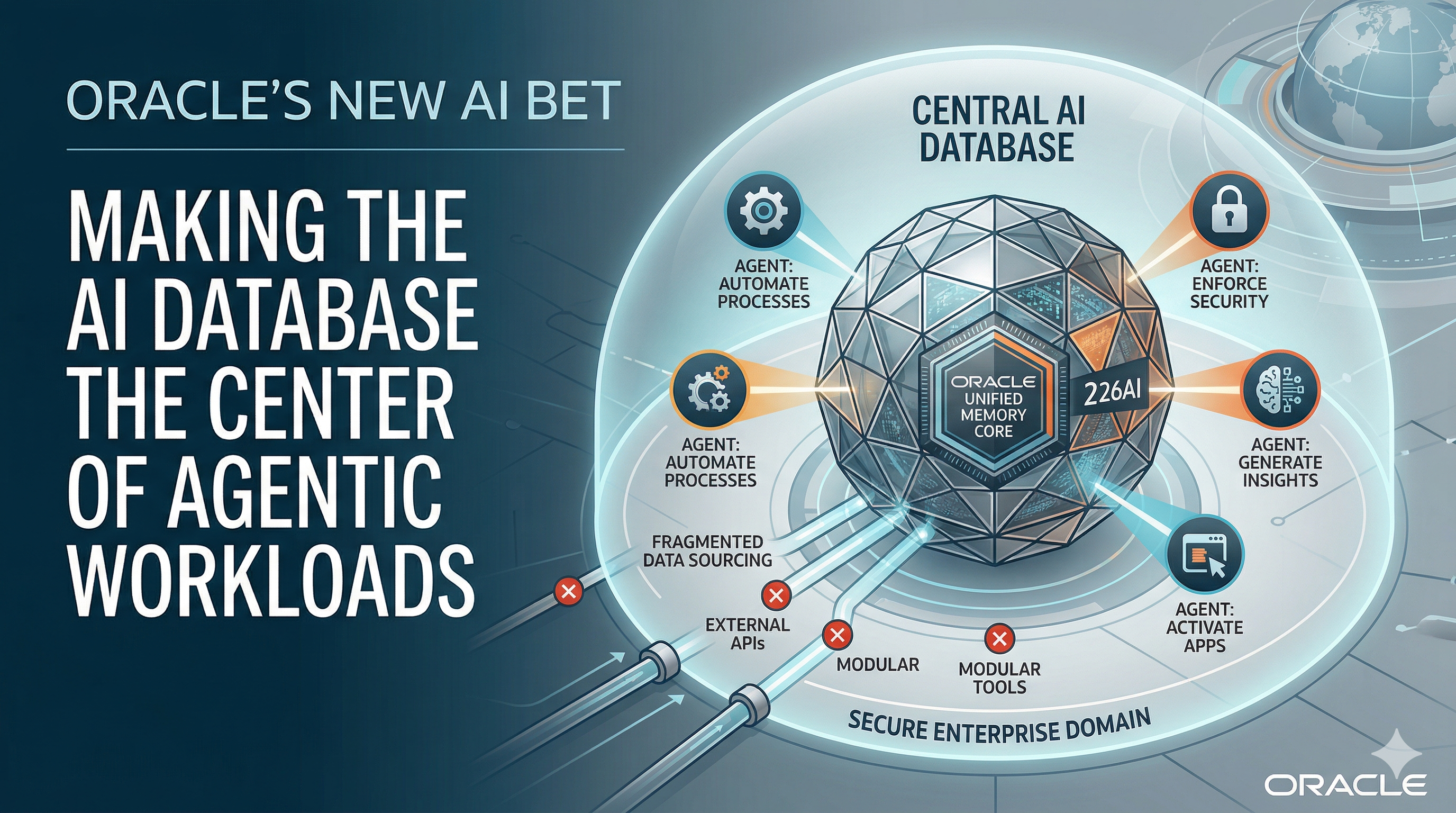

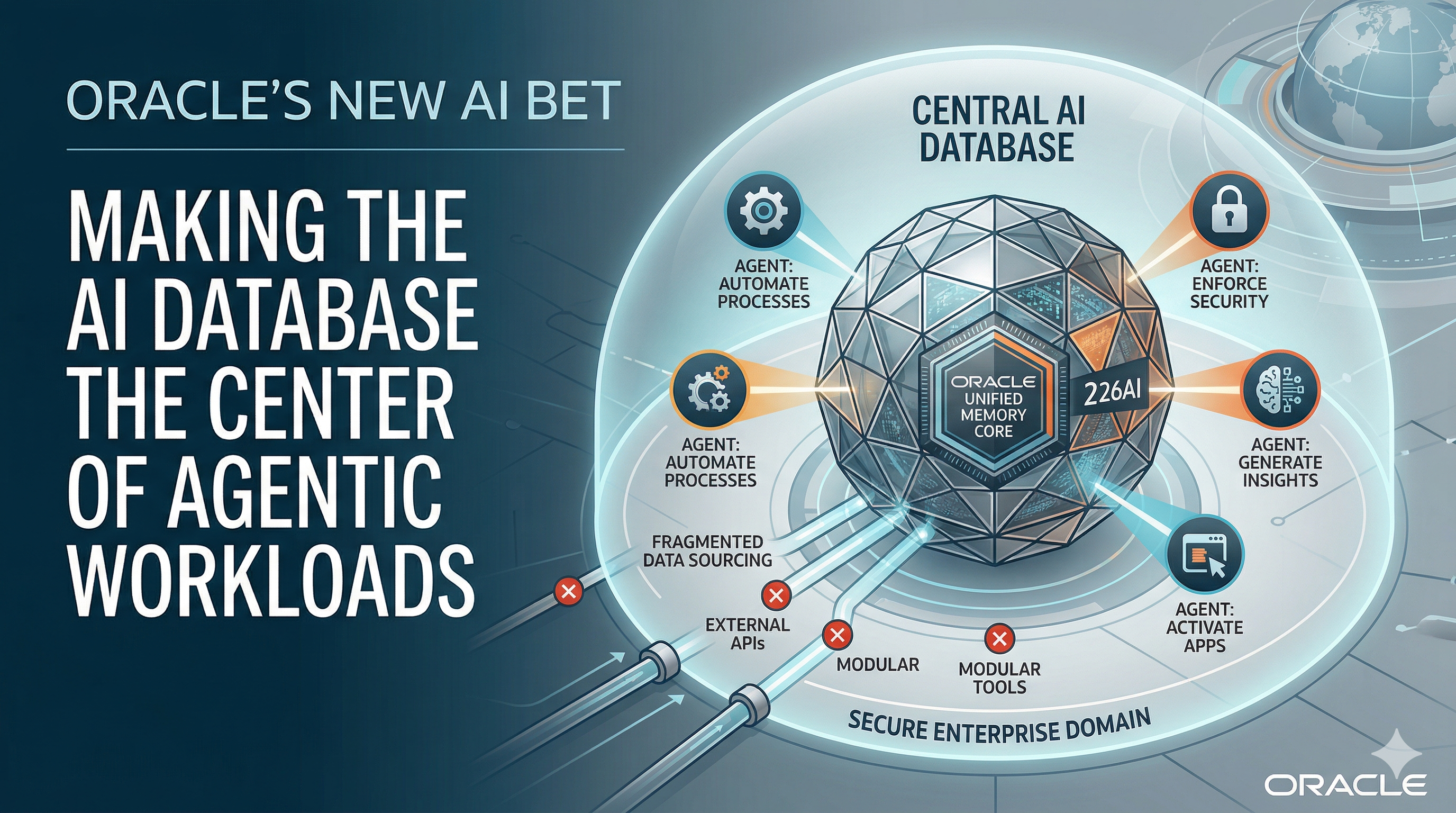

At its latest showcase during the Oracle AI World Tour London 2026, the company made a calculated move in the agentic AI market. Instead of competing head-on in the model wars, Oracle is positioning the database as the center of gravity for enterprise agentic AI, effectively arguing that the future of AI won’t be determined by agents alone, but by where and how they interact with data.

It’s a familiar posture for Oracle, a company that has historically won by controlling the system of record. But in the context of AI, the implications are larger. The database, long treated as back-end plumbing, is being recast as something closer to an operating system for enterprise intelligence.

That shift starts with a blunt view: The bottleneck in enterprise AI isn’t the model.

Oracle supports open models, emerging agent protocols such as MCP and A2A, vector search and open table formats such as Apache Iceberg. Its core argument is more opinionated, though: The most secure and scalable approach to agentic AI runs inside the database.

Despite rapid advances in large language models, many companies remain stuck in pilot mode. Early experiments show promise, but scaling those systems into production has proved far more difficult. The issue isn’t generating outputs. It’s grounding those outputs in real, governed, constantly changing enterprise data.

Most corporate data environments are fragmented by design. Information is spread across transactional systems, analytics platforms and data lakes, often duplicated and inconsistently governed. Layer AI agents on top — systems designed not just to respond, but to act — and those inconsistencies quickly become liabilities.

Oracle’s answer is to remove the seams.

The company’s latest push embeds agentic AI capabilities directly into the database, collapsing what has become an increasingly complex and costly stack. In a typical modern architecture, vector databases, orchestration frameworks and application logic sit alongside traditional systems, all requiring synchronization. Oracle is betting that this modular approach, while flexible, is ultimately too fragile, too costly and too exposed for production-scale, enterprise-grade AI.

Instead, it is promoting a converged data engine — one architecture where transactional data, embeddings, graph relationships, spatial data and security controls coexist and operate in real time.

Central to that vision is a unified memory layer. Rather than moving data between specialized systems, the idea is to allow AI agents to operate directly on live enterprise data in its native form. If successful, this would reduce latency and eliminate the inconsistencies that arise from maintaining multiple copies of the same information.

The company is also introducing what amounts to an internalized agent development model — one that brings the creation and execution of AI agents inside the enterprise boundary. Today, much of that innovation is happening in external ecosystems, where flexibility comes at the cost of control.

Oracle’s approach is more restrictive, but also more governed, positioning agents as managed workloads rather than experimental tools. Enterprises aren’t looking for experiments. They’re looking for production.

Security, long a selling point for Oracle, is being extended to this new paradigm. In traditional systems, access controls are often enforced at the application layer. Oracle is pushing those controls down into the database itself, applying policies at the row, column and cell level, and tying them to both user and agent identities. In a world where agents generate queries dynamically, enforcing guardrails in the database rather than in application code could prove to be a significant differentiator for enterprise workloads.
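To make the idea concrete, here is a minimal Python sketch of data-layer enforcement. It is an illustration only, not Oracle's implementation or API: the `Principal` class, the sample rows, the `AGENT_MASKED_COLUMNS` set and the region rule are all hypothetical. The point it shows is that the same policy check runs on every result set, whoever or whatever wrote the query.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical identity model: every query carries the human user and,
# if applicable, the AI agent acting on that user's behalf.
@dataclass(frozen=True)
class Principal:
    user: str
    agent: Optional[str]  # None when a person queries directly
    region: str

# Hypothetical table contents, for illustration only.
ROWS = [
    {"id": 1, "region": "EU", "customer": "Acme", "salary": 90000},
    {"id": 2, "region": "US", "customer": "Globex", "salary": 120000},
]

# Assumed column policy: agents never see these columns.
AGENT_MASKED_COLUMNS = {"salary"}

def row_visible(row: dict, principal: Principal) -> bool:
    """Assumed row policy: a principal sees only rows from its own region."""
    return row["region"] == principal.region

def apply_policies(rows: list, principal: Principal) -> list:
    """Filter rows, then mask restricted columns for agent-issued queries."""
    result = []
    for row in rows:
        if not row_visible(row, principal):
            continue
        masked = {
            col: (None if principal.agent is not None
                  and col in AGENT_MASKED_COLUMNS else value)
            for col, value in row.items()
        }
        result.append(masked)
    return result

if __name__ == "__main__":
    human = Principal(user="alice", agent=None, region="EU")
    bot = Principal(user="alice", agent="support-bot", region="EU")
    print(apply_policies(ROWS, human))
    print(apply_policies(ROWS, bot))
```

Because the filter sits below the query rather than in application code, a dynamically generated query cannot bypass it. In this sketch the agent acting for the same user sees the same rows with the restricted column masked.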

Taken together, these moves reflect a broader strategy: Reduce AI data fragmentation.

The current AI landscape is defined by specialization. A growing ecosystem of vendors offers solutions for every layer of the stack. That has accelerated innovation, but it has also introduced complexity — and with it, operational risk.

As organizations move beyond experimentation, stitching these components together becomes a challenge in its own right. Moving data between single-purpose systems adds latency and cost. Having agents make multiple stops to retrieve answers compounds the problem, and managing context across fragmented systems becomes unnecessary overhead.

Oracle is betting that, for large enterprises, simplicity will outweigh modularity when it comes to agentic AI.

At the same time, a converged data architecture must compete with a fast-moving ecosystem of specialized tools, each evolving rapidly. Developers, who have driven much of the momentum in AI, may resist more opinionated platforms. And many enterprises are already deeply integrated with hyperscale cloud providers, raising questions about how Oracle’s approach fits alongside existing investments.

Oracle’s counter is pragmatic. Its agentic AI capabilities are designed to run across major cloud environments, including Amazon Web Services, Microsoft Azure and Google Cloud, allowing enterprises to activate AI where their data already resides. The goal is to minimize movement, reduce fragmentation and align with existing data gravity rather than disrupt it.

Oracle is architecting AI around its customers’ data. By bringing AI to where data already lives, organizations — especially more conservative ones — can activate AI within environments they already trust, turning AI into an extension of existing systems rather than an experimental overlay.

The industry, in effect, is splitting along philosophical lines. One camp favors composability, where loosely coupled systems can be mixed and matched. The other, increasingly represented by Oracle, is advocating for convergence — tightly integrated platforms designed to reduce operational friction.

Both approaches have merit. The outcome will likely depend less on ideology than on execution.

What is clear is that the center of the AI conversation is shifting.

As enterprises move from prototypes to production, the challenges become less about generating content and more about managing data — ensuring accuracy, consistency and security at scale. In that environment, the infrastructure layer regains importance.

Oracle’s strategy is to elevate the database from infrastructure to the control plane for agentic AI.

If AI agents are to become embedded in core business processes, the systems that feed them data — and govern their actions — will matter as much as the intelligence they exhibit.

Oracle is betting that the future of enterprise AI will be decided not at the edge of the stack, but at the data foundation itself.

Exclusive Oracle World Tour video

TheCUBE interviewed Tirthankar Lahiri, senior vice president of mission-critical and AI engines in Oracle’s AI Database group.
