AI

The geopolitical dislocations ripping through the stock market are filtering down to information technology budgets in the form of increased uncertainty.

It seems that every quarter of budget optimism is followed by some external event that causes organizations to tighten their belts. Specifically, we’ve seen the increased momentum from January’s chief information officer sentiment survey on spending pull back, with expected growth falling from 4.6% to 3.6%. War, oil prices, the threat of inflation and even the prospect of Fed tightening now loom larger.

Although big tech continues massive capital expenditures – and the genuine enthusiasm from this month’s Nvidia GTC and RSAC events is still being felt – mainstream enterprises are once again expressing caution in their spending intentions. In addition to economic and world affairs, artificial intelligence success still eludes most mainstream organizations.

Our observation is that the tech industry is in the third inning of the AI wave, which started in earnest in the middle of the last decade with DeepMind and other significant research milestones that led to the ChatGPT moment and subsequent moments such as Claude Code and OpenClaw. Meanwhile, organizations are still in the first inning and rightly cautious about deploying AI at scale.

The data suggests that though virtually all firms are leaning into AI, the share realizing return on investment at large scale remains in the low to mid-teens as a percentage of respondents. Despite leading thinkers such as Nvidia Corp. Chief Executive Jensen Huang advising firms not to focus on ROI and to let innovation flourish irrespective of hard dollar returns, the reality is that in the land of enterprise customers, tangible returns and risk management remain key governors of spending.

In this Breaking Analysis, we share new survey data from Enterprise Technology Research that quantifies macro spending and AI adoption in the enterprise. And we put forth a thesis as to why the gap exists between AI enthusiasm and enterprise adoption — and how the software stack will evolve to make adopting and securing AI simpler. Finally, we draw on the insights from GTC 2026 and what Jensen called “the most important slide” of his keynote. It puts forth a new revenue model that potentially unlocks a new wave of enterprise value.

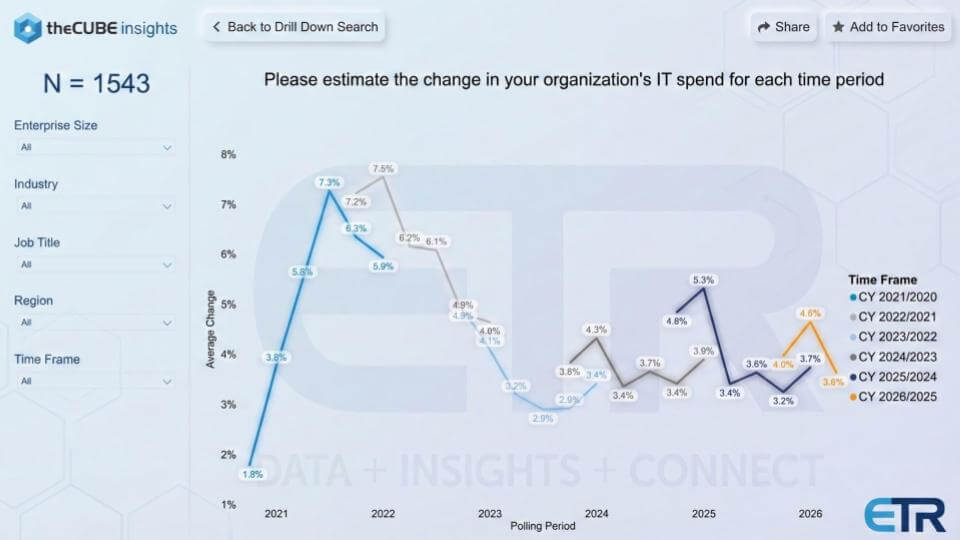

The first chart is the one we keep coming back to because it shows a time series on macro spending sentiment. It’s from ETR’s quarterly drill-down survey of expected IT spending changes, going back to the COVID era, with a large sample size (N = 1,543). The story is one of a whipsaw between optimism and caution – and how quickly sentiment moves when world events get in the way.

Coming out of COVID, the data above shows a big uptick in budget flexibility. IT spending expectations surged into the 7.3% to 7.5% range. Then the air came out as rates rose and uncertainty took hold. By 2022, expectations compressed and ultimately bottomed at 2.9%, moving inversely with interest rates. This was a reminder that when the macro tightens, IT budgets tighten with it.

From there, the chart becomes a map of confidence shocks. As the Fed started to lower rates, spending expectations improved, but the recovery wasn’t smooth. We saw periodic pops – 4.3%, then up to 5.3% – followed by pullbacks as new uncertainties such as Ukraine and tariffs surfaced. The most recent example is seen exiting December at 4.0%, rising to 4.6% in January, then falling back to 3.6% now with the war in the Middle East. We feel that’s a meaningful swing in a short period of time, especially given AI’s overall momentum.

We believe the right way to interpret the data is that IT spending is sensitive to the business climate, and the business climate is being shaped by rates, geopolitics, policy noise and headlines. It’s not always possible to prove causation with a single chart, but over many cycles the market data appears consistent – when uncertainty rises, budget confidence softens, and IT spending follows that trend.

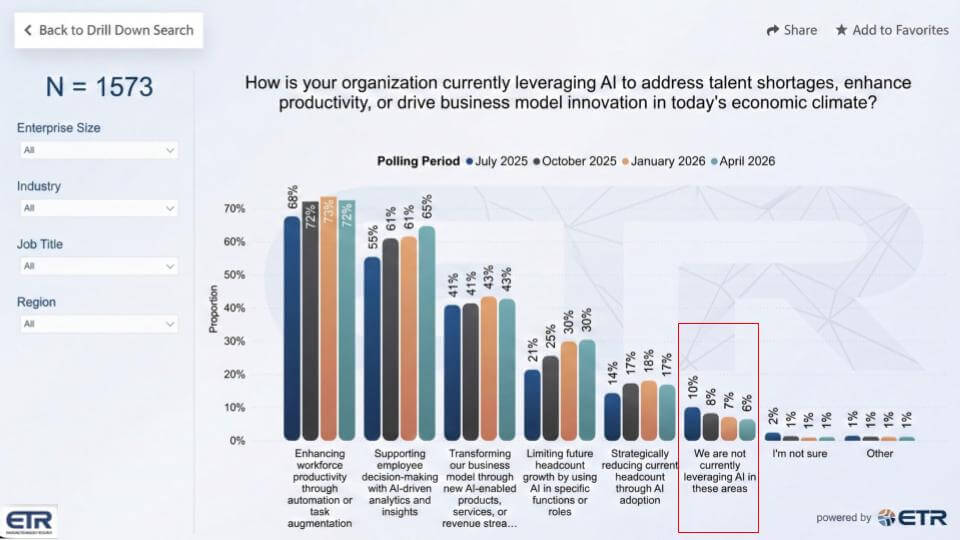

The slide below is a check on how organizations say they are using AI in the current climate (N = 1,573), and it has been asked consistently since July 2025. The top answer is what you’d expect – enhancing workforce productivity through automation or task augmentation. That answer has stayed in the low 70% range and has been durable across multiple quarters. The question we see organizations asking is: How do we make new breakthroughs beyond early use cases? In other words, firms are seeing early wins but they’re eager to see them compound.

The most impressive movement is the steady rise in supporting employee decision-making with AI-driven analytics and insights. That’s a logical next step after productivity because it builds on the work organizations have already done modernizing analytics. When data is organized and accessible, AI can amplify it quickly.

This is where the modern data stack players have seen real tailwinds – vendors such as Snowflake Inc. and Databricks Inc. are the poster children for consolidating analytics into usable platforms, with Oracle Corp. and others such as IBM Corp. also relevant in the broader market. The data suggests more organizations are now pushing AI into the “insights” layer, not just the “automation” layer.

Two other points stand out:

The bottom line in the data is that AI is in the building. Productivity remains the primary use case, decision support is gaining momentum, and the job impact is showing up first in hiring plans, not sudden mass reductions. Our take is that these are predictable and relatively straightforward early wins, but they’re not game-changing. Later in this post we posit an emerging new architecture that can support more dramatic organizational change as AI becomes simpler and safer to adopt.

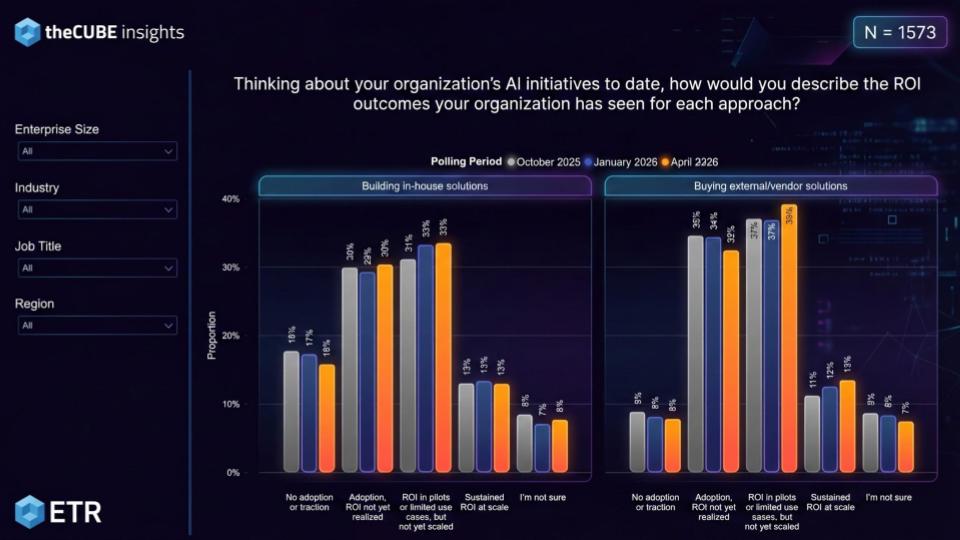

The chart below gets to the heart of the agentic gap – what kind of ROI organizations are actually reporting from AI initiatives so far (N = 1,573). ETR splits the data into two approaches: building in-house solutions on the left and buying external vendor solutions on the right. In both cases, “no adoption or traction” is declining, but it remains meaningful – especially on the in-house side. Embedded AI and vendor-delivered capabilities appear to have an easier path to adoption, which shows up in the lower “no traction” bars on the right-hand side.

The more telling story is what happens after initial adoption:

On the in-house side, “adoption, ROI not yet realized” sits around the 30% range and has been stuck. That implies a meaningful slice of organizations are building, experimenting, and learning – but not getting payback yet.

Then you hit the dominant category on both sides of the chart: ROI in pilots or limited use cases, but not yet at scale. It’s roughly 33% for in-house and 39% for vendor solutions. That is the clearest indicator that AI is working in pockets, but most enterprises are still struggling to industrialize it.

Finally, the metric everyone cares about – sustained ROI at scale – sits in the low to mid-teens in both cases, about 13%. That’s the headline. Whether organizations build or buy, only a small minority say they have durable ROI at scale.

This supports the broader point we’ve been making in that vendors are moving fast – from RAG-based chatbots to reasoning to agentic workflows – and enterprises are absorbing that shift more slowly. The constraint is not enthusiasm for AI or lack of vision. Rather, it’s operational readiness – AI governance, safety, security, integrating data, hardening processes and building repeatable deployment muscle memory so pilots can convert into production outcomes at scale.

We have argued in previous Breaking Analysis segments that as we moved from on-premises perpetual software models to software as a service, it changed firms’ technical, operational and business models. We further argue that a more profound change is coming with AI that will affect not only IT departments but entire organizations. We’ve written extensively about the infrastructure shift from general-purpose computing to accelerated architectures.

In this section we go further up the value chain and drill into the emerging AI software stack. Here we specifically project the technical layers we see emerging that will support more rapid AI adoption.

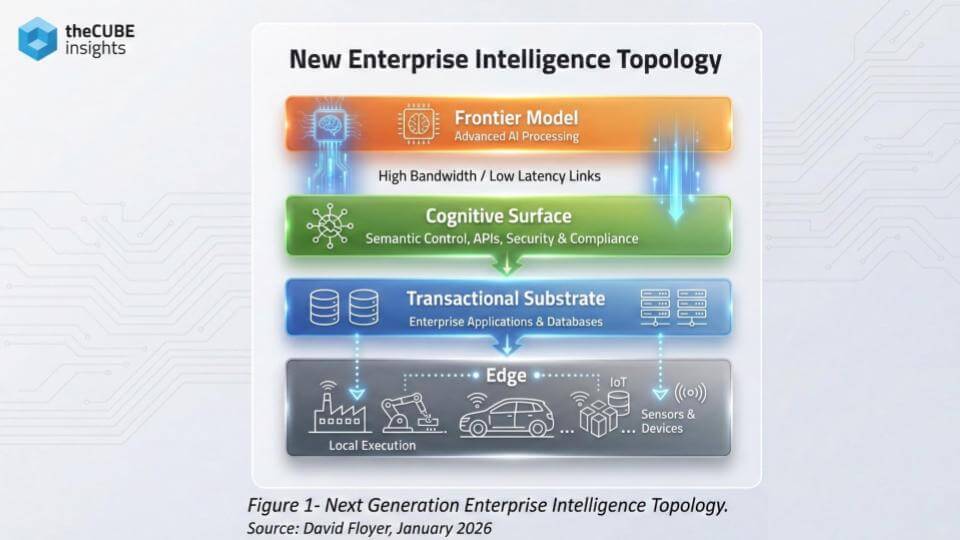

The slide below ties the ROI data to a deeper architectural shift – the enterprise is moving from an app-centric world to an intelligence-centric one. The diagram lays out a four-layer topology and, in our view, it explains what’s missing in today’s software stack and what has to emerge to help simplify adoption, support new business models and help organizations that are stuck in pilots. Organizations are enthusiastic about AI and they’re funding it. That’s not the constraint. The problem is that most enterprises are trying to bolt agentic workflows onto yesterday’s software stack, while the stack itself is being rearranged.

At the top sits the Frontier Model – the scarce, capital-intensive layer that produces tokens. It runs on the most advanced hardware, improves rapidly and is increasingly concentrated in a small number of providers (OpenAI Group PBC, Anthropic PBC, Google LLC and xAI Holdings Corp.). For most enterprises, building this layer is not a viable objective. The economic reality is that frontier models are enabled by AI factories – and most companies will consume them, not replicate them.

The more underappreciated layer is the Cognitive Surface. We have often referred to this layer as the System of Intelligence or SoI. This is where intent gets shaped, context gets assembled, constraints get enforced and outputs get turned into actions. It is also where the enterprise requirements live – security, policy, compliance, auditability, latency control and integration to existing systems.

This is the layer that turns “a smart model” into something operable inside a regulated enterprise. It is also the layer that determines switching costs, because policy, semantics and tool integration get hardened here. As such, switching vendors will become much harder, in our view.

We expect this layer to be distributed – but controlled. Large enterprises will want instances closer to their data for latency, sovereignty and regulatory reasons. But they won’t own the evolution of the cognitive surface. They’ll configure it, operate it and integrate it – within guardrails defined by the frontier model provider. That preserves enterprise control over data and policy while preventing semantic drift.
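To make the role of this layer more concrete, here is a minimal sketch of the sequence described above: a request arrives, policy is enforced, context is assembled, the model is called and every decision is written to an audit trail. All names here (`CognitiveSurface`, `Request`, `blocked_tags`, the `echo_model` stand-in) are hypothetical and invented for illustration; this is not any vendor's API, and a real implementation would involve far richer policy, identity and integration logic.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Request:
    user: str
    intent: str
    data_tags: set[str]  # hypothetical labels on the data the request touches


@dataclass
class CognitiveSurface:
    # Stand-in for a call to a frontier model API (assumption: text in, text out).
    model: Callable[[str], str]
    # Hypothetical policy: data tags that must never be sent to the model.
    blocked_tags: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def handle(self, req: Request) -> str:
        # 1. Enforce constraints before anything reaches the model.
        violations = req.data_tags & self.blocked_tags
        allowed = not violations
        # 2. Record the decision for auditability, whether allowed or not.
        self.audit_log.append(
            {"user": req.user, "intent": req.intent, "allowed": allowed}
        )
        if not allowed:
            return f"denied: {sorted(violations)}"
        # 3. Assemble context and hand the shaped prompt to the model.
        prompt = f"user={req.user}; intent={req.intent}"
        return self.model(prompt)


def echo_model(prompt: str) -> str:
    # Placeholder model so the sketch runs without any external service.
    return f"model saw: {prompt}"


surface = CognitiveSurface(model=echo_model, blocked_tags={"pii"})
ok = surface.handle(Request("analyst", "summarize pipeline", {"sales"}))
denied = surface.handle(Request("analyst", "export customers", {"pii", "sales"}))
print(ok)
print(denied)
```

The point of the sketch is that policy and audit logic sit outside the model itself, which is why this layer, once hardened, is likely to carry much of the switching cost.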

Below that sits the Transactional Substrate – the systems of record. This layer is essential because it stores truth and executes transactions. The change we project is that intelligence migrates upward. The apps and databases don’t disappear, but their role narrows to state, service level agreement guarantees and execution.

Finally, the Edge eventually becomes important because sensing and physical execution happen there. Capability at the edge will lag initially, but it becomes strategic as agents and automation demand local action and local autonomy when disconnected. This is where smaller language models will thrive, in our view.

The other key point is that the lack of a mature cognitive surface contributes to the agentic AI gap. We see this in the ROI data. Enterprises are being asked to move to a world where intelligence is produced in AI factories as tokens, accessed through application programming interfaces and governed through a cognitive surface. Until organizations (and SaaS players) build the control, governance and integration muscle memory in that middle layer, they’ll keep shipping pilots – and struggling to turn them into repeatable ROI at scale.

We see this model evolving, and the four frontier labs will be fundamental in supporting this new software stack. We believe OpenAI, Anthropic and Google will aggressively compete for enterprise traction, while xAI is best positioned for edge workloads, leveraging Elon Musk’s flywheel of Tesla, Optimus and SpaceX.

We believe the deeper shift underway is not just architectural – it’s economic. AI is catalyzing a model where intelligence is manufactured in AI factories as tokens, accessed through APIs, and paid for as a first-class line item. At the macro level, firms today spend approximately 4% of their revenue on technology. We believe this figure will rise to 10% or more within the next decade. Spend will move away from general-purpose computing toward accelerated computing – supported by extreme co-design across central processing units, graphics processing units and networks – with power as the governing constraint.
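As a rough check on what that projection implies, the sketch below computes the compound annual growth in technology's share of revenue needed to move from 4% to 10%. It assumes exactly 10 years and exactly 10%, both simplifications of "10% or more within the next decade", and the function name is ours for illustration.

```python
def implied_annual_growth(start_share: float, end_share: float, years: int) -> float:
    """Compound annual growth rate needed to move from start_share to end_share."""
    return (end_share / start_share) ** (1 / years) - 1


# Assumption: the shift from 4% to 10% of revenue plays out evenly over 10 years.
rate = implied_annual_growth(0.04, 0.10, 10)
print(f"Implied growth in tech share of revenue: {rate:.1%} per year")
```

Under those assumptions the share would need to grow a little under 10% a year. Note this is growth in the share of revenue, not in absolute dollars; any revenue growth would compound on top of it.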

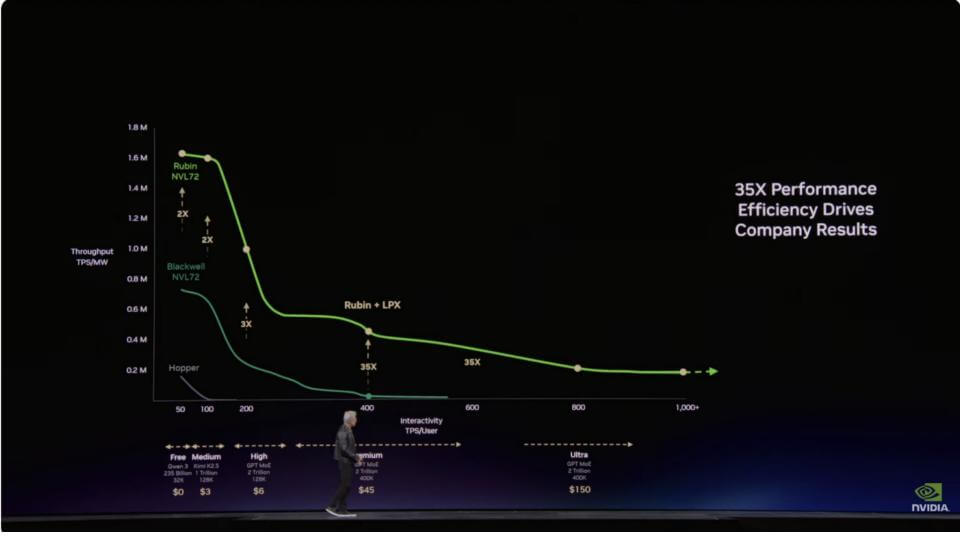

This is why Jensen said the slide below from GTC was the most important. The vertical axis is throughput normalized by energy (tokens per second per megawatt). The horizontal axis is interactivity – a measure of responsiveness that is broader than simple latency, although latency is the main driver of user experience. In a power-constrained world, moving up the curve on the vertical axis means dollars to operators. This is what we wrote about as “Jensen’s New Law” in a previous Breaking Analysis.

Access to the latest and greatest systems from Nvidia can be the difference between a stalled AI program and one that scales. Hyperscalers, AI clouds and frontier labs have known this for years. Getting on the Nvidia technology curve is critical for leadership. The annual cadence from Hopper to Blackwell to Rubin is the key – massive step-function improvements on a 12-month cycle, not an 18- to 24-month Moore’s Law clock. The 35X improvement called out on the slide below is the kind of delta that changes unit economics overnight if you have a fixed power budget. The big capex spenders know this and the dynamic will migrate to enterprises as described by Jensen and shown on the horizontal axis.
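The fixed-power argument can be illustrated with simple arithmetic. The sketch below is a toy model, not Nvidia's: the baseline throughput, the 100-megawatt power budget and the price per million tokens are placeholder assumptions chosen only to make the numbers run, and the only figure taken from the slide is the 35X multiplier. It also assumes the price per token stays constant, which is unlikely to hold in practice.

```python
import math
from dataclasses import dataclass

SECONDS_PER_YEAR = 365 * 24 * 3600


@dataclass
class SystemGeneration:
    name: str
    tokens_per_sec_per_mw: float  # throughput normalized by power


def annual_token_revenue(
    system: SystemGeneration,
    power_budget_mw: float,
    usd_per_million_tokens: float,
) -> float:
    """Revenue per year if every token produced is sold at a flat price."""
    tokens_per_year = (
        system.tokens_per_sec_per_mw * power_budget_mw * SECONDS_PER_YEAR
    )
    return tokens_per_year / 1_000_000 * usd_per_million_tokens


# Placeholder assumptions, not measured figures.
baseline = SystemGeneration("baseline", tokens_per_sec_per_mw=1_000_000.0)
next_gen = SystemGeneration("next_gen", baseline.tokens_per_sec_per_mw * 35)
POWER_BUDGET_MW = 100.0          # the fixed constraint
USD_PER_MILLION_TOKENS = 1.0     # hypothetical flat price

baseline_rev = annual_token_revenue(baseline, POWER_BUDGET_MW, USD_PER_MILLION_TOKENS)
next_rev = annual_token_revenue(next_gen, POWER_BUDGET_MW, USD_PER_MILLION_TOKENS)
uplift = next_rev / baseline_rev
print(f"Revenue uplift at fixed power: {uplift:.0f}x")
```

Because power is held constant, the throughput multiplier passes straight through to token output, and to revenue if prices hold. That is the sense in which a step-function gain in tokens per second per megawatt changes unit economics.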

That’s the other part of the chart where the business model starts to evolve. Training monetizes primarily on the vertical axis – maximize throughput per megawatt and customers “buy more, save more” or “buy more, make more” depending on whether they’re building models or selling capacity to model builders.

Interactivity is a second monetization opportunity on the X-axis. It creates tiers – free, medium, high, premium, ultra – where users pay more for better responsiveness, and where the most demanding workloads drive the highest willingness to pay. Low-latency inference becomes a priced product and a service delivered through software.

That’s where the Groq integration and Nvidia’s $20 billion Groq investment start to make sense. Rubin + LPX extends the curve further to the right – preserving throughput while improving interactivity. The implication is that the platform that can move the curve right without collapsing the curve down gets to charge for use cases that are sensitive to responsiveness, especially in agentic workflows and edge inference. The spectrum goes from freemium (free ChatGPT) to paid ($20/month) to a higher-paid tier ($200/month) to coding assistance to super-low-latency agentic workloads – ultra-expensive but worth it because they drive revenue.

The takeaway for enterprises is that this is not something they can absorb overnight. They have to pick the use cases that matter, build the systems, validate safety and controls, operationalize them, prove ROI, then scale. At the same time, the cost model changes. Token spend becomes part of cost of goods sold – the way cloud costs became part of SaaS COGS – and organizations start managing token budgets as a core operating discipline.
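What managing token budgets as an operating discipline might look like can be sketched in a few lines. The `TokenBudget` class, the team name, the dollar limit and the per-token price below are all hypothetical and chosen for illustration; real metering would come from a provider's billing data.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    owner: str
    monthly_limit_usd: float
    spent_usd: float = 0.0

    def record(self, tokens: int, usd_per_million_tokens: float) -> float:
        """Add the cost of a batch of tokens to the running total and return it."""
        cost = tokens / 1_000_000 * usd_per_million_tokens
        self.spent_usd += cost
        return cost

    @property
    def remaining_usd(self) -> float:
        return self.monthly_limit_usd - self.spent_usd

    @property
    def over_budget(self) -> bool:
        return self.spent_usd > self.monthly_limit_usd


def token_cogs_ratio(token_spend_usd: float, revenue_usd: float) -> float:
    """Token spend as a fraction of the revenue it supports."""
    return token_spend_usd / revenue_usd


# Hypothetical team, limit and price.
budget = TokenBudget(owner="support-agents", monthly_limit_usd=1_000.0)
cost = budget.record(tokens=50_000_000, usd_per_million_tokens=2.0)
print(f"spent ${cost:.2f}, remaining ${budget.remaining_usd:.2f}")
print(f"token COGS ratio: {token_cogs_ratio(budget.spent_usd, 2_000.0):.1%}")
```

Tracking spend against a limit and against the revenue it supports is the same habit SaaS companies built around cloud costs, applied to tokens.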

This is why cautious IT budget sentiment can coexist with AI enthusiasm. Customers don’t want to over-invest in legacy spend, and they don’t want to over-rotate into the new spend until they understand where they sit on the curve – and how to translate throughput and interactivity into unit economics, outcomes and predictable revenue returns.

Going forward this will create new revenue models and begin to break down organizational silos that exist today because of technology constraints. Many departments build their own custom tech stack to support their specific mission. Processes are developed and organized around this tech stack. Data lives in their siloed department and humans then integrate the data via extract/transform/load processes, data pipelines, data science workflows and the like.

Increasingly, we believe these silos will dissolve to a great extent as organizations gain access to intelligence in the form of tokens to power their agentic enterprises. They will build digital representations of their organizations and the operational model will evolve to support this new reality.

Jensen said something profound at GTC. Every CEO must understand where they sit on this Pareto curve. Are you monetizing on the vertical axis, the horizontal axis or both? Today a new employee gets a laptop and access to systems. In the future they will get a token budget to direct their revenue-producing agents. A software engineer paid $300,000 to $500,000 who spends only $5,000 annually on tokens would be like a chip designer eschewing modern design tools and using graph paper instead. They would be fired.

That sounds absurd, but the analogy holds for the future of business. Profit-and-loss managers, sales pros, operational staff, logistics planners, finance pros and others will all be managing armies of agents and burning tokens. Closing the agentic gap requires new technology, business and operational models that can be executed securely and safely.

That day is coming. Where do you sit on the Pareto curve, and how fast can you get there?
