INFRA

There’s a narrative forming in artificial intelligence infrastructure — and most people are still looking in the wrong place. Everyone’s focused on graphics processing units. More compute. Bigger clusters. Faster chips. That’s where the headlines are.

But the real constraint in AI factories isn’t compute. It’s movement.

Inside every modern AI system, data is constantly in motion — between GPUs, across racks, across clusters. And right now, that movement is hitting a wall. Not because bandwidth is lacking on paper, but because the architecture moving that data is fundamentally broken.

That’s where Resolight.ai Ltd. enters the picture. The stealth startup is aiming to tackle the hidden bottleneck in AI infrastructure, promising to rewrite the physical and economic equations of AI factories. Current players such as Nvidia Corp., Broadcom Inc., Marvell Technology Inc., Cisco Systems Inc. and Advanced Micro Devices Inc. are all working with co-packaged optics vendors such as Ayar Labs and Celestial AI (recently acquired by Marvell for up to $3.25 billion) to solve interconnect challenges. Resolight says its photonic processor can outperform these approaches by orders of magnitude.

I sat down with co-founder and Chief Executive Ofer Shapiro at theCUBE studio in Palo Alto for an exclusive coming-out-of-stealth discussion. What he described isn’t an incremental improvement — it’s a rearchitecture of AI networks that could shift the economics of the next generation of AI factories.

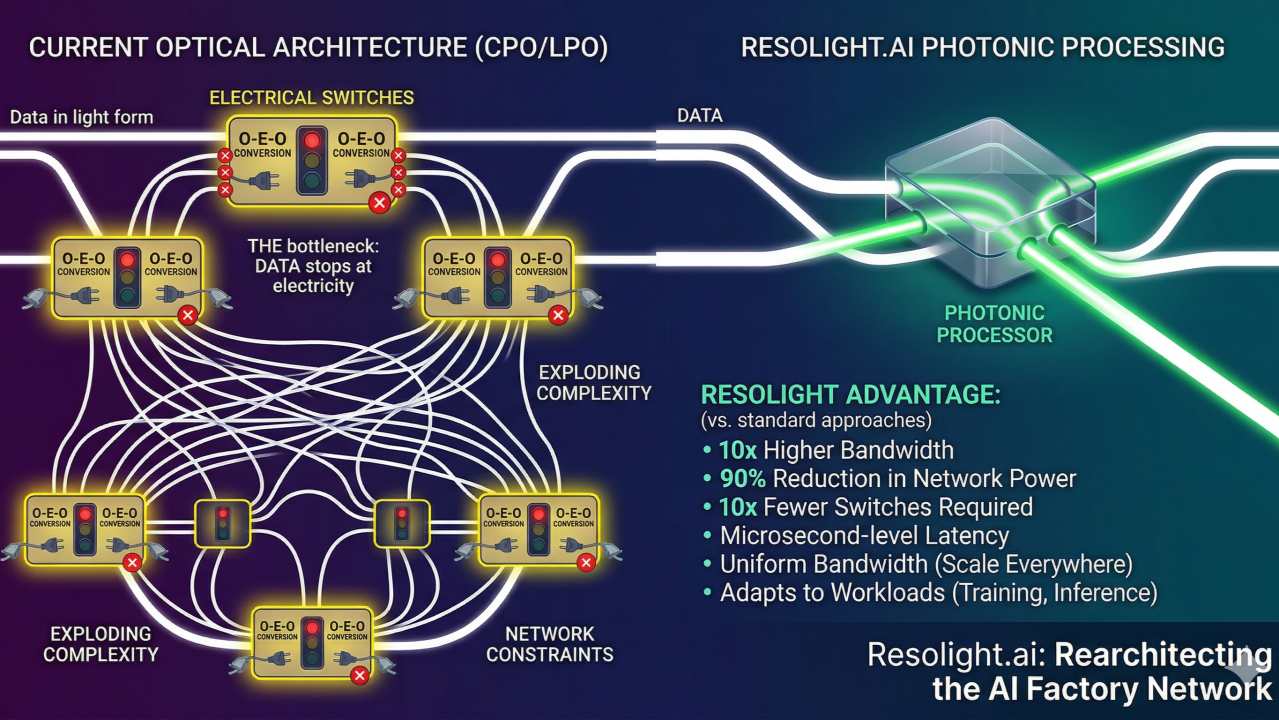

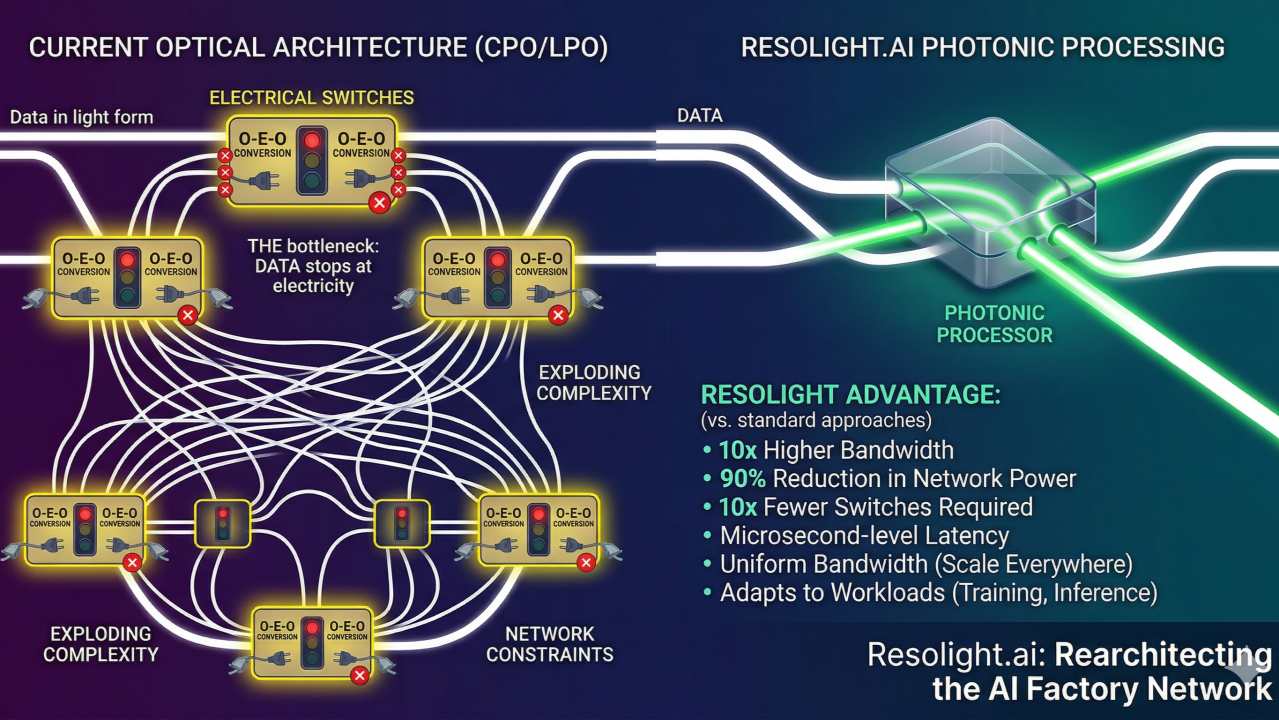

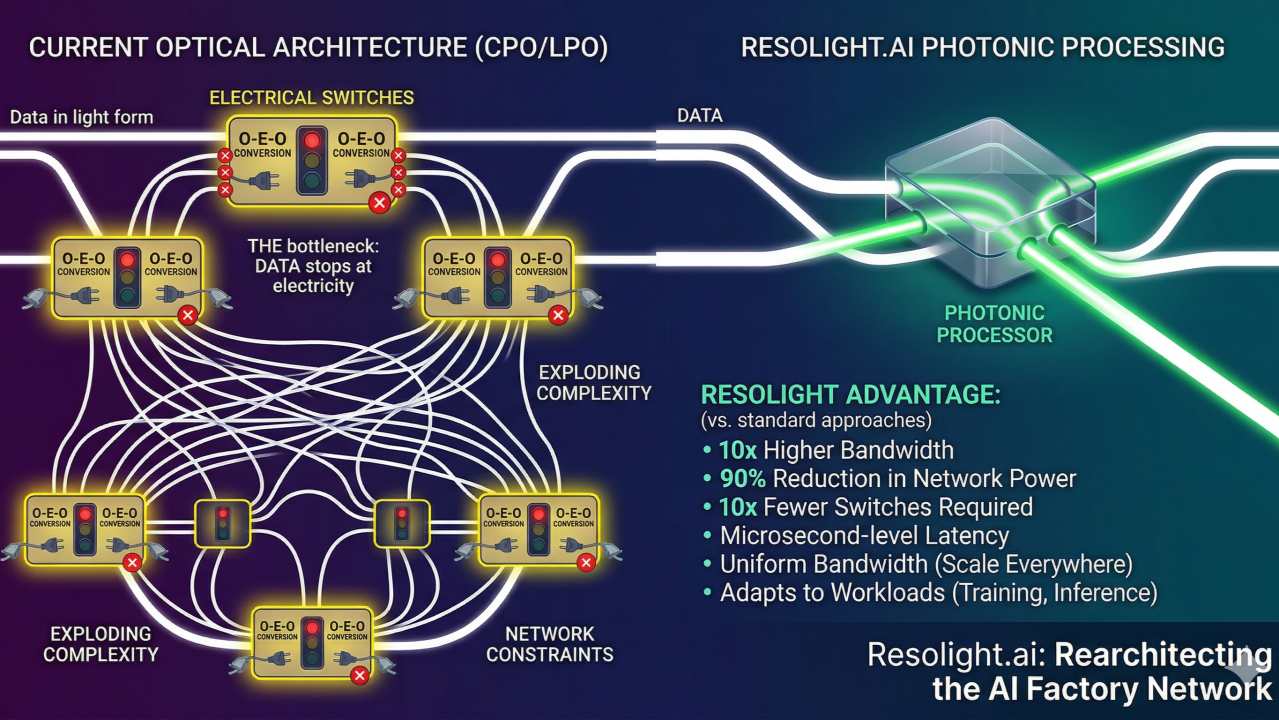

The AI industry has already made a key shift: moving from copper to optics. Co-packaged optics, or CPO, and linear pluggable optics, or LPO, are now standard for moving massive amounts of data across AI clusters.

But even after data moves as light, it is converted back into electrical signals for processing and routing. That conversion is the bottleneck.

“You build a high-speed optical highway… and then install a stoplight in the middle,” Shapiro explained. That stoplight is the electrical switch, which still limits throughput, adds latency and consumes power.

Resolight’s answer is what Shapiro calls photonic processing: keeping data in the optical domain throughout the network, eliminating the need for optical-to-electrical-to-optical conversions.

Rather than processing individual bits electronically, the system manipulates data in bulk, directly in light form. According to Shapiro, this approach delivers:

This isn’t simply a faster switch. It’s the removal of the traditional switching paradigm altogether.

AI factories are scaling at a pace the networks can’t yet support. Today’s architectures strain under:

Shapiro says that once you model millions of GPUs, the number of required switches and connections explodes — creating a hard wall to scaling.
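To get a rough feel for that growth, here is a minimal sketch that sizes a conventional non-blocking fat-tree (folded Clos) network built from fixed-radix electrical switches. This is not Resolight's model or Shapiro's numbers. It is the textbook fat-tree arithmetic, and the function name `size_fat_tree`, the 64-port radix and the one-network-port-per-GPU simplification are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FatTree:
    radix: int          # ports per switch (assumed identical for every switch)
    tiers: int          # switching layers needed to reach the endpoint count
    max_endpoints: int  # endpoints the fully built tree can attach
    switches: int       # total switches in the fully built tree
    links: int          # total links, including endpoint-to-switch links


def size_fat_tree(endpoints: int, radix: int) -> FatTree:
    """Size the smallest full, non-blocking fat tree that fits `endpoints`.

    Illustrative assumptions: every switch has the same even radix, each
    endpoint (for example one GPU) uses exactly one port, and the tree is
    built out completely rather than partially populated.
    """
    if radix < 4 or radix % 2:
        raise ValueError("radix must be an even number >= 4")
    if endpoints < 1:
        raise ValueError("endpoints must be positive")

    half = radix // 2
    tiers = 1
    # A full tree with L tiers attaches 2 * (radix/2)^L endpoints.
    while 2 * half**tiers < endpoints:
        tiers += 1

    max_endpoints = 2 * half**tiers
    # (2L - 1) * (radix/2)^(L-1) switches; with L = 3 this is 5 * radix^2 / 4.
    switches = (2 * tiers - 1) * half ** (tiers - 1)
    # Non-blocking: each tier boundary carries one link per endpoint.
    links = tiers * max_endpoints
    return FatTree(radix, tiers, max_endpoints, switches, links)


if __name__ == "__main__":
    for gpus in (1_000, 10_000, 100_000, 1_000_000):
        print(gpus, size_fat_tree(gpus, radix=64))
```

Under these assumptions, every time the endpoint count outgrows a tier the whole fabric gains another layer of switches and another full set of links. That is one simple way to see why switch and cable counts climb so steeply at very large GPU counts.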

Historically, AI infrastructure has traded off between:

Resolight’s architecture collapses that distinction. Its “scale everywhere” vision allows:

The network no longer dictates how compute is used. Software does. That’s a fundamental unlock for AI factory economics.

Reducing network complexity by an order of magnitude drives wide-ranging benefits:

Data center design can finally prioritize compute density and flexibility rather than over-engineering for network constraints.

Incumbents are optimized for incremental gains — faster ports, better application-specific integrated circuits, slightly improved efficiency. Resolight is doing something different: breaking the model those systems are built on.

Shapiro emphasized that this type of leap doesn’t come from optimizing existing designs. It comes from rethinking how information moves across the data center. That’s classic startup territory.

Publicly, there’s little noise. Privately, conversations with leading AI infrastructure teams are moving quickly from introductions to testing plans. The challenge is well understood, and a credible path forward has just arrived.

At Nvidia’s GTC conference, the message was clear: AI can’t wait. More compute drives more intelligence, which drives more revenue — but only if the system can feed that compute. Networking is no longer a support function. It is the engine’s transmission.

And today, that transmission is under strain.

AI factories are entering a new phase, and the constraints are shifting. Compute is no longer the bottleneck. The network is.

Resolight is betting that the next wave of AI infrastructure won’t be defined by faster chips alone — but by a fundamentally new way to move and process data.

If the company succeeds, this isn’t just a better component. It’s a new architecture. For investors and technologists building the next generation of AI factories, that’s where the real leverage lies.
