NEWS

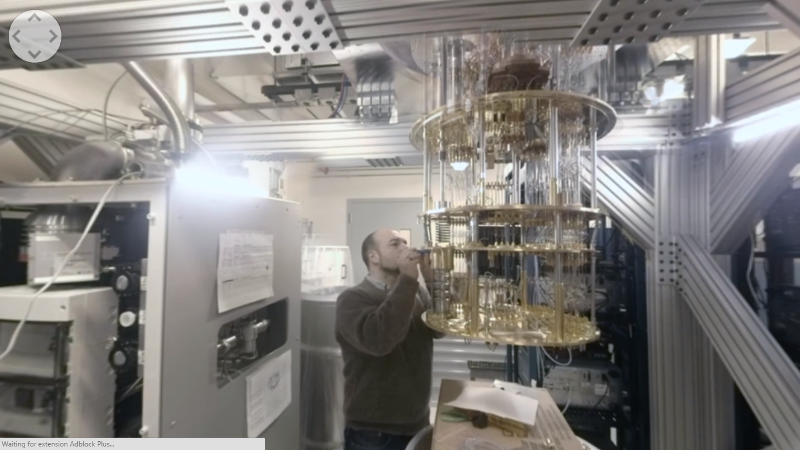

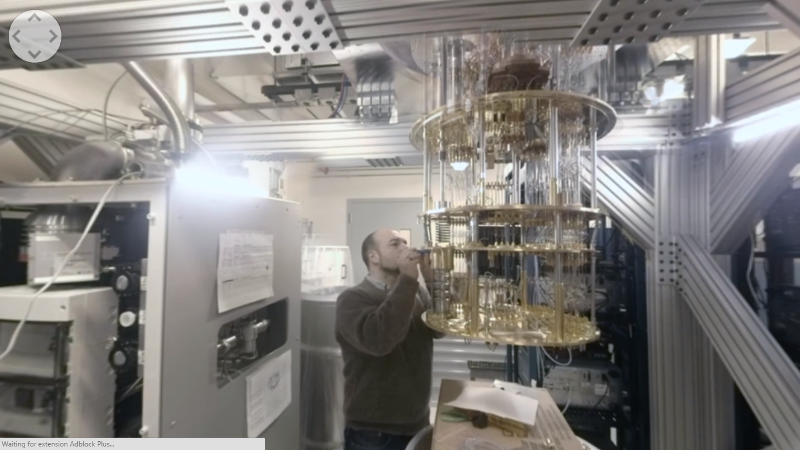

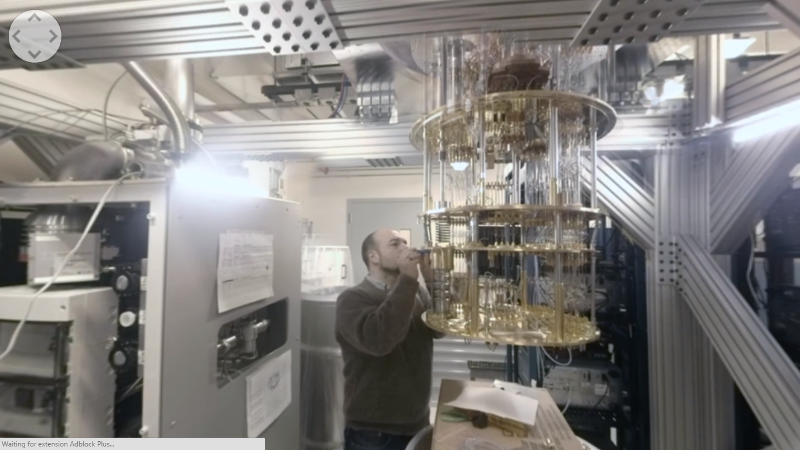

Tucked away at IBM Corp.’s T.J. Watson Research Center in a high-tech fridge cooled to almost absolute zero is an experimental chip that could help advance scientific inquiry further than any conventional supercomputer. It’s the culmination of a more than three-decade research effort that the technology giant launched shortly after Richard Feynman first proposed the idea of quantum computing in a 1982 paper.

From theory to practice

In his paper, Feynman theorized that the special laws governing subatomic particles could be exploited to surpass the capabilities of regular computers restricted by classical physics. The core premise of the technology has since been repeated countless times in science journals: Whereas a bit in a normal machine can only represent one of two values, 1 or 0, its quantum counterpart can also occupy a blend of the two, in which it is effectively set to 1 and 0 at the same time. This quirk is owed to a phenomenon known as quantum superposition, famously illustrated by the Schrödinger’s cat thought experiment.

A qubit may thus hold a combination of two values simultaneously, while a pair can hold a combination of four, three can hold eight and so on, with the count doubling for every qubit added. And if 100 such qubits were to be implemented on the same chip, as physicist Hans Robinson postulated in a 2005 New Scientist article, then the number of possible states would climb to more than a nonillion. That’s 1 followed by 30 zeros, which far exceeds the capacity of current supercomputers. As a result, a 100-qubit computer would be excellent at performing tasks that involve going through a large number of different mathematical combinations. Decrypting scrambled data and genome sequencing are two of the more common applications that come up whenever the topic is discussed.
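The scaling is easy to check with plain arithmetic: a register of n qubits is described by a superposition over 2^n classical basis states. The short Python sketch below only counts those states; the function name is illustrative and does not come from IBM or the article.

```python
def basis_state_count(num_qubits: int) -> int:
    """Return how many classical basis states an n-qubit register spans (2**n)."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return 2 ** num_qubits


if __name__ == "__main__":
    # Print the count for a few register sizes, including the
    # five-qubit and 100-qubit cases mentioned in the article.
    for n in (1, 2, 3, 5, 100):
        print(f"{n:>3} qubits -> {basis_state_count(n):,} basis states")
```

For 100 qubits the count is roughly 1.27 × 10^30, which is why simulating such a machine state by state is out of reach for conventional hardware.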

But 100-qubit computers exist only in the theoretical realm for the time being. And until recently, the scientific community didn’t know whether one could even be built in practice, given the incredible fragility of the few small quantum computers that have been made to date. That is, until IBM came along with its new chip.

Breaking the barrier

Dubbed Quantum Experience, the demo at the T.J. Watson Research Center manages to fit five qubits on a single processor by exploiting a breakthrough that IBM engineers announced last April. Their discovery provides a way of correcting the errors that form in semiconductors over time due to external factors such as heat and background radiation.

A qubit is vulnerable to two main types of glitches: bit-flips, wherein a 1 changes to a 0 or vice versa, and phase-flips, which interfere with the relationship between the two values when the qubit is in a superposed state. Theoretical physicists led by MIT’s Peter Shor have developed methods to fix both over the past two decades, but historically, only one of the errors could be corrected if they manifested in a qubit at the same time. As a result, a company that tried to build a five-qubit computer a few years ago would have seen its system overwhelmed by unchecked data corruption to the point of becoming unusable.
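The two error types can be illustrated with a toy model in which a single qubit is just a pair of amplitudes, one for 0 and one for 1. This is a minimal Python sketch of the errors themselves, not of IBM’s correction scheme; the function names and the tuple representation are assumptions made for illustration.

```python
import math

# A single-qubit state is modeled as (a, b): the amplitude of 0 and of 1.
# Measurement yields 0 with probability |a|^2 and 1 with probability |b|^2.


def bit_flip(state):
    """Swap the two amplitudes, so a 0 becomes a 1 and vice versa."""
    a, b = state
    return (b, a)


def phase_flip(state):
    """Negate the amplitude of 1, changing the relative sign between the values."""
    a, b = state
    return (a, -b)


def probabilities(state):
    """Return the measurement probabilities for 0 and 1."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)


zero = (1.0, 0.0)                              # definitely 0
plus = (1 / math.sqrt(2), 1 / math.sqrt(2))    # equal superposition of 0 and 1

if __name__ == "__main__":
    print("bit-flip on 0:       ", bit_flip(zero))
    print("phase-flip on plus:  ", phase_flip(plus))
    print("probabilities before:", probabilities(plus))
    print("probabilities after: ", probabilities(phase_flip(plus)))
```

A phase-flip leaves the measurement probabilities untouched, so it cannot be spotted by simply reading the qubit out, which is part of what makes catching both kinds of error at once difficult.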

By overcoming the problem, Quantum Experience paves a path for bigger and better quantum computers to be built in the coming decades. But there are still many more challenges that need to be addressed before IBM’s vision can be realized, not least of which is the task of developing suitable software for quantum computers. After all, a genome sequencing or encryption application created to run on conventional hardware isn’t equipped to exploit superposed states.

Cloud savvy

To ensure that there will be software to take advantage of quantum computers by the time they become a reality, IBM is making its chip accessible to academia through a free cloud service. The new algorithms that researchers develop using the processor may very well end up finding use in the quantum computers of tomorrow if and when they arrive, and not only in those developed by Big Blue. Alphabet Inc., Canada’s D-Wave Systems Inc. and a number of other companies are also working to hurry along the quantum computing revolution.
