INFRA

Computer graphics chip maker Nvidia Corp. is paving the way for a new breed of supercomputer with the announcement that its artificial intelligence and high-performance computing infrastructure will soon support Arm-based central processing units.

The company said early today that its CUDA-X AI and HPC libraries, graphics processing unit-accelerated AI frameworks and software development tools will all support Arm-based machines by the end of the year. It’s an important step because Arm-based supercomputers should be able to scale much further thanks to their better power efficiency, the company said.

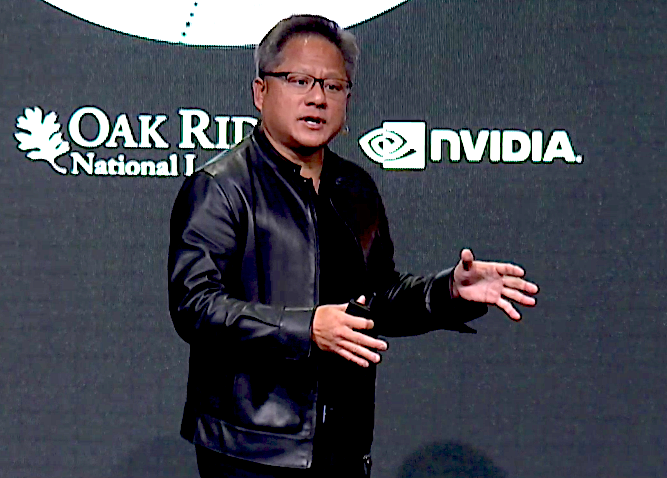

“As traditional compute scaling ends, power will limit all supercomputers,” said Nvidia Chief Executive Jensen Huang (pictured). “The combination of Nvidia’s CUDA-accelerated computing and Arm’s energy-efficient CPU architecture will give the HPC community a boost to exascale,” a term that refers to computers that can do a quintillion operations per second.

In a press briefing, Ian Buck, Nvidia’s general manager and vice president of accelerated computing, said the decision to support Arm CPUs came about because of broad and growing interest in the industry.

“What makes Arm interesting is it’s very open,” Buck said. “[It provides] flexibility for new ways to connect CPUs and GPUs and do more energy-efficient computing.”

Nvidia’s infrastructure is already used in 22 of the world’s top 25 most energy-efficient supercomputers, thanks to its support for x86 and POWER-based computer chips. With the additional support for Arm chips, Nvidia hopes to boost its presence in the HPC space to support even more advanced AI workloads, it said.

Nvidia is also looking to expand its supercomputing power into specific use cases, including training AI systems for self-driving cars.

To do that, today it unveiled what it says is the world’s 22nd fastest supercomputer, called the DGX SuperPOD, along with a reference architecture for companies looking to deploy it in their own or outside data centers.

Nvidia said the DGX SuperPOD is designed to provide the AI training infrastructure necessary for the deployment of massive fleets of autonomous vehicles. Built in just three weeks, the new machine is composed of 96 of Nvidia’s older DGX-2H supercomputers that are integrated using new data center interconnect technology, obtained by the company when it acquired Mellanox Inc. earlier this year.

The company said the DGX SuperPOD is designed to train neural networks for self-driving cars so they understand the “rules of the road,” and that it delivers 9.4 petaflops, or 9.4 quadrillion floating-point operations per second, a standard measure of processing capability. That’s enough power to cut the training time for ResNet-50, a popular image classification model, from 25 days to less than two minutes, officials said.

“AI leadership demands leadership in compute infrastructure,” Clement Farabet, vice president of AI infrastructure at Nvidia, said in a statement. “Few AI challenges are as demanding as training autonomous vehicles, which requires retraining neural networks tens of thousands of times to meet extreme accuracy needs.”

With reporting from Robert Hof
