AI

Google LLC has announced a set of artificial intelligence upgrades to its search engine that will enable users to find more types of information, as well as increase the accuracy of returned results.

Company executives detailed the enhancements at the company’s Search On virtual event on Thursday.

In cases when users want to find a particular song but can’t remember its name, they can now hum, whistle or sing parts of it and Google will try to identify the tune. That’s made possible by machine learning models that convert the audio into an abstract representation consisting of a series of numbers. This series is then compared to a database of number sequences generated from popular songs.
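The matching step described above can be illustrated with a small sketch. It is not Google's implementation: the article does not say how the number sequences are compared, so cosine similarity is an assumption made here for illustration, and the song titles, fingerprint values and function names are all hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length number sequences."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an all-zero sequence has no direction to compare
    return dot / (norm_a * norm_b)

def best_match(query, database):
    """Return the title whose stored sequence is most similar to the query."""
    return max(database, key=lambda title: cosine_similarity(query, database[title]))

# Hypothetical fingerprints; a real system would produce these with a
# machine learning model and use far longer sequences.
SONG_DATABASE = {
    "Song A": [0.9, 0.1, 0.3, 0.7],
    "Song B": [0.2, 0.8, 0.5, 0.1],
    "Song C": [0.4, 0.4, 0.9, 0.2],
}

# Stand-in for the sequence a model might derive from a hummed tune.
hummed = [0.85, 0.15, 0.35, 0.6]

print(best_match(hummed, SONG_DATABASE))  # prints "Song A"
```

A production system would presumably search millions of songs with an approximate nearest-neighbor index rather than a linear scan, but the idea of comparing one number sequence against a database of others is the same.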

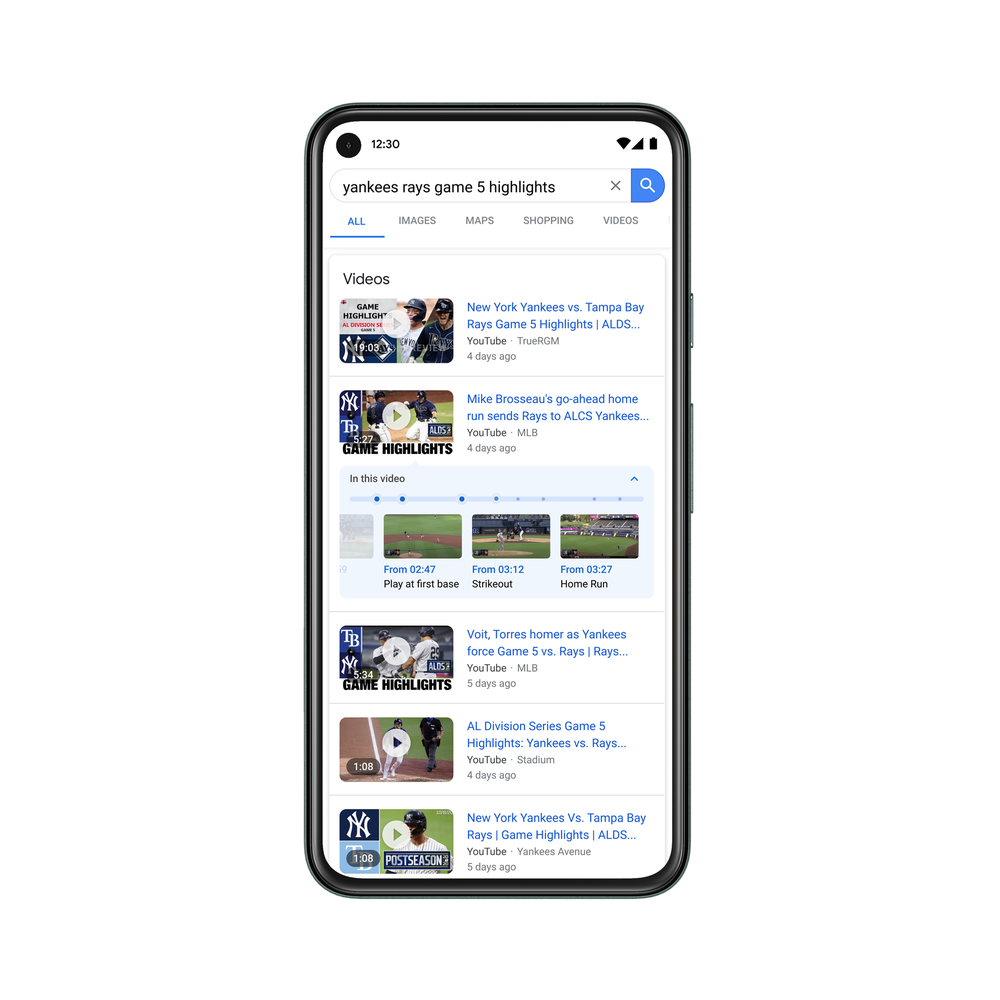

Looking for videos will become easier as well. Google’s algorithms can now identify key moments in clips indexed by its search engine, tag them and incorporate them into search results. For instance, if a user is looking for information about a step in a recipe, Google could not only surface a relevant culinary video but also flag the specific part of the clip during which the given step is discussed.

“We’ve started testing this technology this year, and by the end of 2020 we expect that 10 percent of searches on Google will use this new technology,” detailed Google Head of Search Prabhakar Raghavan.

Beyond making it easier to browse media content, Google wants to simplify the task of looking up statistics such as economic growth and smartphone adoption. Since 2018, the search giant has been working on a repository of statistics called the Data Commons Project, which includes billions of data points from sources such as the U.S. Census Bureau. Now, Google will start surfacing statistics from the repository in response to user queries.

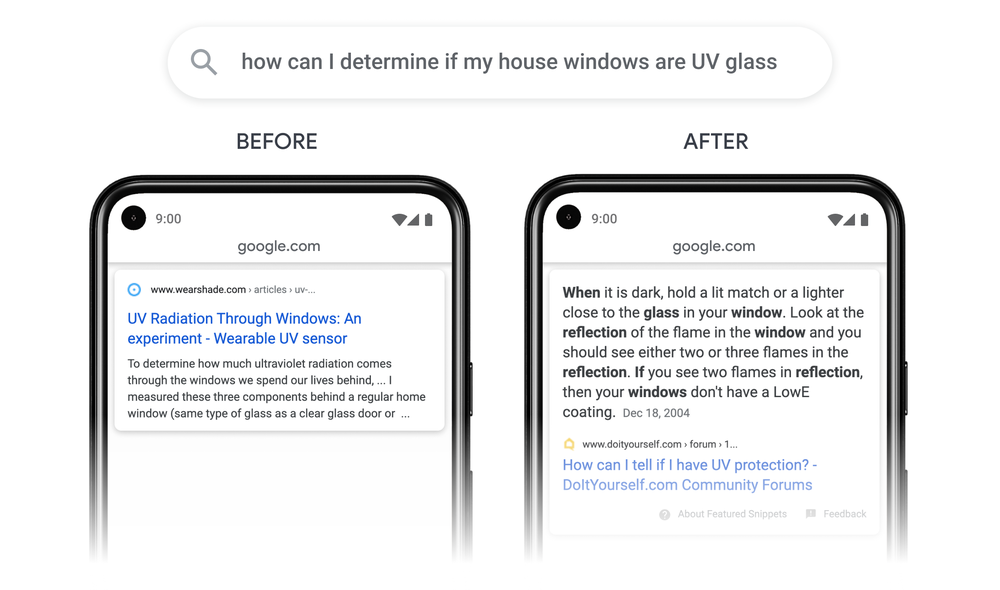

Google also announced more general-purpose enhancements at Search On that focus on boosting the search experience as a whole rather than specific types of queries. Google’s built-in spellchecker, which detects when a query might be misspelled and suggests alternative wording, is receiving what the company describes as the biggest improvement in the past five years. Another upgrade will enable the search engine to return the specific passage from a web page that it deems to be most relevant to a user’s request.

Separately, the company is implementing new neural networks optimized to identify query subtopics. If, for instance, a user searches the term “smartphone accessories,” these neural networks would generate a list of subtopics that might include wireless chargers, headphones and phone cases. With the help of the technology, Google plans to incorporate a more diverse mix of subtopics into search results, increasing the chance that users find what they’re looking for.

Google executives detailed a number of other product enhancements at the event as well, including improvements to business listings and the Google Lens app. Lens lets users aim their phone camera at an object to receive more information about it.
