BIG DATA

SambaNova Systems Inc., an artificial intelligence hardware startup that has raised more than $465 million in venture funding, today introduced its long-anticipated computing platform optimized for AI workloads.

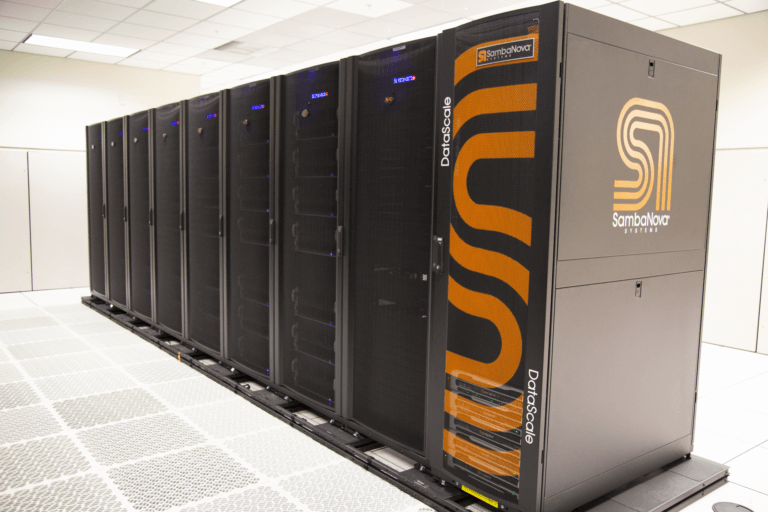

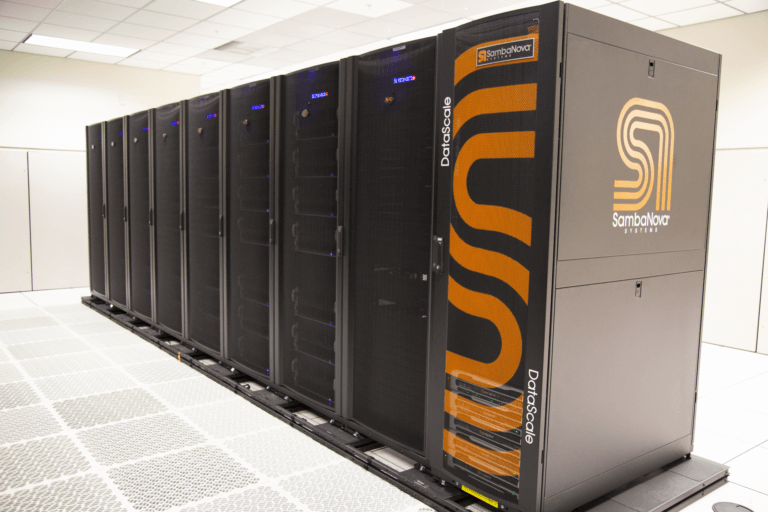

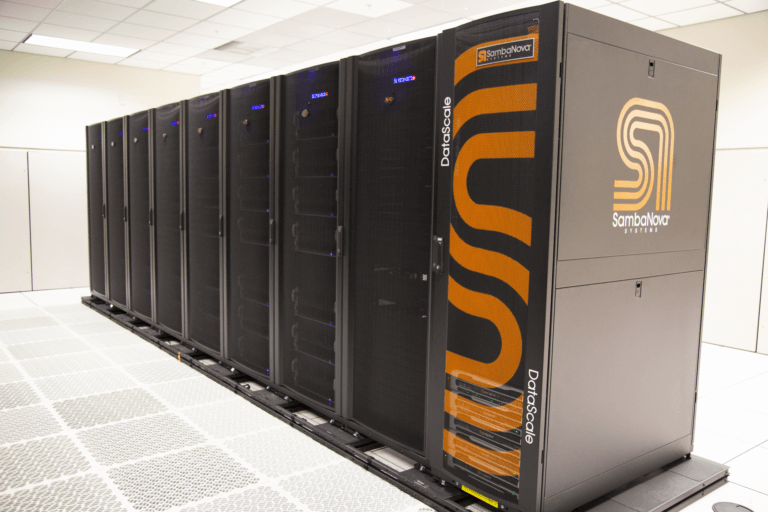

SambaNova Systems DataScale employs custom seven-nanometer chips that the company says are better attuned to machine learning and deep learning processes than the general-purpose microprocessors and graphics processing units widely used for such tasks today.

The system’s Reconfigurable Dataflow Architecture works with an integrated open-source software stack called SambaFlow that “will configure the right flow to optimally run machine learning models,” said Chief Executive Rodrigo Liang. “We minimize the need to interface to memory and take away one of the largest bottlenecks, which is the interconnect between chips and memory.” SambaFlow also eliminates the complexity of scaling workloads across multiple processors, the company said.

Conventional central processing units are “about add/subtract/multiply/divide,” Liang said. “With machine learning you need to optimize for data and figure out what output flows into what input.” The company’s architecture can attach as much as 12 terabytes of memory directly to the processor and avoids the need to employ outboard accelerators such as GPUs.

SambaNova didn’t specify pricing for the computers, but judging by the large number of government labs it claims to have in early deployment, potential customers don’t have to ask. Each system is customer-configured and delivered in a quarter-, half- or full-rack configuration.

The company is also introducing an “as-a-service” composable infrastructure model in which SambaNova installs and maintains the system on the customer’s site but charges only for usage, with prices starting at $10,000 per month. The company has quietly put in place “a world-class customer engineering organization for pre- and post-sale technical support” on six continents, said Chief Product Officer Marshall Choy.

SambaNova said its system is designed to get customers up and running quickly, with installation times of as little as 45 minutes. It’s targeting existing workloads created with data science libraries such as PyTorch and TensorFlow in applications that include healthcare research, natural language processing and recommendation systems.

“You can go to Hugging Face, which has thousands of Transformers, download open-source models and run them in seconds,” Liang said. “We tap into the ecosystem where people are innovating above TensorFlow and PyTorch and not below.” Transformers are neural network models that are well-suited for natural language processing.

“We’re going to take your standard models and run them many times faster and at scale or we’ll take the same model and consume significantly larger data sets,” Liang said. Based on comparisons to Nvidia Corp.’s DGX A100, a purpose-built AI computer, SambaNova says it can run the deep learning recommendation models that Facebook Inc. released to open source last year seven times faster with higher accuracy rates. BERT-Large, a model for natural language processing developed by Google LLC, runs 1.4 times faster.

The company has a blue-chip investor pedigree. It was one of 14 AI-related startups that split a $117 million investment tranche by Intel Corp. early last year. In February it scored a massive $250 million Series C funding round led by a group of high-profile venture investors.

