AI

Gimlet Labs Inc. said today it has raised $80 million in early-stage funding to solve a bottleneck that’s holding back artificial intelligence inference.

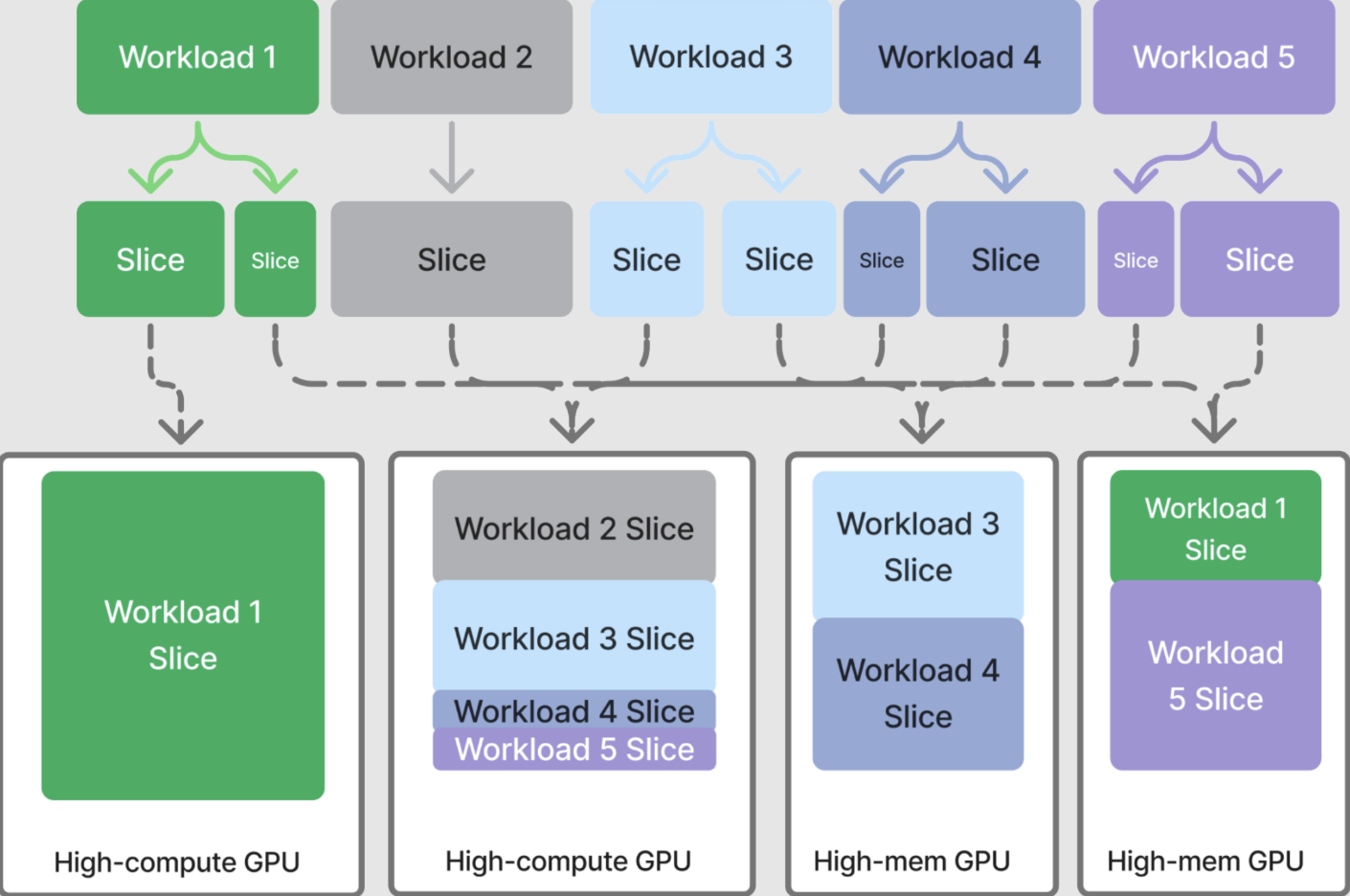

The startup, which has raised $92 million in total, has created what it calls the world’s first and only “multi-silicon inference cloud.” Inference is the process of using a trained machine learning model to make predictions or decisions on new, unseen data, turning AI into action. Gimlet’s cloud differs from standard inference clouds because it can run a single AI workload across several kinds of chips at the same time. For instance, an AI application’s work can be split across traditional central processing units, high-performance graphics processing units and other kinds of processors.

Menlo Ventures partner Tim Tully explained in a blog post why multi-silicon inference is so useful. When an autonomous AI agent is assigned a task, he wrote, it may “chain together dozens of model calls, retrieval steps and tool invocations across non-linear branching logic.” Different steps in this chain are best performed by different hardware, because each is limited by a different resource: prefill is compute-bound, decode is memory-bound and tool calls are network-bound.

“No single chip can handle all three efficiently. Instead, the answer is heterogeneous,” Tully said.

Compute-intensive batch inference is best done on GPUs, while latency-sensitive workloads can benefit from the exceptional speed of processors built around large amounts of on-chip static random-access memory, such as those from Groq, Cerebras and d-Matrix. Tasks such as orchestration and tool use, on the other hand, generally run better on CPUs. “The multi-silicon fleet is ready – it’s just missing the software layer to make it work,” Tully said.
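To make Tully’s argument concrete, here is a minimal sketch of the routing idea: each step of an agent’s task is labeled with its bottleneck, and the bottleneck picks a hardware class. This is not Gimlet Labs’ software or API. The names `Bottleneck`, `Step`, `assign` and both lookup tables are hypothetical, and sending memory-bound decode to SRAM-heavy accelerators is an assumption drawn loosely from the article’s description of latency-sensitive workloads.

```python
from dataclasses import dataclass
from enum import Enum


class Bottleneck(Enum):
    COMPUTE = "compute"
    MEMORY = "memory"
    NETWORK = "network"


# Hypothetical step-kind -> bottleneck table, following Tully's description.
STEP_BOTTLENECK = {
    "prefill": Bottleneck.COMPUTE,
    "decode": Bottleneck.MEMORY,
    "tool_call": Bottleneck.NETWORK,
}

# Hypothetical bottleneck -> hardware class table (an assumption, not Gimlet's policy).
HARDWARE_FOR = {
    Bottleneck.COMPUTE: "GPU",
    Bottleneck.MEMORY: "SRAM-heavy accelerator",
    Bottleneck.NETWORK: "CPU",
}


@dataclass
class Step:
    name: str
    kind: str  # one of the keys in STEP_BOTTLENECK


def assign(steps):
    """Return (step name, hardware class) pairs, one per step, in order."""
    return [(s.name, HARDWARE_FOR[STEP_BOTTLENECK[s.kind]]) for s in steps]


if __name__ == "__main__":
    chain = [
        Step("read prompt", "prefill"),
        Step("generate plan", "decode"),
        Step("search web", "tool_call"),
    ]
    for name, hw in assign(chain):
        print(f"{name}: {hw}")
```

A real scheduler would also weigh cost, energy, queue depth and data-transfer overhead between chips. The static lookup tables here only illustrate the heterogeneous principle.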

By splitting up AI tasks across multiple processors in this way, Gimlet Labs says, it can dramatically improve efficiency and reduce the time chips spend sitting idle, waiting for instructions. It reckons that it can speed up inference workloads by anywhere from three to 10 times for the same cost and power. It can even slice up AI models themselves, so that different parts of them run on different chips.

Gimlet Labs founder and Chief Executive Zain Asgar told TechCrunch in an interview that existing hardware generally runs at only 15% to 30% efficiency. “You’re wasting hundreds of billions of dollars because you’re just leaving idle resources,” he said. “Our goal was basically to try to figure out how you can get AI workloads to be 10 times more efficient than ever, today.”

Constellation Research analyst Holger Mueller said Gimlet Labs has essentially built a director that assigns each AI workload to the most appropriate chip architecture so it can run as efficiently as possible. “When we talk about the ‘right’ architecture, that can mean the best hardware in terms of cost, energy, speed or other parameters, whatever the user requires,” the analyst said. “This is just the kind of innovation data center operators want to see, and no surprise to see it come from the startup field. Gimlet’s challenge now is to win the confidence of users and show it’s able to run their AI workloads faster, cheaper and with less energy, without breaking anything.”

The startup’s software is not aimed at rank-and-file developers. Instead, Gimlet Labs is going after the big boys who run the largest AI model labs and the most expansive data centers. Its partners include some of the biggest chipmakers, including Nvidia Corp., Advanced Micro Devices Inc., Intel Corp., Arm Holdings Plc and Cerebras Systems Inc. Asgar told TechCrunch that the company is already generating eight-figure revenue despite launching its platform only in October. Over the last four months it has doubled its customer base, with clients including a major model maker and an “extremely large” cloud computing company, he said.

The Series A round was led by Menlo Ventures, with participation from Factory, which led the company’s seed round, as well as Eclipse, Prosperity7 and Triatomic.

Today’s funding round is about giving Gimlet Labs the resources it needs to scale and make high-speed, efficient multichip inference the norm. To that end, the startup plans to expand its team and grow its inference cloud to meet rapidly growing demand for faster inference.

Founded by tech visionaries John Furrier and Dave Vellante, SiliconANGLE Media has built a dynamic ecosystem of industry-leading digital media brands that reach 15+ million elite tech professionals. Our new proprietary theCUBE AI Video Cloud is breaking ground in audience interaction, leveraging theCUBEai.com neural network to help technology companies make data-driven decisions and stay at the forefront of industry conversations.