INFRA

For a while, it was thought that general-purpose central processing units had little role to play in the artificial intelligence revolution, but Nvidia Corp. begs to differ.

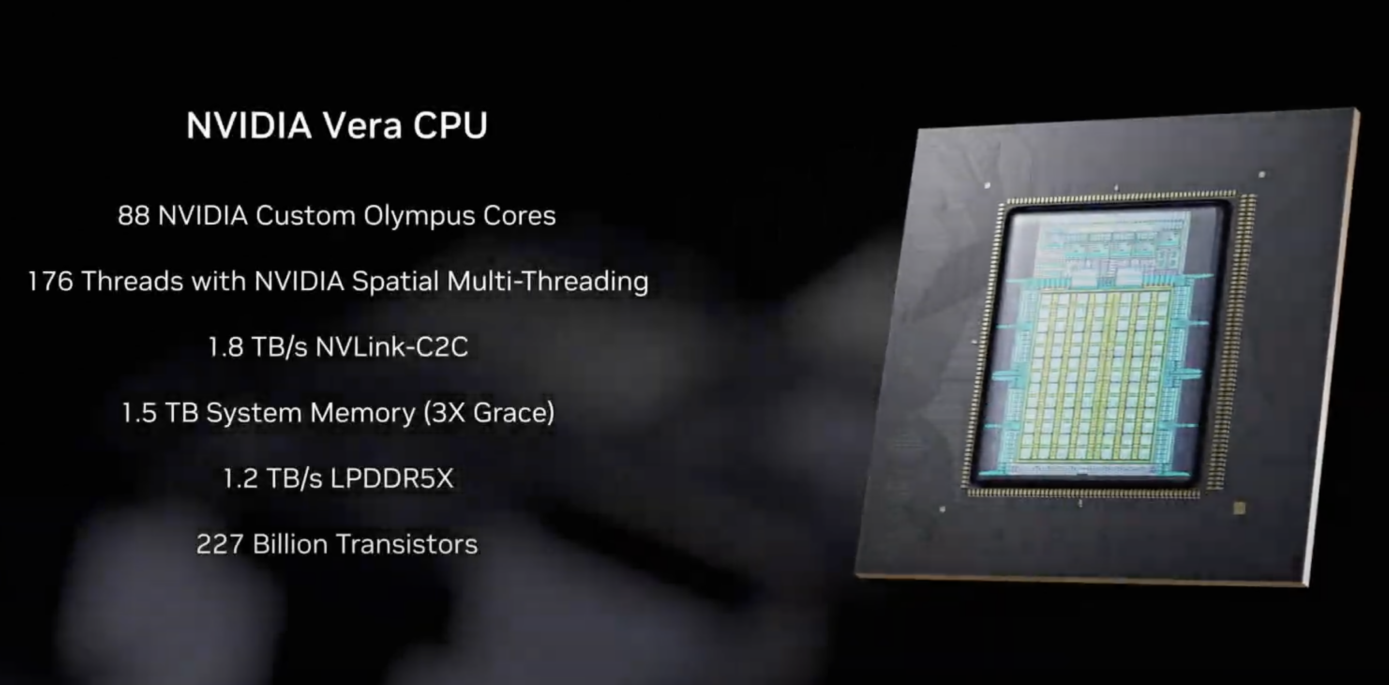

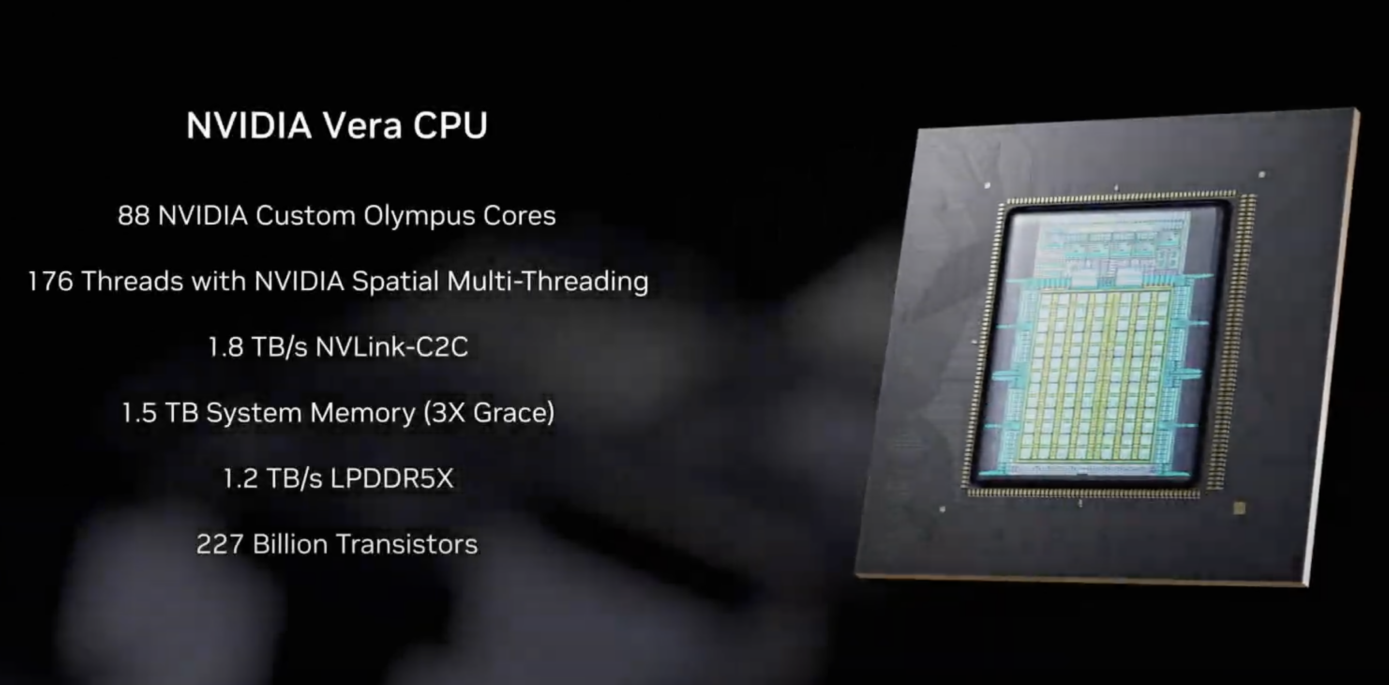

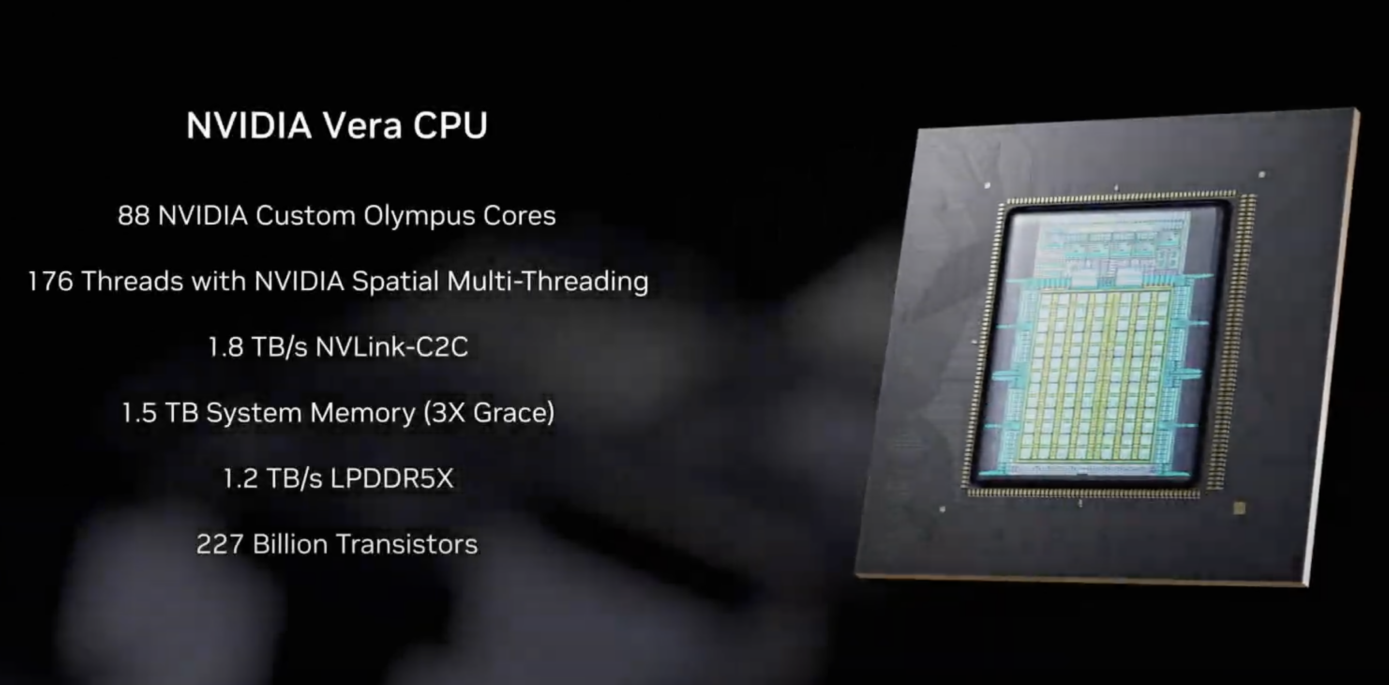

At its GTC 2026 developer conference today, Nvidia announced the all-new Vera CPU, said to be the first chip of its kind designed specifically for agentic AI workloads and reinforcement learning.

As AI evolves from simple chatbots toward autonomous AI agents that can reason, use third-party software tools, and write and execute code on behalf of humans, the underlying infrastructure requirements are changing. According to Nvidia, AI agents need more than just the sheer power and performance of graphics processing units. They also require orchestration, data movement and validation logic, which are tasks best performed by traditional CPUs.

That’s why Nvidia developed the Vera CPU, which it says is 50% faster and twice as efficient as traditional x86-based CPUs when handling these complex operations.

“Vera is arriving at a turning point for AI,” said Nvidia Chief Executive Jensen Huang. “The CPU is no longer simply supporting the model; it’s driving it. With breakthrough performance and energy efficiency, Vera unlocks AI systems that think faster and scale further.”

Vera is the successor to Nvidia’s Grace CPU, and it has been designed to slot inside the vast “AI factories” that power today’s most powerful large language models. The new chip features 88 custom-designed Olympus cores that utilize Nvidia’s Spatial Multithreading technology to run two tasks simultaneously with more predictable performance than its predecessor. That’s a must-have for cloud infrastructure providers that need to run thousands of AI agents at once.

To feed these cores, Nvidia has equipped the Vera CPU with a new low-power memory subsystem that uses LPDDR5X memory. It delivers 1.2 terabytes per second of bandwidth, which Nvidia says is about twice that of general-purpose CPUs, while using only half the power.

Nvidia said the Vera chips excel at AI “thinking” tasks. By that, it’s referring to the compilers, analytics pipelines and orchestration services that tell GPUs what they should be doing next. The GPUs remain the workhorse of AI models, while the CPUs perform the management tasks. Early adopters have already reported substantial performance gains, with Vera delivering 5.5 times lower latency when running Apache Kafka workloads, according to the data streaming company Redpanda Data Inc.

Nvidia isn’t just selling bags of the new chips. It’s also offering a new Vera CPU rack system that integrates 256 liquid-cooled chips to tackle the biggest AI workloads. According to Nvidia, the rack can sustain more than 22,500 concurrent CPU environments, which means AI factories will be able to scale agentic services to tens of thousands of instances within a remarkably small physical footprint.
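The rack figure lines up closely with the chip's core count. The short Python sketch below multiplies the 256 chips per rack by the 88 cores per chip quoted earlier in this article. Mapping one CPU environment to one core is an assumption made here for illustration, not something Nvidia has stated, and the function names are hypothetical.

```python
# Figures quoted in the article.
CHIPS_PER_RACK = 256
CORES_PER_CHIP = 88
QUOTED_ENVIRONMENTS = 22_500  # "more than 22,500 concurrent CPU environments"


def rack_core_count(chips: int = CHIPS_PER_RACK, cores: int = CORES_PER_CHIP) -> int:
    """Total physical cores in one rack."""
    return chips * cores


def environments_per_chip(
    environments: int = QUOTED_ENVIRONMENTS, chips: int = CHIPS_PER_RACK
) -> float:
    """Average number of quoted environments hosted by each chip."""
    return environments / chips


if __name__ == "__main__":
    # Assumption (ours, not Nvidia's): one environment per core.
    print(f"Cores per rack: {rack_core_count():,}")
    print(f"Environments per chip: {environments_per_chip():.1f}")
```

Under that assumption, a rack holds 22,528 cores, which is consistent with the "more than 22,500" figure and works out to roughly one environment per core.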

The Vera CPUs are also integrated in Nvidia’s new NVL72 platform, a liquid-cooled rack-scale system made up of 72 Rubin GPUs and 36 Vera CPUs connected over its high-speed NVLink 6 interconnects. The company said the platform can provide up to 1.8 terabytes per second of coherent bandwidth between the CPU and GPUs, which is about seven times more than the latest PCIe Gen 6 standard. It’s fast enough that Nvidia promises the CPU will no longer be a bottleneck that causes GPUs to sit idle, waiting for data to perform training and inference tasks.
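The "about seven times" comparison can be sanity-checked with a few lines of Python. The sketch below assumes the baseline is a 16-lane PCIe Gen 6 link at 64 gigatransfers per second per lane, counted in both directions at the raw signaling rate, with 1 TB taken as 1,000 GB. The article does not say which PCIe configuration Nvidia compared against, so the baseline and the function name are assumptions.

```python
# Figure quoted in the article, in GB/s (1 TB taken as 1,000 GB).
NVLINK_CPU_GPU_GB_PER_S = 1800

# Assumed baseline: PCIe Gen 6 x16, raw rate, ignoring protocol overhead.
PCIE6_GT_PER_S_PER_LANE = 64  # one transfer carries one bit per lane
PCIE_LANES = 16


def pcie6_x16_bidirectional_gb_per_s() -> float:
    """Raw PCIe Gen 6 x16 bandwidth in GB/s, summed over both directions."""
    per_direction = PCIE6_GT_PER_S_PER_LANE * PCIE_LANES / 8  # bits to bytes
    return per_direction * 2


def nvlink_to_pcie_ratio() -> float:
    """How many times the quoted NVLink figure exceeds the assumed baseline."""
    return NVLINK_CPU_GPU_GB_PER_S / pcie6_x16_bidirectional_gb_per_s()


if __name__ == "__main__":
    print(f"PCIe Gen 6 x16 (bidirectional): {pcie6_x16_bidirectional_gb_per_s():.0f} GB/s")
    print(f"NVLink vs. PCIe ratio: {nvlink_to_pcie_ratio():.2f}x")
```

With those assumptions the baseline comes to 256 GB/s and the ratio to just over 7, in line with the figure Nvidia cites. A different lane count or a per-direction comparison would change the result.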

Nvidia is betting on broad industry adoption of the Vera CPU platform, and it has an extensive list of partners at launch. Hyperscale data center operators including Oracle Corp., Meta Platforms Inc. and Alibaba Group Holding Ltd. will be among the first to deploy the Vera CPU rack-scale systems, together with “neocloud” platforms such as CoreWeave Inc. and Nebius Group NV.

Meanwhile, Nvidia has a long list of hardware providers backing it too. These include Dell Technologies Inc., Hewlett Packard Enterprise Co., Supermicro Computer Inc. and Lenovo Group Ltd., which are all planning to launch Vera CPU-based servers in the coming months. These will range from specialized HGX Rubin systems for GPU-accelerated AI workloads, to dual-socket configurations for general data processing.

Nvidia said the Vera CPUs are in full production now, with the first Vera-based systems set to become available in the second half of the year.
