INFRA

Nvidia Corp. kicked off its annual GTC 2026 developer conference in San Jose today by announcing a number of new chips and computing platforms aimed at data center operators. Although most of the attention was focused on Rubin, Nvidia’s newest graphics processing unit, it’s the all-new Groq 3 language processing unit that could have the biggest impact.

The chipmaker announced in December that it had paid to license Groq Inc.’s technology and hire its founder Jonathan Ross and President Sunny Madra as part of a $20 billion deal. Just three months later, the first chip resulting from that deal is on the table.

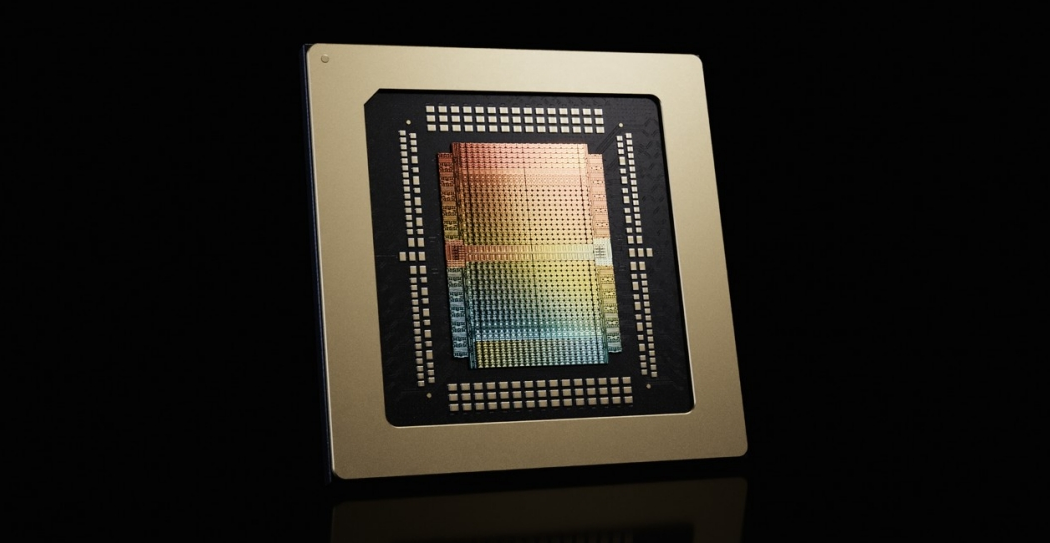

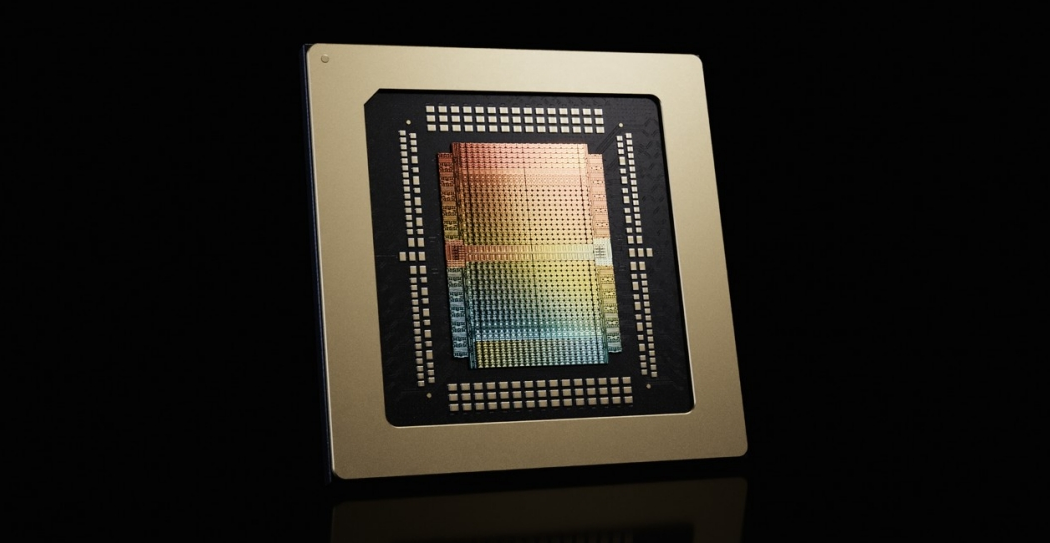

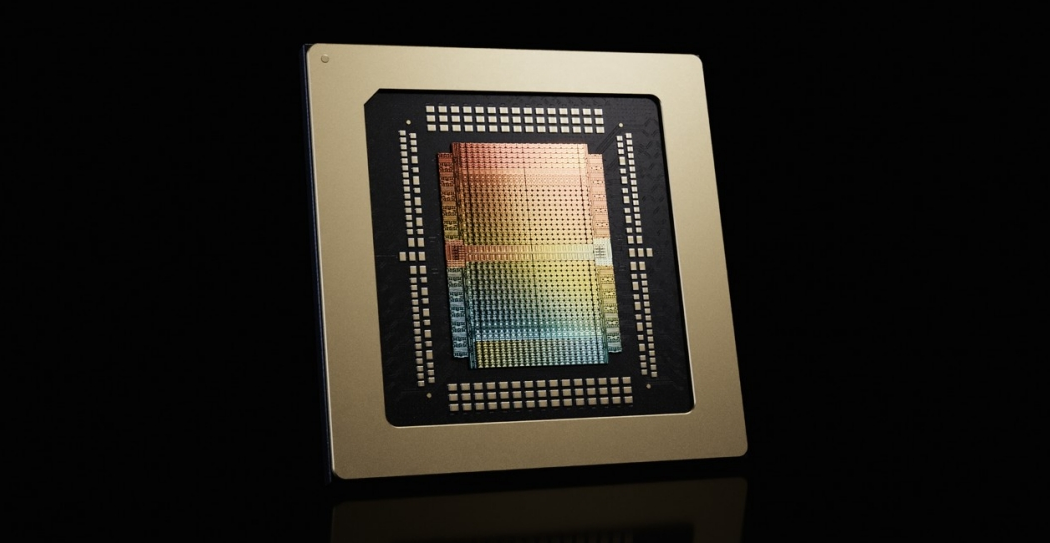

The startup, not to be confused with xAI Corp.’s large language model Grok, has developed processors that focus on artificial intelligence inference, or running AI models, rather than training them. That’s different from Nvidia’s GPUs, which are considered to be general-purpose chips because they can both train and run models.

Ian Buck, Nvidia’s vice president of hyperscale and high-performance computing, said that though the company’s GPUs offer more memory capacity, Groq 3’s memory is much faster. The chip is designed to support low-latency workloads and the large context demands of agentic systems that automate work on behalf of humans.

The chip is being made available in dedicated Groq 3 LPX server racks, each consisting of 256 Groq 3 LPUs. A rack offers 128 gigabytes of static random access memory and 40 petabytes per second of bandwidth, which Nvidia says lets it accelerate inference processing far beyond what any GPU is capable of.
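To put the rack-level figures in per-chip terms, the short Python sketch below divides the quoted totals by the 256 LPUs in a rack. It assumes the memory and bandwidth are spread evenly across the chips and uses decimal units (1 GB = 1,000 MB); neither assumption comes from Nvidia, and the names are illustrative only.

```python
# Rack-level figures quoted in the article for one Groq 3 LPX rack.
LPUS_PER_RACK = 256
RACK_MEMORY_GB = 128
RACK_BANDWIDTH_PB_S = 40


def per_lpu_share(rack_total: float, lpus: int = LPUS_PER_RACK) -> float:
    """Split a rack-level total evenly across LPUs.

    Even division is an assumption for illustration, not a published spec.
    """
    return rack_total / lpus


# Decimal units assumed: 1 GB = 1000 MB and 1 PB/s = 1000 TB/s.
memory_per_lpu_mb = per_lpu_share(RACK_MEMORY_GB) * 1000
bandwidth_per_lpu_tb_s = per_lpu_share(RACK_BANDWIDTH_PB_S) * 1000

print(f"Memory per LPU: {memory_per_lpu_mb:.0f} MB")
print(f"Bandwidth per LPU: {bandwidth_per_lpu_tb_s:.2f} TB/s")
```

Under those assumptions, each LPU would carry roughly 500 megabytes of memory and about 156 terabytes per second of bandwidth, a small but very fast pool, which is consistent with Buck's point about capacity versus speed.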

Groq 3 LPX is designed to be used in tandem with Nvidia’s new Vera Rubin NVL72 rack, which integrates both Rubin GPUs and the company’s new Vera central processing units. Nvidia explained that Groq 3 LPX is optimized to run trillion-parameter models with million-token context windows, pairing with Vera Rubin to maximize efficiency across power, memory and compute. The two systems combine to deliver 35 times higher throughput per megawatt of power and 10 times greater revenue opportunity, Buck said.

Nvidia sees Groq 3 acting as a kind of coprocessor for the Rubin GPUs, boosting performance at “every layer of the AI model on every token,” Buck said. This puts Nvidia in a position to handle multi-agent systems powered by models with trillions of parameters and context windows that span millions of tokens.

Buck said this is necessary because we’re moving toward a reality where multi-agent systems are continually communicating with one another, which means they need to be much more responsive. Though 100 tokens per second might seem reasonable for humans, such speeds would seem glacial for agentic systems, he added. That’s why Nvidia is aiming to support throughputs of up to 1,500 tokens per second for agentic communications.
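The gap between the two rates Buck cited is easier to see as time per token. The Python sketch below is a back-of-the-envelope calculation only: it assumes a steady generation rate, and the 3,000-token message length is a hypothetical figure chosen for illustration, not one from Nvidia.

```python
def ms_per_token(tokens_per_second: float) -> float:
    """Average time to produce one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for_message(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream a whole message at a steady generation rate."""
    return num_tokens / tokens_per_second


HUMAN_PACED_RATE = 100   # tokens per second, cited as reasonable for humans
AGENTIC_RATE = 1500      # tokens per second, Nvidia's stated target
MESSAGE_TOKENS = 3000    # hypothetical agent-to-agent message length

for rate in (HUMAN_PACED_RATE, AGENTIC_RATE):
    print(
        f"{rate} tokens/s: {ms_per_token(rate):.2f} ms per token, "
        f"{seconds_for_message(MESSAGE_TOKENS, rate):.1f} s per message"
    )
```

At 100 tokens per second, each token takes 10 milliseconds; at 1,500 it takes about two-thirds of a millisecond. That 15-fold difference compounds quickly when agents exchange many messages in sequence.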

Groq 3 LPX and Vera Rubin NVL72 are two of five massive new server racks announced by the company today. The other three are a dedicated Vera CPU rack, a new storage rack system called BlueField-4 STX, which enhances storage performance compared to standard racks, and the Spectrum-6 SPX networking rack.

The new racks should help Nvidia keep expanding its data center footprint and growing its revenue at a time when demand for compute performance continues to increase. In fiscal 2026, Nvidia’s data center revenue soared to $193.5 billion, up from $116.2 billion in the previous year. With hyperscale cloud providers such as Amazon Web Services Inc., Google LLC, Microsoft Corp. and Meta Platforms Inc. set to spend a combined $650 billion on data center buildouts this year, Nvidia is doing everything it can to claim a massive slice of that pie.
