CLOUD

As information technology organizations are increasingly called upon to contribute to the bottom line through digital business, underlying infrastructure must evolve from one that supports known workloads to one that can handle unpredictable workloads reliably and at scale.

That’s the conclusion of Wikibon Research Director Peter Burris (right) in a new research brief. The full report is available to Premium subscribers of Wikibon, a sister company of SiliconANGLE. Burris calls this new architecture “plastic infrastructure,” an evolution of the elastic infrastructure that characterizes cloud-based operations.

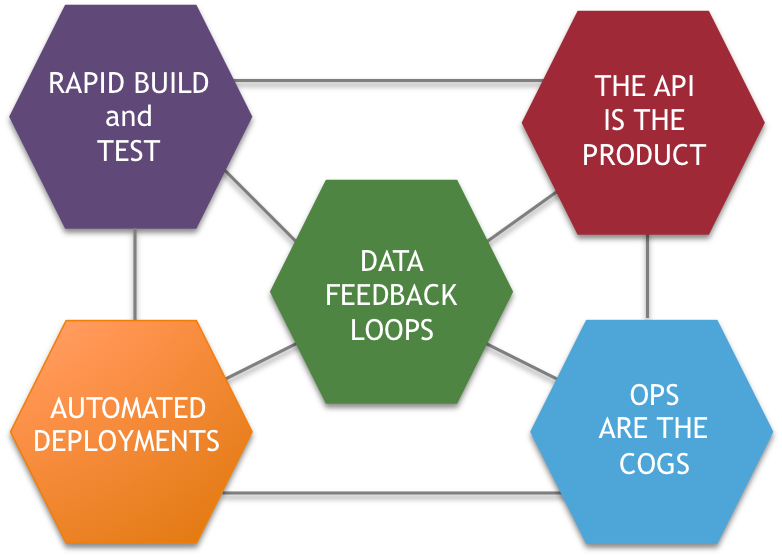

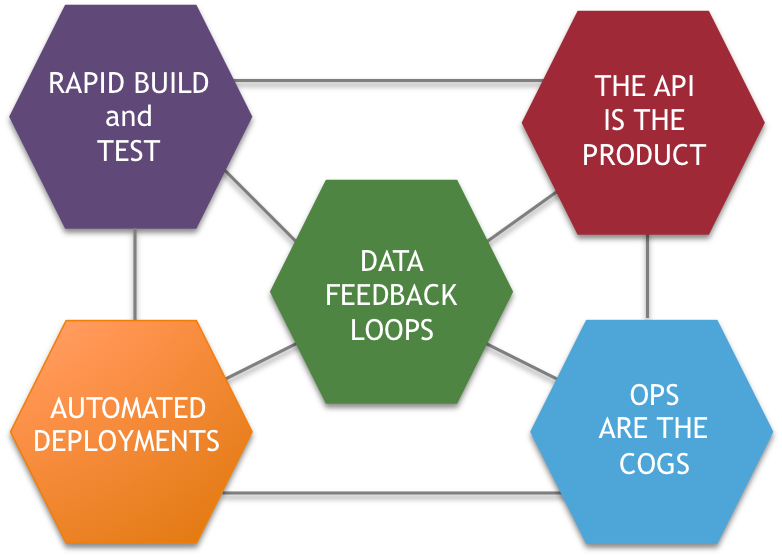

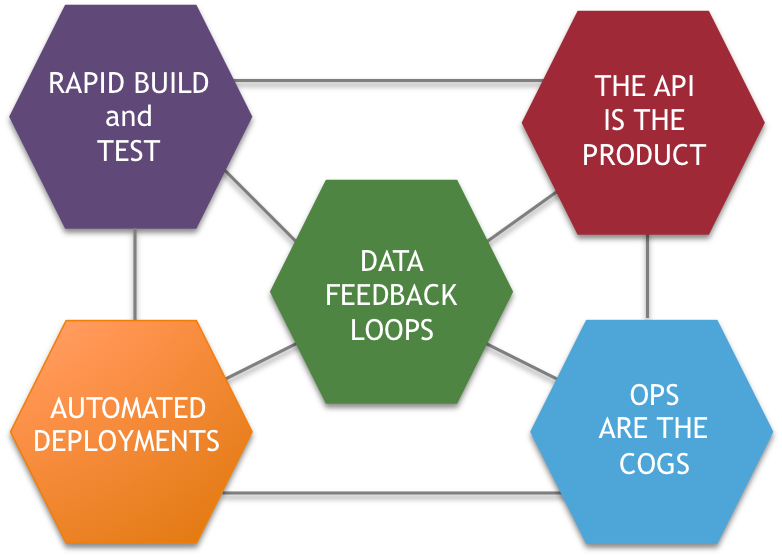

Plastic infrastructure is not only faster and cheaper than traditional centralized systems, but also handles wide variances in data location and uses agile development to speed the delivery of new customer-facing systems. Customer interactions in a fully digital world are inherently unpredictable, Burris writes. With the arrival of what he calls “the internet of things and people,” or IoT&P, increasing amounts of data will move to the edge of the network. Burris outlines five “pillars of a modern digital business platform,” including rapid development, use of application program interfaces and high degrees of automation (above).

But moving all this data into a central cloud is slow and unreliable. In addition, new applications such as machine learning and artificial intelligence require huge amounts of data, even if most of it is never used. Storing and moving that data with traditional disk arrays and one-to-many networks is impractical. Flash storage technology and mesh networks, in which all devices are indirectly connected to each other, are essential to accommodate these new capacity demands.

Burris lays out four technologies that will improve infrastructure plasticity. The first is flexible network architectures such as “leaf and spine,” which reduce bottlenecks because payloads have fewer switches to traverse. The second is better technology for moving large amounts of data in bulk, as embodied in Wandisco Inc.’s Active Transactional Data Replication.

The third is blockchain, the distributed database that secures data as it traverses the network. The fourth is a new approach to infrastructure design that reduces the need to move all data to a single location and encourages more processing at the edge.
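To make the leaf-and-spine point concrete, here is a minimal sketch that counts how many switches a payload crosses in the worst case under two toy topologies. It is not taken from the Wikibon report: the function names, the switch counts and the simplified three-tier layout (one core switch, one aggregation switch per pod) are all assumptions chosen for illustration.

```python
from collections import deque
from itertools import combinations


def add_link(graph, a, b):
    """Record an undirected link between two switches."""
    graph.setdefault(a, set()).add(b)
    graph.setdefault(b, set()).add(a)


def leaf_spine(num_spines, num_leaves):
    """Hypothetical leaf-and-spine fabric: every leaf links to every spine."""
    graph = {}
    for s in range(num_spines):
        for l in range(num_leaves):
            add_link(graph, f"spine{s}", f"leaf{l}")
    return graph, [f"leaf{l}" for l in range(num_leaves)]


def three_tier(num_pods, access_per_pod):
    """Hypothetical three-tier tree: core -> aggregation -> access switches."""
    graph = {}
    edge_switches = []
    for p in range(num_pods):
        add_link(graph, "core", f"agg{p}")
        for a in range(access_per_pod):
            name = f"access{p}-{a}"
            add_link(graph, f"agg{p}", name)
            edge_switches.append(name)
    return graph, edge_switches


def switches_on_path(graph, src, dst):
    """Breadth-first search; counts switches on the shortest path, ends included."""
    dist = {src: 1}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return dist[node]
        for nxt in graph[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    raise ValueError(f"{dst} is unreachable from {src}")


def worst_case(graph, edge_switches):
    """Largest switch count between any two server-facing switches."""
    return max(
        switches_on_path(graph, a, b) for a, b in combinations(edge_switches, 2)
    )


if __name__ == "__main__":
    print("leaf and spine:", worst_case(*leaf_spine(num_spines=2, num_leaves=4)))
    print("three tier:   ", worst_case(*three_tier(num_pods=2, access_per_pod=2)))
```

In this toy setup, traffic between any two leaf switches crosses three switches (leaf, spine, leaf), while traffic between pods in the three-tier tree crosses five (access, aggregation, core, aggregation, access). Real fabrics vary in size and redundancy, so the numbers illustrate only the general shape of the argument.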

Burris is particularly bullish on the potential of DevOps to make software development faster and more modular. Containers and the tools to manage them “simplify the conversation between developer and operations personnel and help bridge the remaining technology gap between software-defined infrastructure and application development,” he writes. These technologies “are more likely than previous technologies, like [service-oriented architecture], to result in development of highly shareable, repeatable and infrastructure-agnostic application components.”

DevOps has been creeping into the enterprise at a disappointingly slow pace, but a more agile approach to development will be essential to delivering applications quickly. The arrival of improved modeling tools, analytics engines for acting on log file data and better data catalogs that can track data assets independently of application silos should help move DevOps into the mainstream.

In short, IT organizations must think outside the box about how they will adapt to fully digital interactions. “Change to digital business infrastructure increasingly is catalyzed by adjustments to data scope and not process scale,” Burris concludes. “The notion of plastic infrastructure complements established technologies and practices for elastic infrastructure.”
