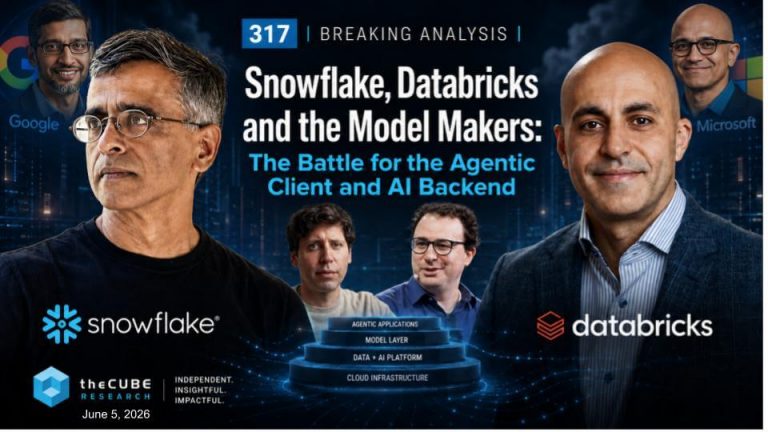

Snowflake, Databricks and the model makers: The battle for the agentic client and AI back end

Agentic artificial intelligence is being misread as a set of separate battles – for example, Snowflake Inc. versus Databricks Inc., copilots versus agents, model makers versus application vendors. We believe the larger fight is converging ...