POLICY

Twitter Inc. announced Tuesday that it will weed out deceptive, doctored content, joining other social media behemoths that have recently said the same.

The company said in a blog post that for the past several months it has been collecting feedback from users and researchers on the threat that “synthetic and manipulated media” poses. Some 70% of respondents said taking no action was unacceptable, while 90% said seeing such content with a warning label would be acceptable.

Around half of the respondents didn’t much like the idea of Twitter just taking content down, stating that such a practice would “impact on free expression and censorship.” Nonetheless, if that content would cause someone significant harm, then 90% of respondents said it should be removed.

The threat of manipulated photos and videos circulating on social media has caused quite a stir of late. Only yesterday, YouTube announced its plan to weed out manipulated content in the run-up to the 2020 elections.

The state of California imposed a ban on deepfake videos last fall, passing a bill that makes it illegal to manipulate and distribute videos of political candidates. California Assembly member Marc Berman, who wrote that bill, called deepfake technology “a powerful and dangerous new tool in the arsenal of those who want to wage misinformation campaigns to confuse voters.”

In January, Facebook Inc. joined the chorus, saying such technology was a rising concern. Prior to this, the company had come under pressure after a manipulated video of House Speaker Nancy Pelosi was shared on the platform. “Going forward, we will remove misleading manipulated media,” Facebook said.

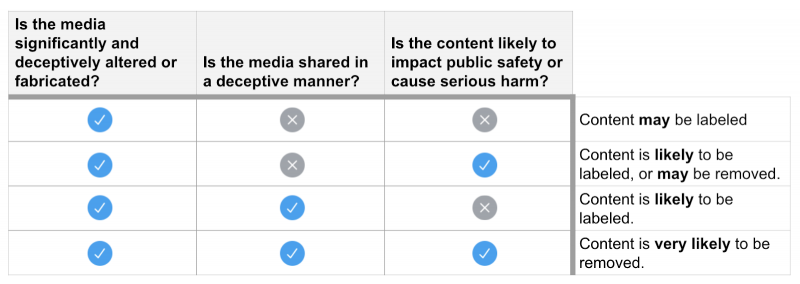

Twitter explained that when addressing such content, it will first look at how it has been manipulated — for example, if the content has been “substantially edited in a manner that fundamentally alters its composition, sequence, timing, or framing.” The audio will also be scrutinized.

The next thing Twitter will look at is whether the content has been shared in a deceptive manner with the intent to spread a falsity. On top of that, the context of the content will be reviewed, such as what information or links are provided with the video or image.

Lastly, Twitter will ask whether the content is likely to affect public safety or cause serious harm. If it’s fabricated, deceptive and likely to cause harm, it will be removed. If it isn’t likely to cause harm but is still deceptive, it might be labeled or taken down.
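For readers who think in code, the review steps described above can be sketched roughly as follows. This is only an illustration of the criteria as reported here, not Twitter's actual system: the `Assessment` fields, the `Action` names and the `decide` function are all hypothetical, and combinations the article does not describe are returned as unspecified rather than guessed.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    REMOVE = "removed"
    LABEL_OR_REMOVE = "might be labeled or taken down"
    UNSPECIFIED = "outcome not described in the article"


@dataclass(frozen=True)
class Assessment:
    # Hypothetical field names, chosen for illustration only.
    manipulated: bool      # substantially edited or fabricated
    deceptive: bool        # shared in a deceptive manner
    likely_harmful: bool   # likely to affect public safety or cause serious harm


def decide(a: Assessment) -> Action:
    """Map the three reported checks to the outcomes the article describes."""
    # Assumption: the policy only concerns manipulated or fabricated media.
    if not a.manipulated:
        return Action.UNSPECIFIED
    if a.deceptive and a.likely_harmful:
        return Action.REMOVE
    if a.deceptive and not a.likely_harmful:
        return Action.LABEL_OR_REMOVE
    # Manipulated but not deceptive: the article does not say what happens.
    return Action.UNSPECIFIED


if __name__ == "__main__":
    doctored_clip = Assessment(manipulated=True, deceptive=True, likely_harmful=False)
    print(decide(doctored_clip).value)
```

In practice each of these checks would be a judgment call by reviewers rather than a simple boolean, which is part of why Twitter says it expects to make errors.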

Once content is labeled as manipulated, a warning will appear when people try to retweet or “like” it. Twitter may also add more context to the content and reduce its visibility.

“This will be a challenge and we will make errors along the way — we appreciate the patience,” said Twitter. “However, we’re committed to doing this right. Updating our rules in public and with democratic participation will continue to be core to our approach.”
