INFRA

By our estimates, Amazon Web Services Inc. will generate about $9 billion in storage revenue this year and is now the second-largest supplier of enterprise storage behind Dell Technologies Inc.

We believe AWS storage revenue will surpass $11 billion in 2022 and continue to outpace on-premises storage growth by more than 1,000 basis points for the next three to four years. At its third annual Storage Day event on Sept. 2, AWS signaled a continued drive to think differently about data storage and transform the way customers migrate, manage and add value to their data over the next decade.

In this Breaking Analysis we’ll give you a brief overview of what we learned at AWS Storage Day, share our assessment of the big announcement of the day – a deal with NetApp Inc. to run its full ONTAP stack natively in the cloud as a managed service – and share some new data on how we see the market evolving. In addition, we’ll share the perspective of AWS executives on the giant cloud company’s storage strategy, how it thinks about hybrid and where it fits into the emerging data mesh conversation.

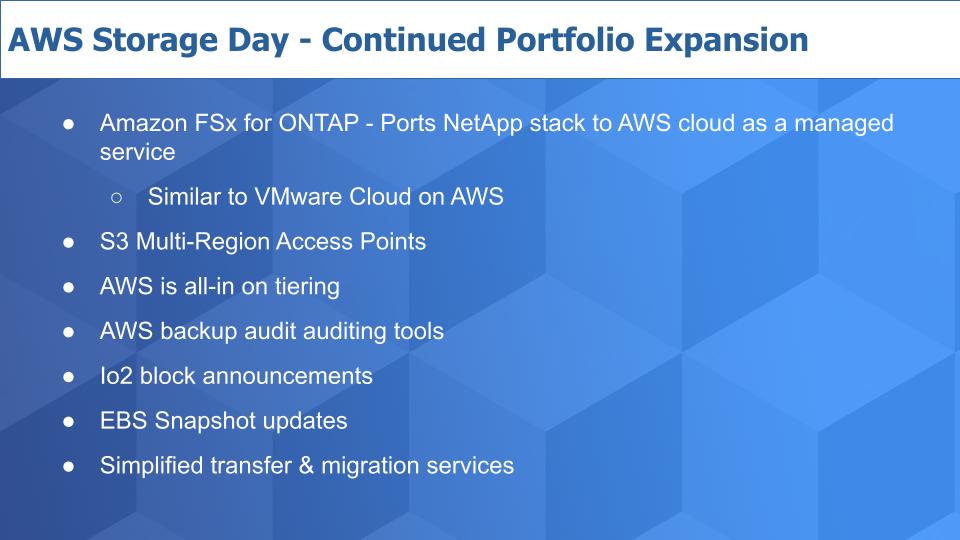

Let’s start with a quick overview of the announcements covered at Storage Day.

As with most AWS events, this one had a number of announcements, introduced at a pace that was predictably fast. Above is a quick list of most of them with some comments on each. (* Disclosure below.)

The big news is the announcement with NetApp. AWS and NetApp have engineered a solution that ports the full NetApp stack onto AWS, and it will be delivered as a fully managed service. This is important because previously, customers either had to settle for a cloud-based file service that had less functionality than they could get with NetApp on-prem, or they had to give up the agility, elasticity and pay-by-the-drink model of the cloud.

Now customers can get access to a fully functional NetApp stack with services around data reduction, snaps, clones, multiprotocol support, replication and all the services ONTAP delivers, in the cloud as a managed service through the AWS console.

Chris Mellor posted a more in-depth assessment of the features and pricing on his blog if you’d like more detail.

Our estimate is that 80% of the data on-prem is stored in file format. We all know about S3 object storage, but the biggest market opportunity for AWS from a capacity standpoint is file storage. In some respects, this announcement reminds us of the VMware Cloud on AWS deal but applied to storage. It’s an excellent example of a legacy on-prem company leaning into the cloud and taking advantage of the massive capital spending buildout that AWS and the other big hyperscalers have “gifted” to the industry.

NetApp’s Anthony Lye told us this is bigger than the AWS-VMware deal. We’ll come back to that in a moment.

AWS also announced S3 Multi-Region Access Points, a service that optimizes storage performance by taking into account latency, network congestion and the location of data copies, then delivering data via the best route.
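To make the routing idea concrete, here is a toy model in Python. It is not AWS's algorithm and not the S3 API: the `RegionEndpoint` type, the latency and congestion figures, and the `congestion_penalty_ms` weight are all hypothetical. It only illustrates the principle described above, which is to consider the regions that hold a copy of the data and pick the one with the best effective latency once congestion is factored in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionEndpoint:
    name: str           # region identifier (illustrative only)
    has_copy: bool      # does this region hold a replica of the data?
    latency_ms: float   # client-to-region latency (hypothetical measurement)
    congestion: float   # 0.0 = idle, 1.0 = saturated (hypothetical metric)


def route_request(endpoints, congestion_penalty_ms: float = 100.0) -> str:
    """Toy router: choose the replica region with the lowest effective latency.

    Effective latency = latency_ms + congestion * congestion_penalty_ms.
    The penalty weight is an arbitrary assumption for illustration.
    """
    # Only regions that actually hold a copy can serve the request.
    candidates = [e for e in endpoints if e.has_copy]
    if not candidates:
        raise ValueError("no region holds a copy of the data")
    best = min(
        candidates,
        key=lambda e: e.latency_ms + e.congestion * congestion_penalty_ms,
    )
    return best.name
```

With a nearby but congested region (20 ms, congestion 0.6) and a farther idle one (70 ms, congestion 0.0), this toy router picks the idle region, because 20 + 0.6 × 100 = 80 exceeds 70. A region without a copy is never chosen, however close it is.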

AWS announced improvements to tiering, including EFS Intelligent-Tiering to cost-optimize file storage. It also announced S3 Intelligent-Tiering changes under which it will no longer charge for the monitoring and automation of small objects of less than 128 KB.
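The small-object rule can be sketched in a few lines of Python. This is a minimal illustration, not AWS billing logic: it assumes "128 KB" means 128 × 1,024 bytes, and `rate_per_1000_objects` is a placeholder parameter rather than quoted AWS pricing. The `StoredObject` type and helper functions are hypothetical.

```python
from dataclasses import dataclass

# Assumption: the 128 KB cutoff is interpreted as 128 * 1024 bytes.
SMALL_OBJECT_CUTOFF_BYTES = 128 * 1024


@dataclass
class StoredObject:
    key: str
    size_bytes: int


def billable_for_monitoring(obj: StoredObject) -> bool:
    """Objects smaller than the cutoff incur no monitoring/automation charge."""
    return obj.size_bytes >= SMALL_OBJECT_CUTOFF_BYTES


def monthly_monitoring_fee(objects, rate_per_1000_objects: float) -> float:
    """Fee for one month, charging only objects at or above the cutoff.

    rate_per_1000_objects is a hypothetical placeholder, not AWS pricing.
    """
    billable = sum(1 for o in objects if billable_for_monitoring(o))
    return billable / 1000 * rate_per_1000_objects
```

For example, a bucket holding a 40,000-byte thumbnail, a 2 MB report and an object of exactly 131,072 bytes has two billable objects under this sketch. For workloads dominated by small files, the change removes most of the monitoring cost that previously discouraged using automated tiering.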

Remember, years ago AWS hired a number of EMC engineers, and those engineers had built a lot of tiering functionality into EMC's boxes. We'll come back to that later in this episode.

In addition, AWS announced backup monitoring tools to ensure backups are in compliance with regulations and corporate edicts. This frankly is table stakes and about time.

AWS also made a number of other announcements, including direct block storage application programming interfaces that enable 64-terabyte snapshots from any block storage device, including on-prem storage, with recovery to EBS io2 block volumes. The company also announced simplified data migration tools and what it calls Managed Workflows to automate pre-processing of data.

The firehose of announcements is de rigueur for Amazonians at an event like this. But below we take a look at the broader picture of what’s happening in the storage business.

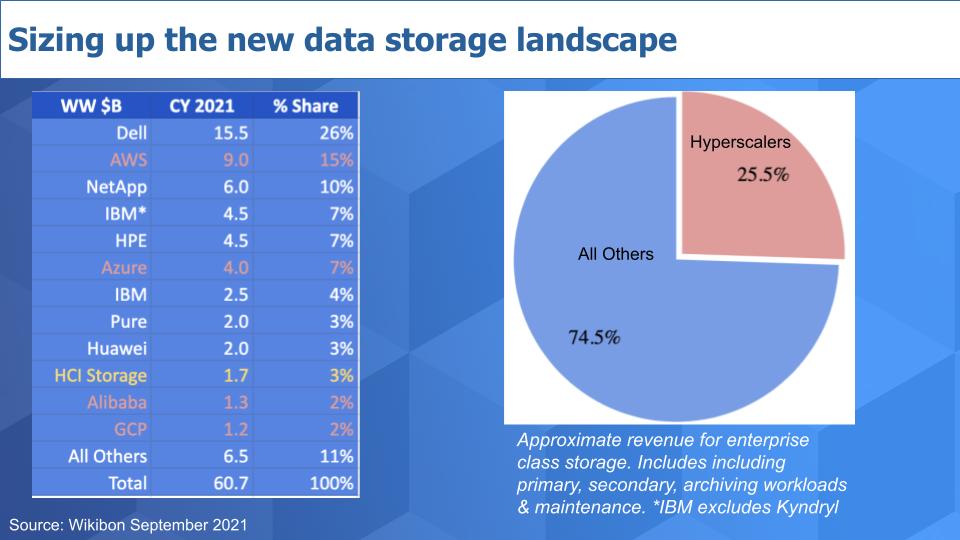

As we reported in previous Breaking Analysis segments, AWS’ storage revenue is on a path to $10 billion. The chart above puts this in context. It shows our estimates for worldwide enterprise storage revenue in the calendar year 2021. The data is meant to include all storage revenue including primary, secondary, archival storage and related maintenance revenue.

Dell is the leader in this $60 billion market, with AWS now hot on its tail with 15% share as we’ve defined the space.

In the pre-cloud days, customers would tell us, “Our storage strategy is we buy EMC for block and NetApp for file.” Although remnants of this past continue, the market is changing, as you can see above.

The companies highlighted in red represent the growing hyperscaler presence, and as the pie on the right shows, they now account for around 25% of the market. And they're growing much faster than the on-prem vendors: well over 1,000 basis points faster when combined.
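The headline numbers hang together arithmetically, and a short Python check makes that explicit. Every input below is one of our estimates from this analysis (about $9 billion for AWS in 2021, a roughly $60 billion market, around 25% hyperscaler share and more than $11 billion for AWS in 2022); the helper functions are simply illustrative. Note that $11 billion is a floor ("surpass"), so the implied growth rate is a lower bound.

```python
# Inputs are this analysis' estimates (USD billions, calendar years).
TOTAL_MARKET_B = 60.0     # worldwide enterprise storage revenue, 2021
AWS_2021_B = 9.0          # estimated AWS storage revenue, 2021
AWS_2022_B = 11.0         # projected AWS storage revenue, 2022 (a floor)
HYPERSCALER_SHARE = 0.25  # approximate combined hyperscaler share


def share(part: float, whole: float) -> float:
    """Fraction of the market represented by `part`."""
    return part / whole


def growth_rate(prev: float, curr: float) -> float:
    """Year-over-year growth expressed as a fraction."""
    return curr / prev - 1.0


def basis_points(fraction: float) -> float:
    """Convert a fraction into basis points (1 bp = 0.01 percentage point)."""
    return fraction * 10_000


aws_share = share(AWS_2021_B, TOTAL_MARKET_B)         # ~15% of the market
hyperscaler_rev = HYPERSCALER_SHARE * TOTAL_MARKET_B  # ~$15B across hyperscalers
aws_growth = growth_rate(AWS_2021_B, AWS_2022_B)      # at least ~22% growth

print(f"AWS share: {aws_share:.0%}")
print(f"Hyperscaler storage revenue: ${hyperscaler_rev:.0f}B")
print(f"Implied minimum AWS growth, 2021-2022: {aws_growth:.1%}")
# A 1,000 bp gap means the growth rates differ by at least 10 percentage points.
print(f"1,000 bp = {1000 / 10_000:.0%}")
```

The last line is the key translation: when we say the cloud is outpacing on-prem by more than 1,000 basis points, we mean its growth rate is at least 10 percentage points higher.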

A couple of other things to note in the data: We're excluding Kyndryl from IBM Corp.'s figures but including our estimates of storage software, such as Spectrum Protect, that is sold as part of the IBM cloud. By the way, before the Kyndryl spinoff, we believe IBM's storage business would have approached the size of NetApp's.

In yellow, we've highlighted the portion of hyperconverged infrastructure, or HCI, revenue that comprises storage. This includes VMware Inc., Nutanix Inc., Cisco Systems Inc. and others. Hewlett Packard Enterprise Co.'s HCI storage, however, we've counted in HPE's numbers rather than in the HCI line item. VMware and Nutanix are the largest HCI players, but in total the storage piece of that market is around $1.7 billion.

The point is, the way we traditionally understood this market is changing quite dramatically. Traditional on-prem is vying for budgets with cloud storage services that are rapidly gaining presence in the market. And we’re seeing the on-prem piece, including HCI, evolve into as-a-service models with the likes of Pure Storage Inc. and Nutanix, HPE’s GreenLake, Dell’s APEX and other on-prem cloudlike models.

This trend will only accelerate going forward.

NetApp is the gold standard for file services. It has been the market leader for a long time, and other than Pure, which is considerably smaller, NetApp is the one company that was consistently able to beat EMC in the market. EMC developed its own NAS stack and bought Isilon, with its excellent global file system, to compete with NetApp, but NetApp generally remains the best file storage company today. Emerging disruptors such as Qumulo Inc., VAST Data Inc. and WekaIO Inc. would take issue with that statement, and rightly so, as they have really promising technology. Still, NetApp remains the king of the file hill.

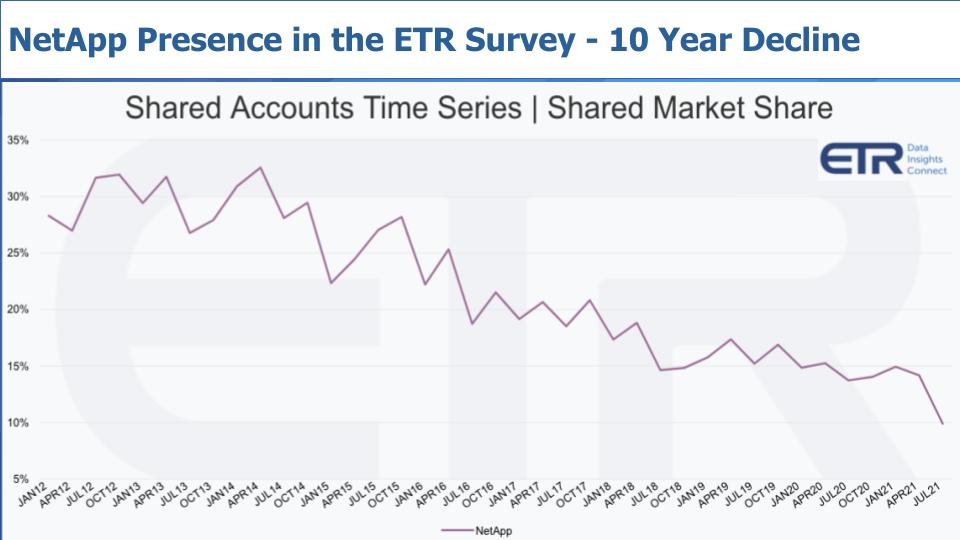

But NetApp has had some serious headwinds as the largest independent storage player, as seen in the Enterprise Technology Research chart above.

This data shows a nine-year view of NetApp’s presence in the ETR survey. Presence is referred to as Market Share, which measures the pervasiveness of responses in the ETR survey of over a thousand customers each quarter. The point is, while NetApp remains a leader, it has had a difficult time expanding its TAM because it had to defend its NAS castle. The company hit some major headwinds when it began migrating its base to ONTAP 8 and was late riding a number of new waves, including flash. But generally it has recovered and is focused now on the cloud opportunity, as evidenced by this deal with AWS.

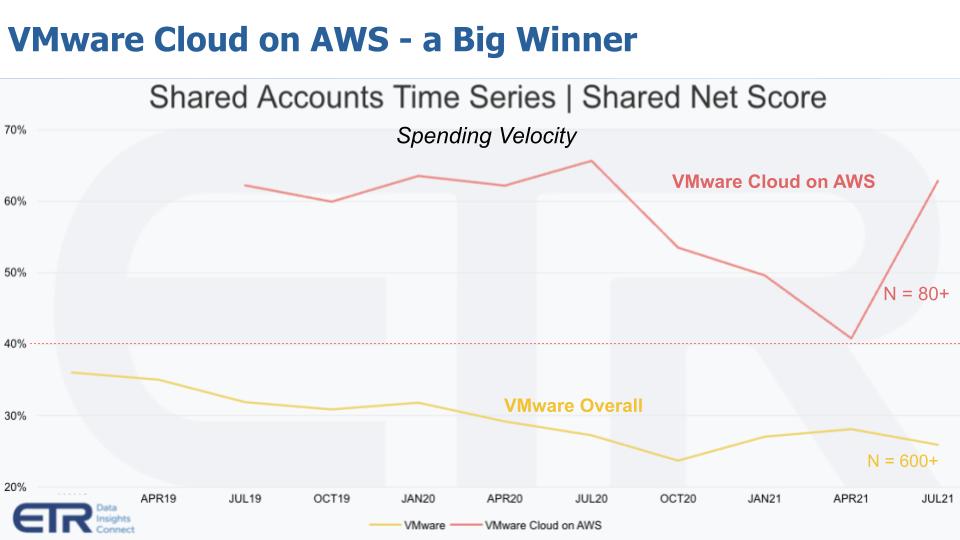

NetApp Vice President Anthony Lye believes this deal could be even bigger for the industry than VMware Cloud on AWS. You may be wondering, "How can that be?" VMware is the leader in the enterprise and has 500,000 customers. Its deal with AWS has been a tremendous success, as seen in the ETR chart above.

The data above shows spending momentum, or Net Score, from the point at which VMware Cloud on AWS was picked up in the ETR surveys with a meaningful N. The red solid line is VMware Cloud on AWS. Today its N is more than 80 responses in the survey. The yellow line is there for context. It represents VMware overall, which has a huge presence in the survey, with an N of more than 600. The red dotted line is the magic 40% mark.

Anything above that mark we consider elevated, and although we saw some deceleration during the height of COVID, VMware Cloud on AWS has consistently shown well in the survey, always above the 40% mark and frequently holding above 60%.

So could this NetApp deal be a bigger deal than VMware Cloud on AWS? Well, probably not, in our view given the tight relationship between VMware and AWS and VMware’s size. But we like the strategy of NetApp going cloud-native on AWS and AWS’ commitment to deliver this as a managed service.

Now where it could get interesting is across clouds. In other words, suppose NetApp can take a page from Snowflake Inc. and build an abstraction layer that hides the underlying complexity not only of the AWS cloud but also of Google Cloud Platform and Microsoft Azure, so that you log into the NetApp data cloud and it optimizes your on-prem, AWS, Azure and/or GCP file storage. We see that as a winning strategy, and NetApp just scored a major coup toward that vision.

Politically it may not sit well with AWS, but so what. NetApp has to go multicloud to expand its total available market.

When the VMware deal was announced, many people felt it was a one-way street where all the benefit would eventually go to AWS. In reality this has been a near-term win-win-win for AWS, VMware and, importantly, their joint customers. Long-term it will clearly be a win for AWS, which gets access to VMware's large customer base, but we think it will also serve VMware well because it gives the company a clear cloud strategy, especially if it can go cross-cloud and eventually to the edge.

We think similar benefits will accrue to NetApp and its customers. While so many of NetApp’s competitors make defensive statements about the public cloud, NetApp is going all in and leveraging the massive infrastructure of AWS. Will the NetApp-AWS deal be as big as VMware’s? Probably not… but it’s big. NetApp just leapfrogged the competition because of the deep engineering commitment AWS has made, and the cultural mindset of NetApp to go on the offense. This isn’t a marketplace deal – it’s a native managed service, and that’s huge.

We’re going to close with a few thoughts on AWS’ storage strategy. We’ll share some thoughts on hybrid, its challenges related to capturing mission-critical workloads and where AWS fits into the emerging data mesh conversation — one of our favorite subjects.

Let’s talk about AWS’ storage strategy overall. As with other services, AWS’ approach is to give builders access to tools at a very granular level. That means lots of APIs and access to primitives that are essentially building blocks. While this may require greater developer skills, it allows AWS to get to market quickly and add functionality faster.

Enterprises, however, will pay up for solutions and so this leaves some nice white space for partners and competitors.

Mai-Lan Tomsen Bukovec is an AWS vice president and we spoke with her on theCUBE, SiliconANGLE Media’s video studio. We asked her to describe AWS’ storage strategy. Here’s a clip of what she said:

So you can see from Mai-Lan's statements how Amazon brings an outside-the-box mentality. At the end of the day customers want rock-solid storage that's cheap and lightning-fast. They always have and they always will. But what we hear from AWS is that it thinks about delivering those capabilities in the broader context of an application or business. Not that traditional suppliers don't think about that as well, but the cloud services mentality is different from dropping a box off at a loading dock, turning it over to a professional services organization and moving on to the next opportunity.

We also had a chance to speak with Wayne Duso, another AWS vice president. Wayne is a longtime tech athlete. For years he was responsible for building storage arrays at EMC, and AWS, as we mentioned earlier, hired a number of EMC engineers years ago. We asked Wayne what the difference in mentality is between building boxes and building services. Below is a clip of what he said:

So the big difference is that services aren't bound by the constraints of the box, and there are lots of opportunities to blend with other services.

Now all that said, there are cases where the box will win, particularly where latency is king. We’ll come back to that.

Here's our take, then let's hear from Tomsen Bukovec again. The cloud is expanding. It's moving to the edge. AWS looks at the data center as another edge node, and it's bringing its infrastructure-as-code mentality to the edge and to data centers. So if AWS is truly customer-centric, which we believe it is, it will naturally have to accommodate on-prem use cases, and it is doing just that. Here's how Tomsen Bukovec explained the way AWS is thinking about hybrid:

So look, this is exactly a case where the box or appliance wins. Latency matters, and AWS gets that. This is where Matt Baker of Dell is right: It's not a zero-sum game, especially as it pertains to the cloud-versus-on-prem discussion. But a budget dollar is a budget dollar, and a dollar can't go to two places. So the battle will come down to who has the best solution, who has the best relationship and who can deliver the most rock-solid storage at the lowest cost and highest performance.

Let’s examine mission-critical workloads for a moment.

We're seeing AWS go after these applications. We're talking here about Oracle Corp., SAP SE, SQL Server and DB2 work: high-volume transactions, mission-critical work, the family jewels of the corporation. Now there's no doubt that AWS is picking up a lot of low-hanging fruit with business-critical workloads, which those customers may well consider mission-critical. Email, for example, is often mission-critical.

But the really hardcore mission-critical database work isn't going to the cloud without a fight. AWS has made improvements to block storage to remove one of the obstacles, but generally we see this as a very long road ahead for AWS and other cloud suppliers. Oracle is the king of mission-critical work, along with IBM mainframes, and that infrastructure generally is not easily moved to the cloud. It's too risky, too expensive and the business case just isn't there. The one possible exception is Oracle's effort to put specialized infrastructure into its cloud to support such workloads and minimize migration risks. This is where Oracle is winning in the market.

But it’s all in the definition. No doubt plenty of business-critical work that’s important and considered mission-critical by the customer will move to AWS. But for the really large transaction systems, even AWS is struggling to move its most important transaction systems off Oracle. We’re skeptical that dynamic will change but we’ll keep an open mind. It’s just that today, as we define the most critical workloads, we don’t see a lot of movement to the hyperscale clouds.

We're going to close with some thoughts on data mesh. We've written extensively about this and interviewed and collaborated with Zhamak Dehghani, who coined the term. We've also announced a media collaboration with the data mesh community, and we believe data mesh is a strong direction for the industry.

We wanted to understand how AWS thinks about data mesh and where it fits in the conversation. Here’s what Mai-Lan Tomsen Bukovec had to say about that in the clip below.

It's very true that AWS has customers that are implementing data mesh. JPMorgan Chase is one firm doing so, as we've reported, and HelloFresh has initiated a data mesh architecture in the cloud. We think the point is that the issues and challenges around data mesh are more organizational and process-related and less focused on the technology platform.

Data by its very nature is decentralized. So when Mai-Lan talks about customers building on centralized storage – that is a centralized logical view, but not necessarily physically centralized.

This is an important point, as JPMC pointed out in its excellent overview: The data mesh must accommodate data products and services that are in the cloud and also on-prem. Data mesh must be inclusive. Data mesh looks at the data store as a node on the mesh, and should not be confined by the technology, be it a data warehouse, a data hub, a data mart, a data lake or an S3 bucket.
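The distinction between a logically centralized view and physically distributed data can be sketched as a small catalog in Python. This is a hypothetical illustration only: `DataProduct`, `MeshCatalog` and the location and store-type labels are invented for the example and do not correspond to any AWS service or to JPMC's implementation. The point is that the catalog gives one place to find data products, while each product stays wherever its owning domain keeps it, whether an S3 bucket in the cloud or a data warehouse on-prem.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataProduct:
    name: str
    owner_domain: str  # business domain accountable for the product
    location: str      # illustrative labels, e.g. "aws" or "on-prem"
    store_type: str    # e.g. "s3-bucket", "data-warehouse", "data-lake"


@dataclass
class MeshCatalog:
    """Hypothetical logical index over physically distributed data products.

    The catalog records where each product lives; it does not copy or
    centralize the data itself.
    """
    _products: dict = field(default_factory=dict)

    def register(self, product: DataProduct) -> None:
        # Each domain registers its own product; the catalog only indexes it.
        self._products[product.name] = product

    def lookup(self, name: str) -> DataProduct:
        return self._products[name]

    def locations(self) -> set:
        # The set of physical locations the logical view spans.
        return {p.location for p in self._products.values()}
```

Registering an on-prem "payments" warehouse owned by finance alongside an AWS-hosted "clickstream" bucket owned by marketing yields one catalog spanning both locations, which is the inclusive, technology-agnostic node model the JPMC overview describes.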

So we would say this: Although people think of the cloud as a centralized walled garden, and in many respects it is, that very same cloud is expanding into a massively distributed architecture. And that fits the data mesh architectural model.

The bottom line is that the storage industry as we've known it for decades is evolving rapidly into a data business. Services will win over boxes. Data products and data services will be the focus of monetization efforts. Underlying storage infrastructure and file systems will be broadly accessible and distributed. Storage will become less visible, and its technical issues will become an operational detail rather than a central focus of an information technology organization. Federated governance and self-service experience will become the next big challenges organizations face in the coming data era.

And data mesh will only keep gaining momentum, in our view. As we've stressed, the big challenges of data mesh are less technical and more cultural. We're excited to see how data mesh plays out, and we're thrilled to be a media partner of that community.

Remember we publish each week on Wikibon and SiliconANGLE. These episodes are all available as podcasts wherever you listen. Email david.vellante@siliconangle.com, DM @dvellante on Twitter and comment on our LinkedIn posts.

Also, check out this ETR Tutorial we created, which explains the spending methodology in more detail. Note: ETR is a separate company from Wikibon and SiliconANGLE. If you would like to cite or republish any of the company’s data, or inquire about its services, please contact ETR at legal@etr.ai.

(* Disclosure: TheCUBE, owned by SiliconANGLE Media like Wikibon, is a paid media partner for the AWS Storage Day. Neither AWS, the sponsor of theCUBE’s event coverage, nor other sponsors have editorial control over content on Wikibon, theCUBE or SiliconANGLE.)
