INFRA

Cerebras Systems Inc., a maker of supersized artificial intelligence chips, today disclosed the financial terms of its upcoming public offering.

The company hopes to raise as much as $3.5 billion by selling 28 million shares for $115 to $125 apiece. Cerebras’ underwriters, the banks entrusted with coordinating its listing, have the option to buy an additional 4 million shares. The value of the offering could increase even further if Cerebras raises its price range, which fast-growing tech firms often do when there’s strong investor demand.
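For readers who want to check the math, the offering size can be reproduced with a few lines of Python. This is a minimal sketch using only the share counts and price range quoted above. The scenario in which the underwriters exercise their full option at the top price is an illustrative assumption, not a figure from the filing, and the function name is hypothetical.

```python
# Share counts and price range as reported in the article.
BASE_SHARES = 28_000_000
OPTION_SHARES = 4_000_000  # underwriters' option to buy additional shares
PRICE_LOW, PRICE_HIGH = 115, 125  # dollars per share


def gross_proceeds(shares: int, price: int) -> int:
    """Gross proceeds in dollars, before underwriting fees and expenses."""
    return shares * price


base_low = gross_proceeds(BASE_SHARES, PRICE_LOW)
base_high = gross_proceeds(BASE_SHARES, PRICE_HIGH)

# Assumption for illustration: the full option is exercised at the top price.
full_high = gross_proceeds(BASE_SHARES + OPTION_SHARES, PRICE_HIGH)

print(f"Base offering: ${base_low / 1e9:.2f}B to ${base_high / 1e9:.2f}B")
print(f"With full option at top price: ${full_high / 1e9:.2f}B")
```

At the top of the range, the base offering matches the $3.5 billion figure, and the additional 4 million shares would add another $500 million.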

The chipmaker’s sales jumped 76% in 2025, to $290.3 million. Moreover, it turned an $87.9 million profit after losing $485 million a year earlier.

Cerebras’ improving financial performance is likely one of the factors behind its ambitious valuation target. The public offering terms the company is seeking would give it a market capitalization of $26.6 billion at the top end, a $3.6 billion increase from February. That month, Cerebras raised $1 billion in funding at a $23 billion valuation.
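The valuation step-up can be worked through the same way. The sketch below uses only the figures quoted above. The implied share count is a rough back-of-the-envelope assumption that simply divides the top-end market capitalization by the top-end share price; it ignores details such as share classes and dilution that a prospectus would spell out.

```python
# Figures as reported in the article, in dollars.
IPO_MARKET_CAP = 26_600_000_000  # top end of the proposed range
FEB_VALUATION = 23_000_000_000   # valuation in the February funding round
TOP_PRICE = 125                  # top of the per-share price range

# Absolute and relative increase over the February valuation.
step_up = IPO_MARKET_CAP - FEB_VALUATION
step_up_pct = step_up / FEB_VALUATION * 100

# Rough assumption: shares outstanding implied by market cap / share price.
implied_shares = IPO_MARKET_CAP // TOP_PRICE

print(f"Step-up: ${step_up / 1e9:.1f}B ({step_up_pct:.1f}%)")
print(f"Implied shares outstanding: {implied_shares / 1e6:.1f}M")
```

Under these assumptions, the top-end valuation is roughly 16% above the February round.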

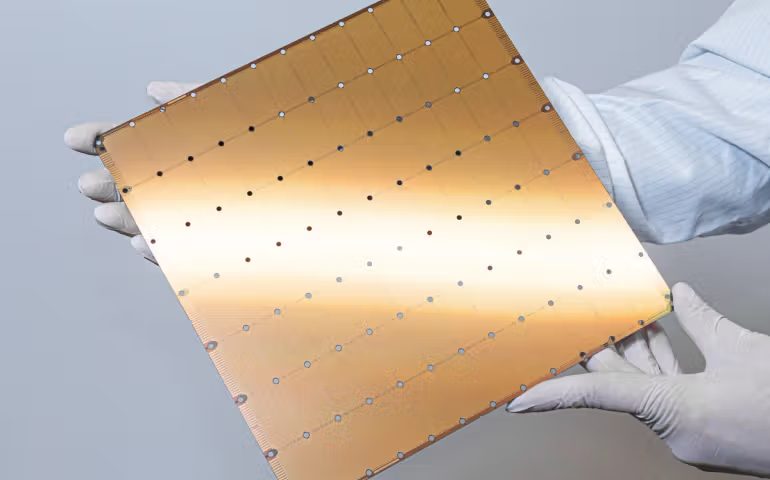

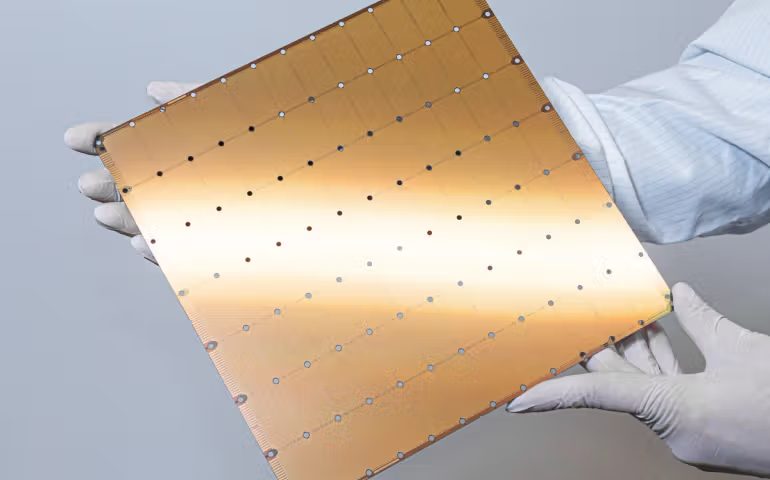

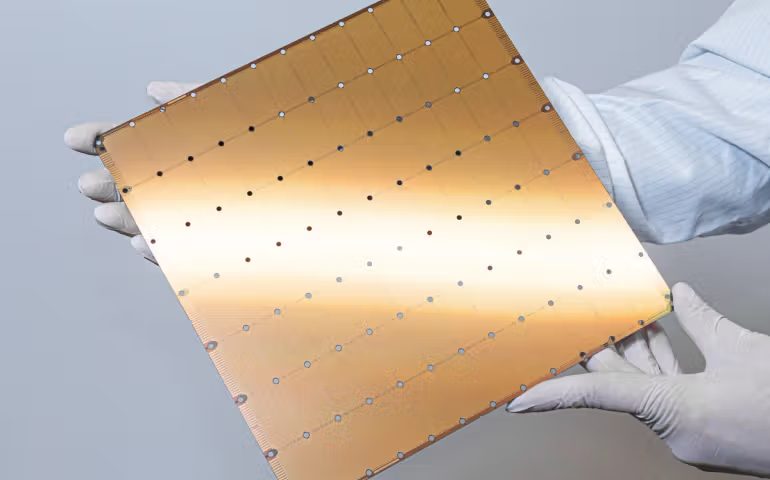

The company sells a wafer-size AI chip called the WSE-3 that is several times the size of Nvidia Corp.’s Blackwell B200 graphics processing unit. One of its main selling points is a 44-gigabyte pool of SRAM. SRAM is a memory variety that uses significantly more transistors per bit than standard server DRAM: typically six, compared with a single transistor and a capacitor in a DRAM cell. As a result, it’s considerably faster and far more expensive per gigabyte.

Processors are paced by a clock signal, which is typically derived from a quartz crystal that oscillates millions of times per second in response to an electric current. Each tick of that signal corresponds to a clock cycle, the basic unit of time in chips. Cerebras says WSE-3’s 900,000 cores can access the onboard SRAM pool with latency of one clock cycle, which is significantly faster than what a standard graphics card offers. The result is faster performance on inference, the process of providing responses to queries.

Cerebras ships the WSE-3 as part of a 1.8-ton appliance called the CS-3. Customers can link together multiple CS-3 systems into a cluster with the help of auxiliary devices that are likewise provided by the startup.

There’s a specialized storage system, MemoryX, that is designed to hold model weights outside the chip. Those are the learned parameters that an AI model applies to its input while processing a prompt. A switch called SwarmX manages the task of moving data between the MemoryX and CS-3 systems in a cluster. Additionally, the switch compresses some of the data to optimize storage hardware utilization.

Cerebras filed to go public last month after inking a broad chip supply deal with OpenAI Group PBC. Under the agreement, it will provide the ChatGPT developer with as much as 2 gigawatts of computing capacity through 2030. Cerebras expects the contract, which is reportedly worth over $20 billion, to account for a substantial portion of its revenue in the coming years.

The public cloud market could emerge as another important revenue source. A few weeks after announcing the OpenAI deal, Cerebras teamed up with Amazon Web Services Inc. to make the WSE-3 chip available on the cloud giant’s platform. AWS’ adoption of the chip may prompt other infrastructure-as-a-service providers to follow suit, which could translate into significant sales opportunities for Cerebras.
