INFRA


It’s another week in which the funding frenzy in artificial intelligence continues unabated, as World Labs Inc. and Ineffable Intelligence Inc. each raised $1 billion — the latter even before it has a product. OpenAI Group PBC is reportedly raising $100 billion on an $850 billion valuation, and at least a half-dozen other startups raised as much as $300 million.

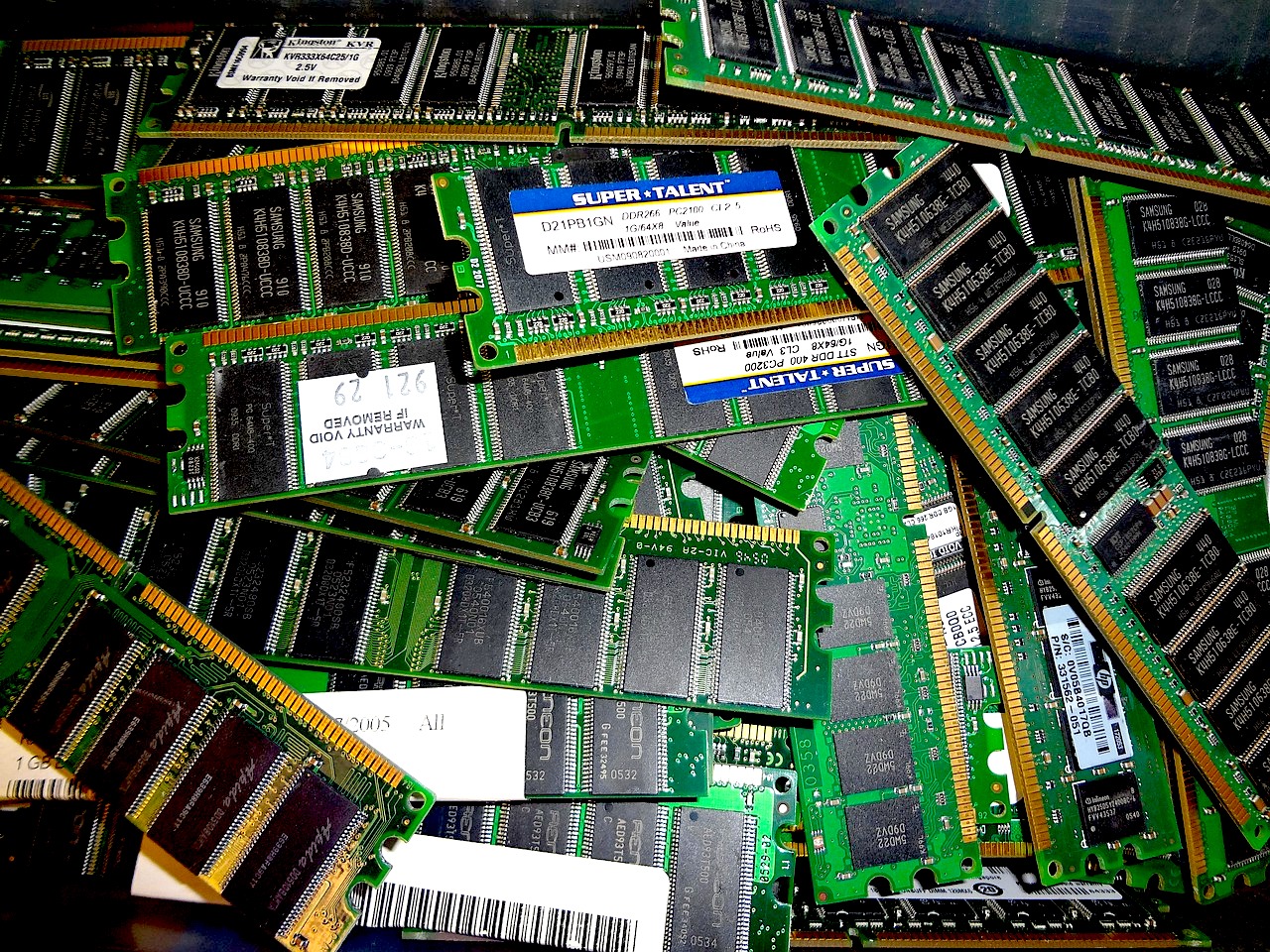

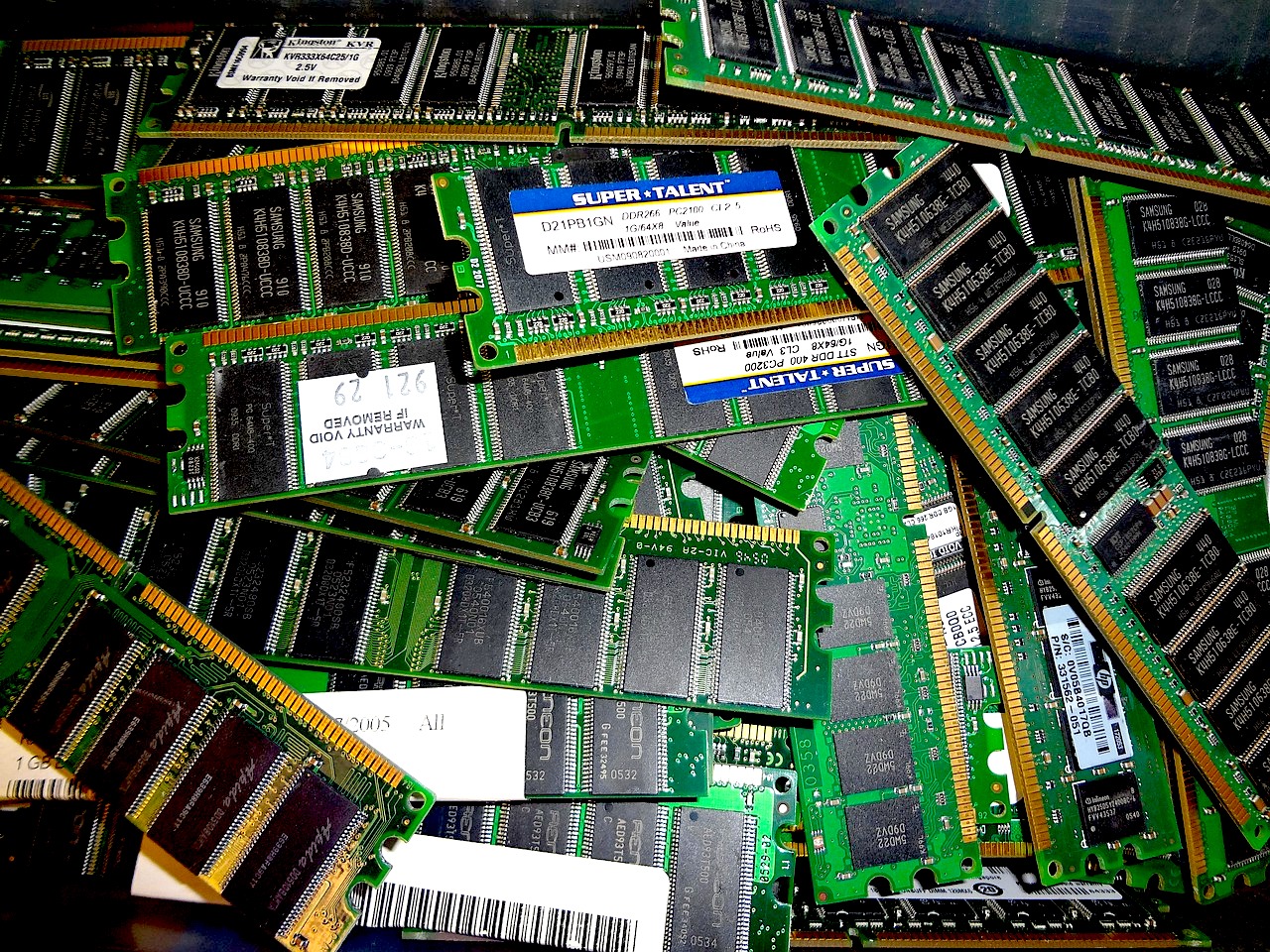

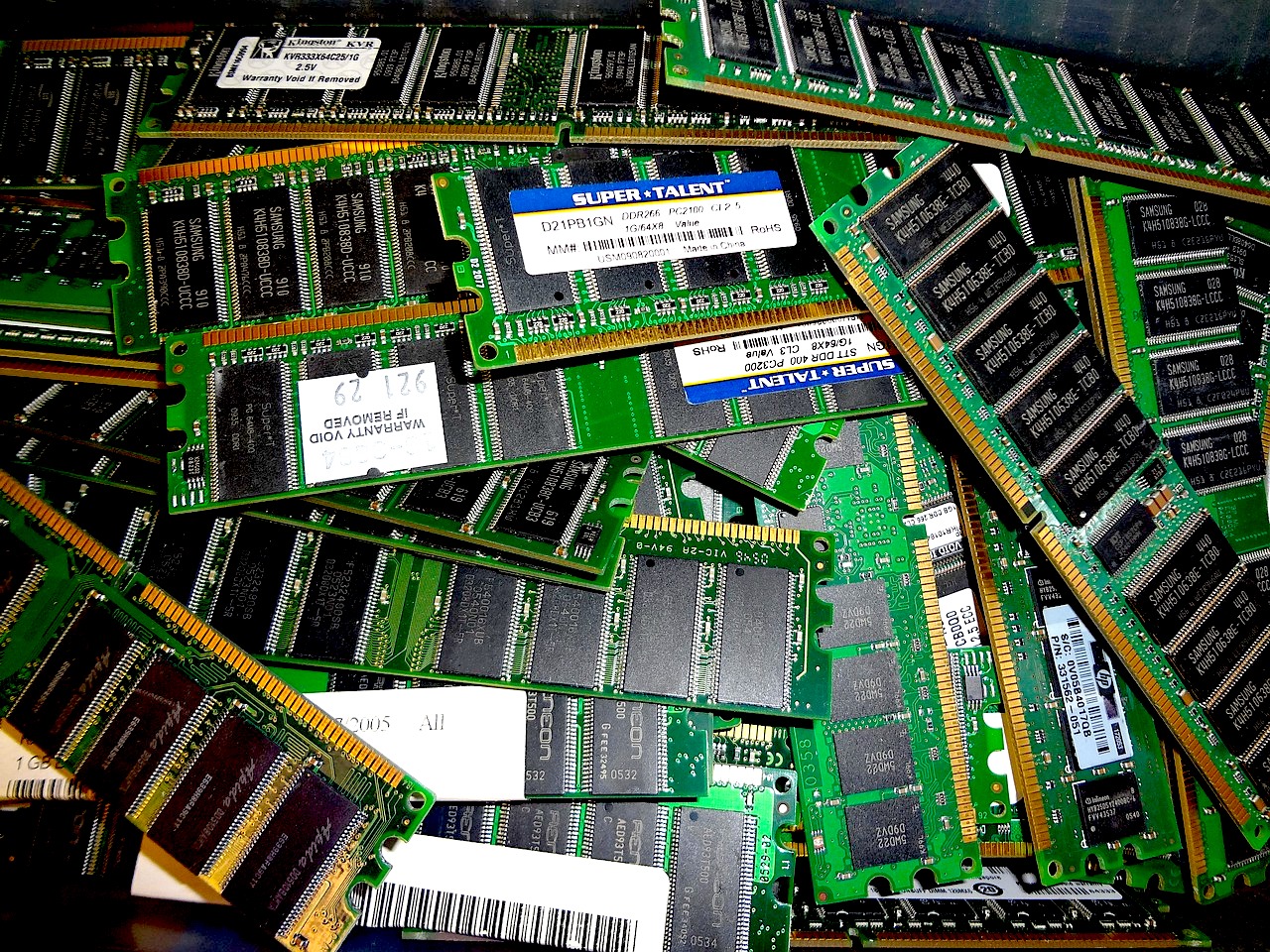

On the surface, that looks like exuberance. Underneath it, something far more structural is happening. All that capital is chasing one constraint: memory.

The memory semiconductor industry bottomed at roughly $85 billion to $90 billion in revenue in 2023. By 2027, it is tracking toward $800 billion to $850 billion.

That’s a nine-fold expansion in four years. No segment in chip history has ever scaled that fast at that size. By 2026, memory alone is projected to reach $550 billion to $570 billion, roughly 55% to 58% of the entire semiconductor market. Historically, memory has hovered in a 25% to 30% band.
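As a rough check on the growth figures above, the sketch below computes the expansion multiple and the implied compound annual growth rate. It uses the midpoints of the quoted revenue ranges, which is an illustrative assumption rather than anything the forecasts themselves specify. The helper names are made up for this example.

```python
# Figures are midpoints of the ranges quoted above, in billions of dollars.
# Using midpoints is an illustrative assumption, not a forecast.

def midpoint(low, high):
    """Middle of a quoted range."""
    return (low + high) / 2

def growth_multiple(start, end):
    """How many times larger `end` is than `start`."""
    return end / start

def cagr(start, end, years):
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1

memory_2023 = midpoint(85, 90)     # trough-year revenue
memory_2027 = midpoint(800, 850)   # projected revenue

multiple = growth_multiple(memory_2023, memory_2027)
annual_rate = cagr(memory_2023, memory_2027, years=4)

print(f"2023 to 2027 multiple: {multiple:.1f}x")
print(f"Implied compound annual growth: {annual_rate:.0%}")
```

On these midpoints the multiple comes out a little above nine, which corresponds to revenue growing by roughly three-quarters every year for four years running.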

Memory revenue in 2026 will roughly equal what the entire global semiconductor industry generated in 2022 — logic, analog, everything included.

This is not a cyclical bounce. This is structural inversion.

Look at the headlines:

Everyone is building agents.

Everyone is training models.

Everyone is racing toward “superintelligence.”

But here’s the part that doesn’t get enough attention: AI is memory-bound.

A typical AI server uses roughly eight times more memory than a traditional server. AI server memory spend is projected to jump from $35 billion to $40 billion in 2025 to $175 billion to $190 billion by 2027 — roughly a fivefold increase in two years. The server has become a memory appliance. Memory’s share of a standard server’s bill of materials has surged from roughly 30% two years ago to 65% to 70% today. Compute has gone from majority to minority.

That structural inversion has never happened before.

When Meta buys millions of GPUs, it is also implicitly buying a tidal wave of high-bandwidth memory and advanced dynamic random-access memory. When OpenAI and Anthropic scale agents that require persistent context and ever-growing KV caches, they are driving linear memory demand.
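To make the KV cache point concrete, here is a minimal sketch of the standard accounting for a transformer's key-value cache: one key tensor and one value tensor per layer, each sized by the number of KV heads, the head dimension and the number of tokens held in context. The model dimensions below are hypothetical and do not describe any specific OpenAI or Anthropic model.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Approximate KV cache size in bytes.

    The leading 2 accounts for storing both keys and values.
    bytes_per_value=2 assumes 16-bit precision (an assumption).
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)

# Hypothetical model configuration, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=1)
long_context = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=128 * 1024)

print(f"Per token: {per_token / 1024:.0f} KiB")
print(f"One 128K-token session: {long_context / 1024**3:.0f} GiB")
```

The cache grows in direct proportion to context length and to the number of concurrent sessions, so an agent that keeps long-lived context for many users multiplies the memory needed per deployment.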

The AI contest between OpenAI’s push for an open control plane (“Frontier”) and Anthropic’s more vertically integrated agent strategy may dominate headlines — but both models run on the same scarce substrate: high-bandwidth memory.

From 1986 to 2020, semiconductor average selling prices oscillated in a tight 31- to 44-cent band. Volume drove growth.

That era is over. ASPs have nearly doubled in five years and are projected to reach 91 cents in 2026 and trend toward $1.22 by 2030. Unit growth is roughly 4% annually. Revenue is compounding at 14%. The delta is pricing.
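The "delta is pricing" claim can be worked through directly. Revenue is units multiplied by average selling price, so the price growth implied by 14% revenue growth and 4% unit growth falls out of a simple ratio. The sketch below also computes the annual rate implied by the 91-cent and $1.22 ASP projections. The function names are illustrative, and the two results cover different time windows, so they need not match.

```python
def implied_price_growth(revenue_growth, unit_growth):
    """Price growth implied when revenue = units * price."""
    return (1 + revenue_growth) / (1 + unit_growth) - 1

def cagr(start, end, years):
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1

# Growth rates quoted in the text above.
asp_growth = implied_price_growth(revenue_growth=0.14, unit_growth=0.04)

# Projected ASPs in dollars: 2026 and 2030.
asp_cagr_2026_2030 = cagr(0.91, 1.22, years=4)

print(f"Implied annual ASP growth: {asp_growth:.1%}")
print(f"ASP growth implied by 2026-2030 projections: {asp_cagr_2026_2030:.1%}")
```

On the quoted figures, close to 10 points of the 14% revenue growth come from price rather than volume.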

DRAM pricing is on track to rise 275% to 300% from 2025 through 2027 — more than triple the 2017-2018 supercycle increase, and on a base roughly three times larger. Conventional DRAM ASPs are converging toward $1.20 to $1.30 per gigabit — approaching HBM3E territory.

The premium for the most advanced memory in the world is compressing from the bottom up, because conventional DDR5 is so scarce that its price is rising to meet HBM. Every HBM wafer uses roughly three times the silicon area per gigabyte compared to conventional DRAM. Roughly 100,000 to 120,000 wafer starts per month shifting to HBM effectively removes 300,000 to 350,000 equivalent wafers of conventional output.
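One reading of the wafer arithmetic above is a straight multiplication: each wafer start moved to HBM is counted as roughly three wafers of conventional DRAM output. The sketch below applies that ratio uniformly, which is a simplifying assumption, and the function name is invented for this example.

```python
# "Roughly three times the silicon area per gigabyte", per the text above.
HBM_AREA_RATIO = 3

def displaced_conventional_wafers(hbm_wafer_starts, area_ratio=HBM_AREA_RATIO):
    """Conventional-DRAM-equivalent wafers under a uniform area ratio."""
    return hbm_wafer_starts * area_ratio

low = displaced_conventional_wafers(100_000)
high = displaced_conventional_wafers(120_000)

print(f"Equivalent conventional wafers per month: {low:,} to {high:,}")
```

A flat 3x ratio gives 300,000 to 360,000, slightly above the quoted upper bound of 350,000, which suggests the underlying estimate uses a ratio a little under three at the high end.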

That’s the hidden mechanism driving DDR5 pricing into HBM territory.

Even the most aggressive capital programs won’t resolve this quickly.

Micron’s $200 billion U.S. investment plan doesn’t bring meaningful new DRAM supply online until mid-2027. Samsung’s and SK Hynix’s new fabs won’t ramp meaningfully until the second half of 2027 or later. Total DRAM wafer starts are growing roughly 6% to 8% year over year exiting 2026. Inventory has fallen to roughly three to four weeks at major producers. Customers are already negotiating 2028 allocations.

It’s February 2026. That is not a normal semiconductor cycle.

The financial impact is spreading across the stack.

Mobile NAND demand is projected to be flat year over year in 2026 for the first time in history. Smartphone specs are headed south. PC shipments are softening because manufacturers cannot absorb pricing.

At the same time, enterprise leaders — per theCUBE Research’s 2026 predictions — are under pressure to generate a return from their massive AI investments.

Information technology budgets may be rising nominally, but inflation in infrastructure costs is eroding real value.

Memory is the inflation driver.

For Samsung Electronics Co. Ltd., SK Hynix Inc. and Micron Technology Inc., margins are approaching record territory.

SK Hynix’s conventional DRAM operating margins are estimated in the high 70% range. Samsung sits in the low 70s. NAND margins are also pushing record levels.

Three traditional signs of a cycle peak — normalized inventory, peak year-over-year pricing and stock inflection — are not yet aligned. By current assessment, profits may not peak before late 2027. The bigger story, though, is strategic. For decades, logic — CPUs, GPUs, accelerators — dominated the narrative and the valuation multiples.

In the AI era, memory is the choke point.

The companies controlling advanced DRAM and HBM capacity sit at the fulcrum of the AI economy. GPU vendors, hyperscalers, AI startups — even the frothiest billion-dollar raises — ultimately depend on the same constrained layer. When the server becomes a memory appliance, economic leverage shifts. And that may be the most important structural change of this cycle.

With Nvidia, Salesforce Inc., Dell Technologies Inc., Workday Inc. and others reporting next week, listen closely to:

The AI gold rush is real. But underneath the model wars, the agent frameworks, the billion-dollar seed rounds and the superintelligence pledges, one thing is clear. The true bottleneck — and the true pricing power — sits in memory.

This is not just a supercycle.

It’s a repricing of computing itself.

