Amazon Web Services Inc. has inked a multiyear deal to supply Meta Platforms Inc. with cloud infrastructure.

Bloomberg reported today that the agreement is worth billions of dollars.

The deal centers on AWS’ Graviton family of internally developed central processing units. According to AWS, Meta will purchase access to tens of millions of Graviton cores, with the option to add more later. It will use the chips to power artificial intelligence agents.

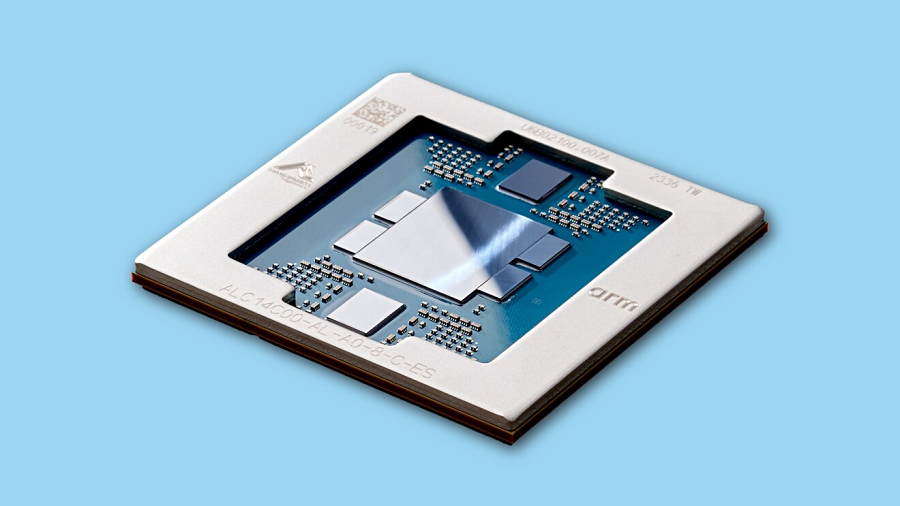

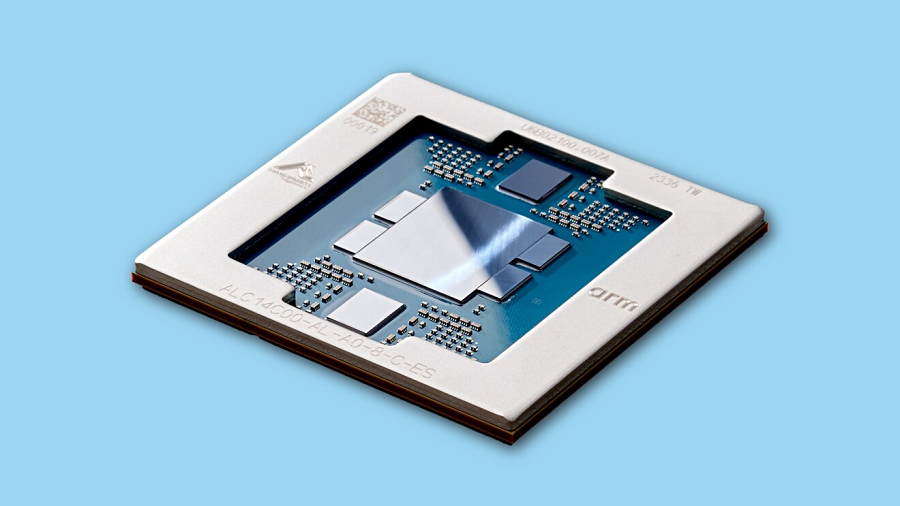

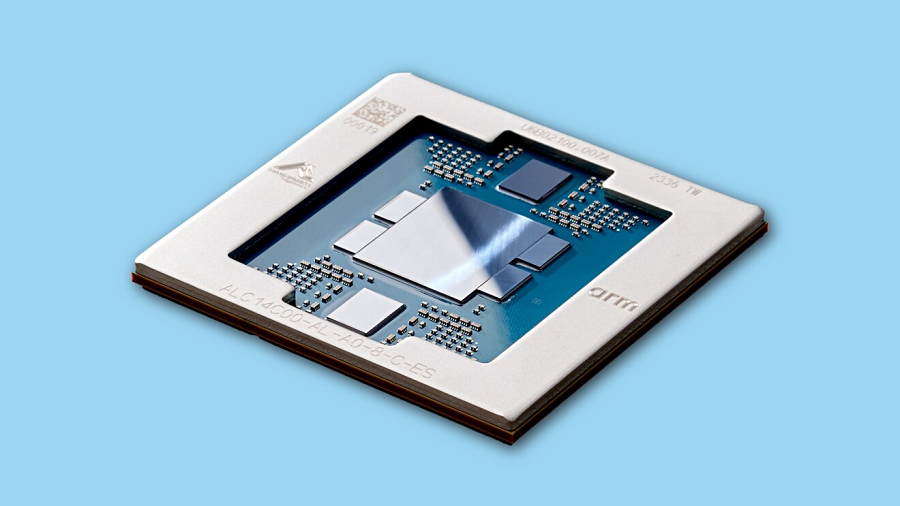

AWS debuted the newest addition to the Graviton chip line, the Graviton5, last December. It features 192 cores made using a three-nanometer manufacturing process. The cores implement Arm Holdings plc’s ubiquitous instruction set architecture, or ISA.

A chip’s ISA defines the language in which it expresses computations. The “words” that make up the language are simple computing operations such as arithmetic calculations. Arm’s ISA also includes matrix and vector extensions, which add instructions optimized for AI workloads.

AWS says that Graviton5 is 25% faster than its previous custom CPU. One contributor to the speedup is an L3 cache that is five times larger than its predecessor’s. An L3 cache is a memory pool that stores data close to a processor’s cores. Shortening the distance between two sets of circuits reduces the time it takes data to travel between them, and a larger cache lets more of an application’s data stay near the cores instead of being fetched from slower main memory.
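To illustrate why a bigger cache can speed up processing, here is a minimal Python sketch. It assumes a simple least-recently-used (LRU) replacement policy and a synthetic access pattern; real L3 caches use hardware-specific policies, and the capacities and cycle latencies below are hypothetical illustration values, not Graviton5 figures. The helper names `lru_hit_rate` and `average_access_time` are likewise made up for this example.

```python
from collections import OrderedDict


def lru_hit_rate(accesses, capacity):
    """Fraction of accesses served from a simulated LRU cache of `capacity` entries."""
    cache = OrderedDict()  # addresses, ordered from least to most recently used
    hits = 0
    for addr in accesses:
        if addr in cache:
            hits += 1
            cache.move_to_end(addr)  # mark as most recently used
        else:
            cache[addr] = None  # miss: data comes from (simulated) main memory
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict the least recently used entry
    return hits / len(accesses)


def average_access_time(hit_rate, hit_cycles, miss_penalty_cycles):
    """Average memory access time: hit time plus miss rate times miss penalty."""
    return hit_cycles + (1 - hit_rate) * miss_penalty_cycles


# Hypothetical workload: a program that loops 10 times over 400 distinct addresses.
trace = [addr for _ in range(10) for addr in range(400)]

small = lru_hit_rate(trace, capacity=100)  # working set does not fit
large = lru_hit_rate(trace, capacity=500)  # 5x larger cache: working set fits

# Hypothetical latencies in CPU cycles, chosen only for illustration.
print(small, average_access_time(small, 40, 300))
print(large, average_access_time(large, 40, 300))
```

With the smaller cache, LRU evicts each address just before it is reused, so every access misses and the average access time is 340 cycles. The five-times-larger cache holds the whole working set after the first pass, so 90% of accesses hit and the average falls to roughly 70 cycles.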

CPUs perform a wide range of tasks in AI clusters. They coordinate the graphics cards that perform the bulk of the calculations involved in running a neural network. Additionally, AI agents like those Meta plans to run on Graviton5 can use CPUs to power their tools. Those are the third-party applications an agent uses to automate tasks.

Graviton5 is designed to work with a collection of hardware and software modules called the AWS Nitro System. It offloads certain infrastructure management tasks from CPUs to specialized accelerators, which leaves more computing capacity for customer applications.

Public cloud operators often use a single set of infrastructure assets to power multiple customers’ workloads. Those workloads are isolated from one another to minimize cybersecurity risks. According to AWS, Graviton5 uses a module called the Nitro Isolation Engine to verify that different users’ workloads are indeed isolated from one another.

“AWS has been a trusted cloud partner for years, and expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale,” said Meta head of infrastructure Santosh Janardhan.

The partnership is the second major CPU deal that the Facebook parent has disclosed in the past month. It earlier agreed to adopt Arm’s newly debuted AGI CPU, a 136-core processor optimized for AI servers. Meta also plans to help Arm design multiple future iterations of the chip.
