AI

Hewlett Packard Enterprise Co.’s recent announcement of an artificial intelligence cloud for large language models highlights a differentiated strategy that the company hopes will lead to sustained momentum in its high-performance computing business.

Although we think HPE has some distinct advantages with respect to its supercomputing intellectual property, the public cloud players have a substantial lead in AI with a point of view that generative AI such as OpenAI LP’s ChatGPT is fully dependent on the cloud and its massive compute capabilities. The question is: Can HPE bring unique capabilities and a focus to the table that will yield competitive advantage and ultimately, profits in the space?

In this Breaking Analysis, we unpack HPE’s LLM-as-a-service announcements from the company’s recent Discover conference and we’ll try to answer the question: Is HPE’s strategy a viable alternative to today’s public and private cloud gen AI deployment models, or is it ultimately destined to be a niche player in the market? To do so, we welcome to the program CUBE analyst Rob Strechay and Andy Thurai, vice president and principal analyst from Constellation Research Inc.

In 2014, prior to the split of HP and HPE, HP announced the Helion public cloud. Two years later it shut down the project and ceded the public cloud to Amazon Web Services Inc. At the time, HPE lacked the scale and differentiation to compete.

The company hopes this time around will be different. This past week at its Discover event, HPE entered the AI cloud market via an expansion of its GreenLake as-a-service platform. The company is offering large language models on-demand in a multitenant service, powered by HPE supercomputers.

HPE is partnering with a Germany-based startup called Aleph Alpha GmbH, a company specializing in large language models with a focus on explainability. HPE believes that this is critically important for its strategy of offering domain-specific AI applications. HPE’s first offering will provide access to Luminous, a pretrained LLM from Aleph Alpha that will allow companies to leverage their own data to train and tune custom models using proprietary information.

We asked Strechay and Thurai to unpack the announcement and provide their perspective. Here’s a summary of that conversation:

The core of the discussion centers on HPE’s plans to utilize Cray supercomputing infrastructure in an “as-a-service” model, aiming to make high-performance computing more accessible.

The following key points are noteworthy:

The analysts are cautiously optimistic about HPE’s announced strategy, noting that it could potentially revolutionize how large workloads and high-performance computing tasks are handled. However, both agree that the company needs to provide more specifics about its execution plan, particularly around MLOps, before any substantial conclusions can be drawn. Ultimately, it’s a matter of execution.

The conversation between Strechay and Thurai further delves into the specific workloads HPE plans to address with its new LLM-as-a-service offering, including climate modeling, bio-life sciences, healthcare and potentially financial modeling. The analysts also discuss HPE’s partnership with a lesser-known company, Aleph Alpha.

The following key points are noteworthy:

HPE’s new strategy offers promise in making supercomputing as-a-service a reality for significant sectors such as climate, healthcare and bio-life sciences. Its partnership with Aleph Alpha, though not with a mainstream company, signals a move toward demonstrating prowess in handling large AI workloads. While the direction seems promising, we still raise concerns about the absence of details around handling diverse AI, machine learning and deep learning workloads and the overall ecosystem approach.

I think in Europe, sustainability will significantly help them. I don’t think it’s as big an advantage in North America – Rob Strechay

HPE’s fundamental belief is that the worlds of high-performance computing and AI are colliding in a way that confers competitive advantage to HPE. Indeed, HPE has a leadership position in HPC, as shown below.

HPE is No. 1 and No. 3 in terms of the world’s top five supercomputers with its Frontier and Lumi systems. Both leverage HPE’s Slingshot interconnect, which it believes is a critical differentiator.

It also believes that generative AI’s unique workload characteristics favor HPE’s supercomputing expertise. Here’s how Dr. Eng Lim Goh, HPE’s chief technology officer for AI, describes the difference between traditional cloud workloads and gen AI:

The traditional cloud service model is where you have many, many workloads running on many computer servers. But with a large language model, you have one workload running on many computer servers. And therefore, the scalability part is very different. This is where we bring in our supercomputing knowledge that we have for decades to be able to deal with this one big workload on many computer servers.
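To make Goh's contrast concrete, here is a minimal sketch in Python. It is an illustration only and does not describe HPE's or Aleph Alpha's actual software: the function names are hypothetical, and data-parallel gradient averaging is our assumption about what "one workload on many servers" typically looks like in LLM training.

```python
from statistics import fmean


def independent_jobs(jobs):
    """Traditional cloud pattern (illustrative): each server runs its own
    workload and never needs to talk to the other servers."""
    return [sum(job) for job in jobs]


def data_parallel_step(weight, shards, lr=0.1):
    """One workload on many servers (illustrative, assumed data-parallel):
    every server computes a gradient on its own data shard, then all
    gradients are averaged before any server can take the next step."""
    # Toy model y = weight * x fitted to the target y = 2 * x with squared error.
    local_grads = [
        fmean(2 * (weight * x - 2 * x) * x for x in shard) for shard in shards
    ]
    # Stand-in for an all-reduce: this is the synchronization point that
    # makes interconnect speed matter as the number of servers grows.
    global_grad = fmean(local_grads)
    return weight - lr * global_grad
```

In the first function, adding servers adds throughput with no coordination. In the second, every step waits on every server, which is the scaling property Goh argues supercomputing experience is suited to.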

Here’s a summary of the analysts’ discussion:

Strechay and Thurai dive deeper into HPE’s legacy and potential within the large language model market, while analyzing the challenges it may face. Strechay draws on the company’s rich history in handling large applications, suggesting that this experience could give it a certain advantage. Thurai, however, seems skeptical about the company’s ability to leverage these resources and align them with the market’s needs.

The following additional points are noteworthy:

HPE’s vast experience and pedigree in managing extensive applications and longstanding relationships might provide it an advantage in the large language model market. However, potential challenges in data access for innovation workloads and the competitiveness of the market may pose hurdles to HPE’s success. Although the company boasts a powerful supercomputer and storage capacity, its ability to turn these assets into a compelling offering that outperforms rivals remains uncertain.

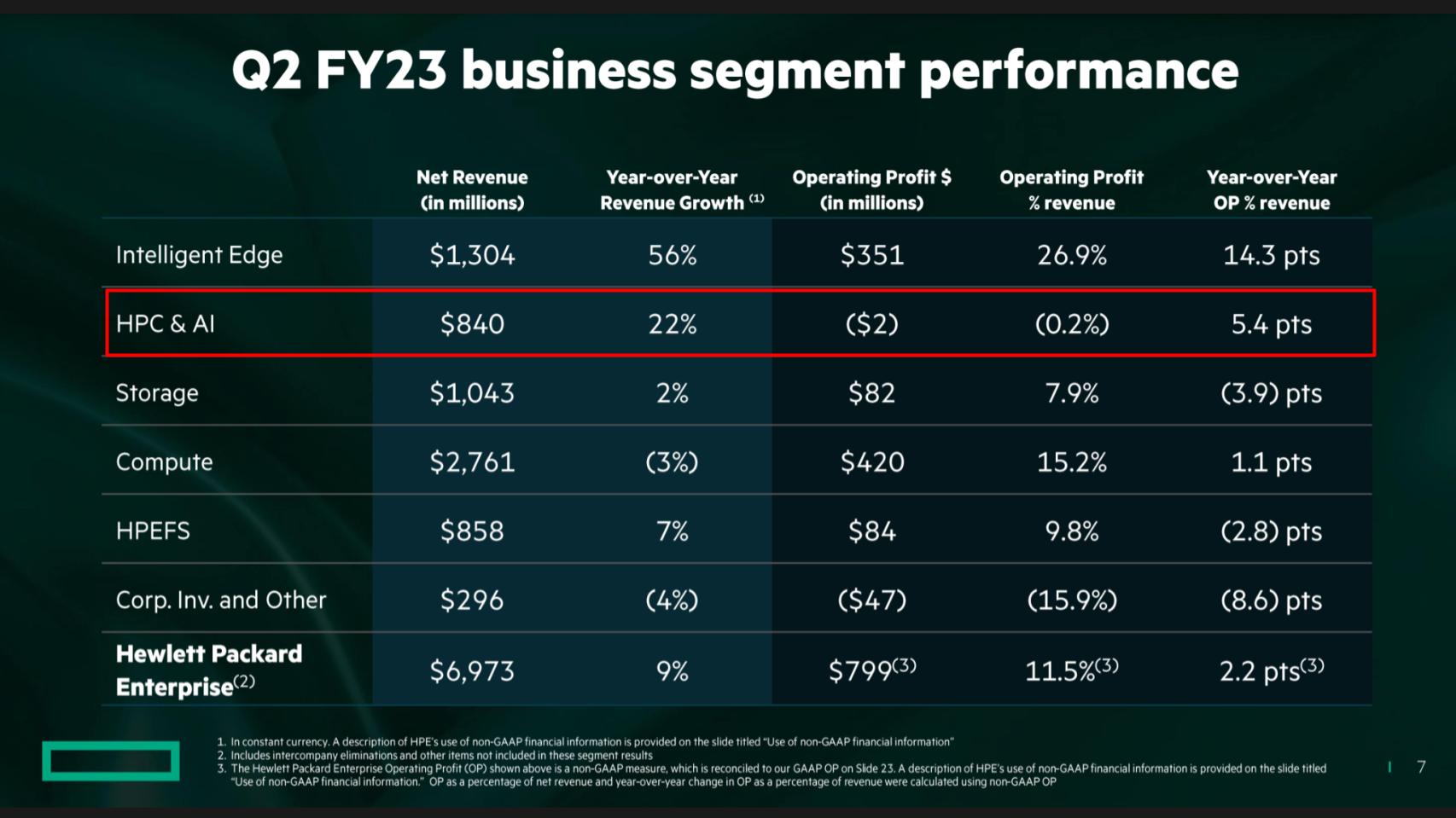

Above, we take a look at HPE’s lines of business and how its AI and HPC line of business performs. Remember HPE purchased Cray Inc. in 2019 and Silicon Graphics Inc. a few years before that to get into the HPC market.

Looking at HPE’s most recent quarter, you can see how it reports its business segments. HPC and AI is a multibillion-dollar business – and it’s growing – but it essentially is a break-even business. So it brings bragging rights but not profits. Intelligent Edge – aka Aruba – is the shining star right now with a $5 billion-plus run rate and 27% operating profit – so marginwise it’s HPE’s best business and throws off nearly as much profit as its server business.
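As a rough check on the Intelligent Edge figures, the arithmetic can be sketched as follows. The inputs are the two numbers quoted above; treating "$5 billion-plus" as exactly $5 billion and splitting the run rate evenly across four quarters are our simplifying assumptions, so the results are floors rather than reported figures.

```python
def annualized_operating_profit(run_rate_usd, operating_margin):
    """Operating profit implied by an annual revenue run rate and a margin."""
    return run_rate_usd * operating_margin


def quarterly_operating_profit(run_rate_usd, operating_margin):
    """Same figure per quarter, assuming revenue is spread evenly (an assumption)."""
    return annualized_operating_profit(run_rate_usd, operating_margin) / 4


# Inputs taken from the text; $5.0e9 is a lower bound for "$5 billion-plus".
INTELLIGENT_EDGE_RUN_RATE_USD = 5.0e9
INTELLIGENT_EDGE_OPERATING_MARGIN = 0.27

annual = annualized_operating_profit(
    INTELLIGENT_EDGE_RUN_RATE_USD, INTELLIGENT_EDGE_OPERATING_MARGIN
)
quarterly = quarterly_operating_profit(
    INTELLIGENT_EDGE_RUN_RATE_USD, INTELLIGENT_EDGE_OPERATING_MARGIN
)
print(f"Implied operating profit: ${annual / 1e9:.2f}B a year, ${quarterly / 1e6:.0f}M a quarter")
```

Under these assumptions the segment implies at least about $1.35 billion in annual operating profit, which is the scale against which the break-even HPC and AI segment should be read.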

Here’s how HPE Chief Executive Antonio Neri describes its advantage:

If you think about how public clouds are being architected, it’s a traditional network architecture at massive scale, with leaf and spine where generic or general-purpose workloads of sorts use that architecture to run workloads and connect to the data. When you go to this [LLM] architecture, which is an AI-native architecture, the network is completely different. You mentioned Slingshot. That network runs and operates totally different. Obviously, you need the network interface cards that connect with each GPU or CPU. And also, a bunch of accelerators that come with it. And there is silicon programmability with the contention software management. And that’s what Slingshot is all about, and it takes many, many years to develop. But if you look at public clouds today, generally speaking, they have not developed a network. They have been using companies like Arista, Cisco or Juniper and the like. We have that proprietary network. And so does Nvidia, by the way. But ours actually opens up multiple ecosystems and we can support any of them. So, it will take a lot of time and effort [for clouds to catch up]. And then, also remember, you’re now dealing with a whole different compute stack, which is direct liquid cooling, and that requires a whole different set of understanding.

There’s a lot to unpack in terms of what Antonio stated, including the network, Slingshot interconnect, the data services ecosystem and liquid cooling. We asked the question: “Is this a flip on ‘Jassy’s Law’ – i.e. there’s no compression algorithm for experience? Or does HPE have blind spots?”

The following points summarize the analysts’ take:

The key issue is whether HPE’s strategy of focusing on classic HPC workloads can be profitable. Although HPE’s network and interconnect give the company potential advantages, these may be short-lived, as commercial components are available off-the-shelf. Expertise with liquid cooling in data centers is nice but the real questions will come down to HPE’s ability to attract customer data to its platform versus those of competitors.

The next question we want to explore is: Does HPE’s service have the potential to go mainstream or is it destined for niche status?

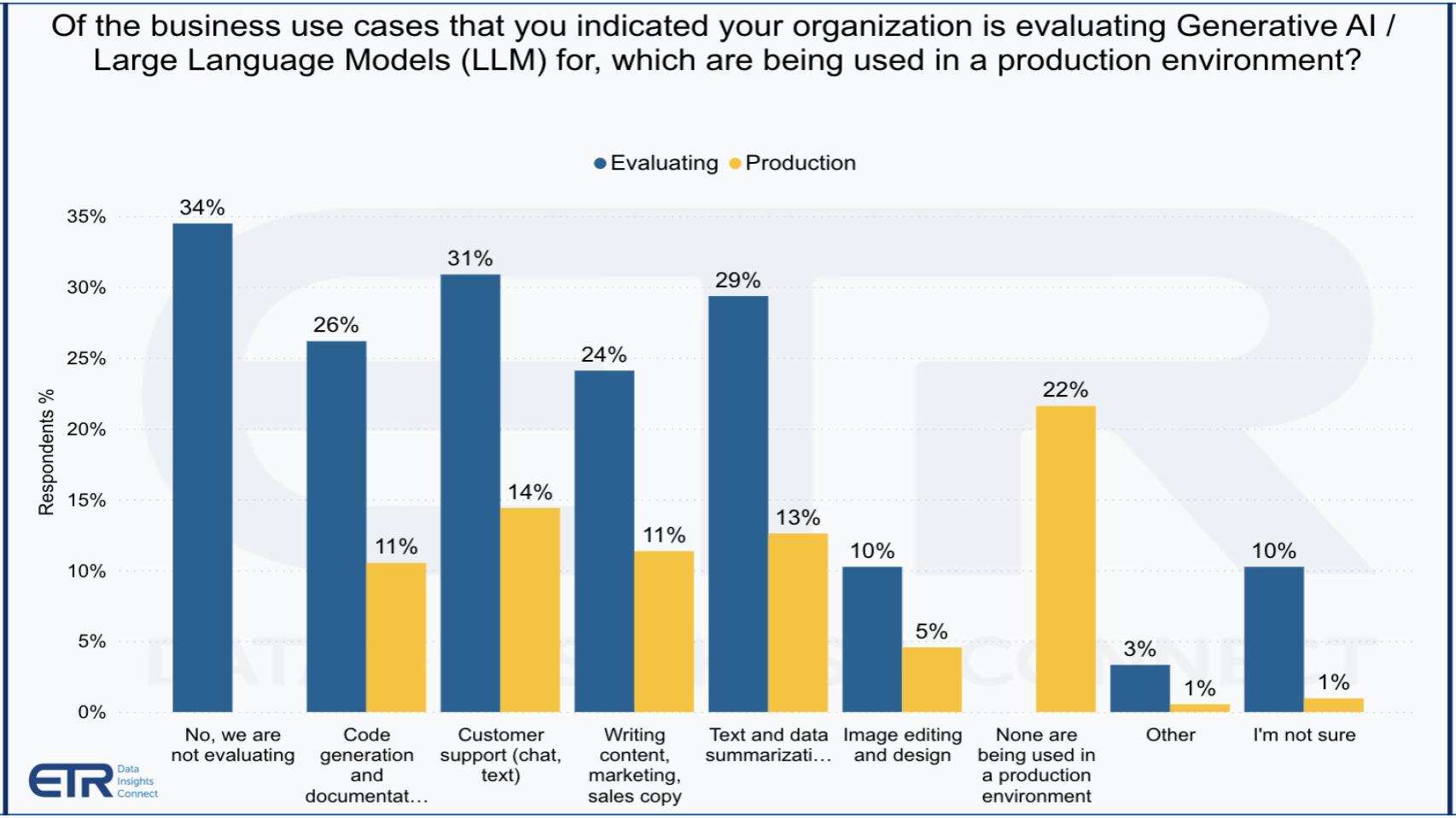

Above we show some Enterprise Technology Research data asking organizations how they’re pursuing generative AI and LLMs and what use cases they’re evaluating or actively deploying in production. Note that 34% of the organizations say they’re not evaluating, which is surprisingly high in our view. But for those moving forward, the use cases are what you’d expect, chatbots, code generation, writing marketing copy, summarizing text and more.

HPE has a different point of view. It’s focusing on very specific domains where companies have their own proprietary data and want to train models on that data but don’t want to incur the expense of acquiring and managing their own supercomputing infrastructure. At the same time, HPE believes that because it has unique IP, it can be more reliable and cost-effective than the public cloud players, while still offering the advantages of a public cloud.

We asked the analysts: Is HPE on to something here in that these mainstream use cases are not where the money is for HPE? And is there gold in the hills with HPE’s strategy?

Although generally we’re taking a wait-and-see, “show me” approach with HPE’s LLM strategy, the following points are notable:

HPE’s strategy caters to a specific sector of the AI market – those dealing with HPC workloads. This niche could offer profitable opportunities, given the specialized needs and complexities involved. However, its success depends on communicating its value proposition effectively and on market trends aligning in its favor.

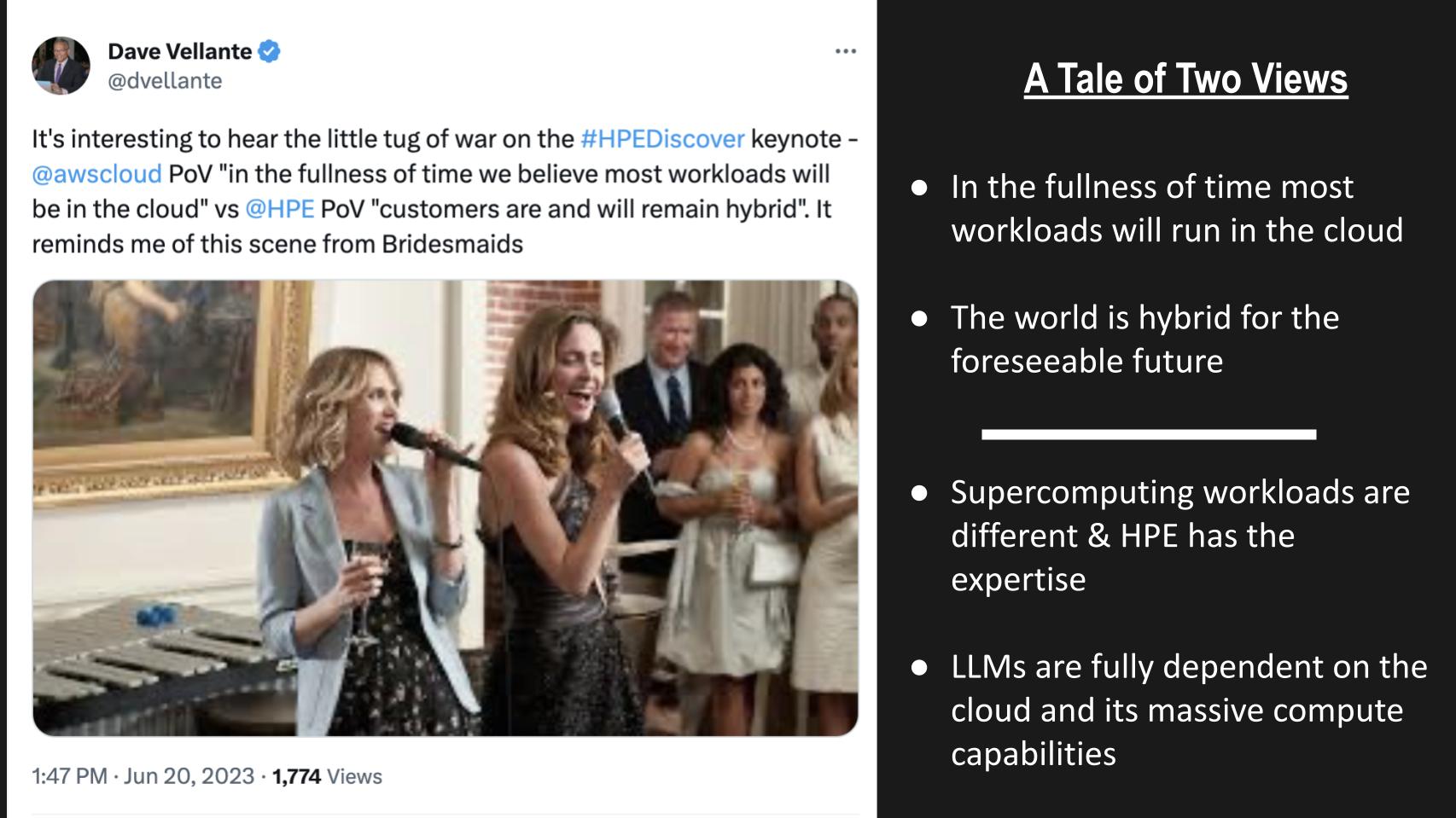

At Discover on the main stage we heard two distinct points of view:

Matt Wood of AWS was on the main stage with Neri, and much to our surprise, Wood said something to the effect of “over time, we still believe most of the workloads are going to go to the public cloud.” He actually said that in front of HPE’s audience.

Then, Neri basically countered that with (and we’re paraphrasing with tongue in cheek), “The world’s hybrid, dude. And it’s going to stay that way.”

Remember that scene in “Bridesmaids” where the two bridesmaids are duel-singing for the attention of the bride? Well, we heard this divergent theme with respect to LLMs this week. HPE put forth the notion that supercomputing workloads are different from cloud workloads and that HPE has the expertise to make it happen more reliably, sustainably and effectively. Then on Bloomberg, we heard AWS CEO Adam Selipsky put forth the premise that LLMs are fully dependent on the public cloud and its massive compute capabilities.

At the end of “Bridesmaids,” the two rivals became good friends, so perhaps there’s room for both points of view. Although we believe the market in the public cloud for LLMs will be meaningfully larger, we don’t currently have a good enough sense of the delta to put a figure on it.

Here’s a summary of the analyst conversation:

We believe HPE’s focus of leveraging its strengths, particularly in supercomputing, is sound. However, achieving success in its chosen AI market niche will likely be a long-term process and will require greater recognition from customers that HPE is a player in AI. To do so, the company will have to leverage its distribution channel to attract key partners that are known for their AI prowess.

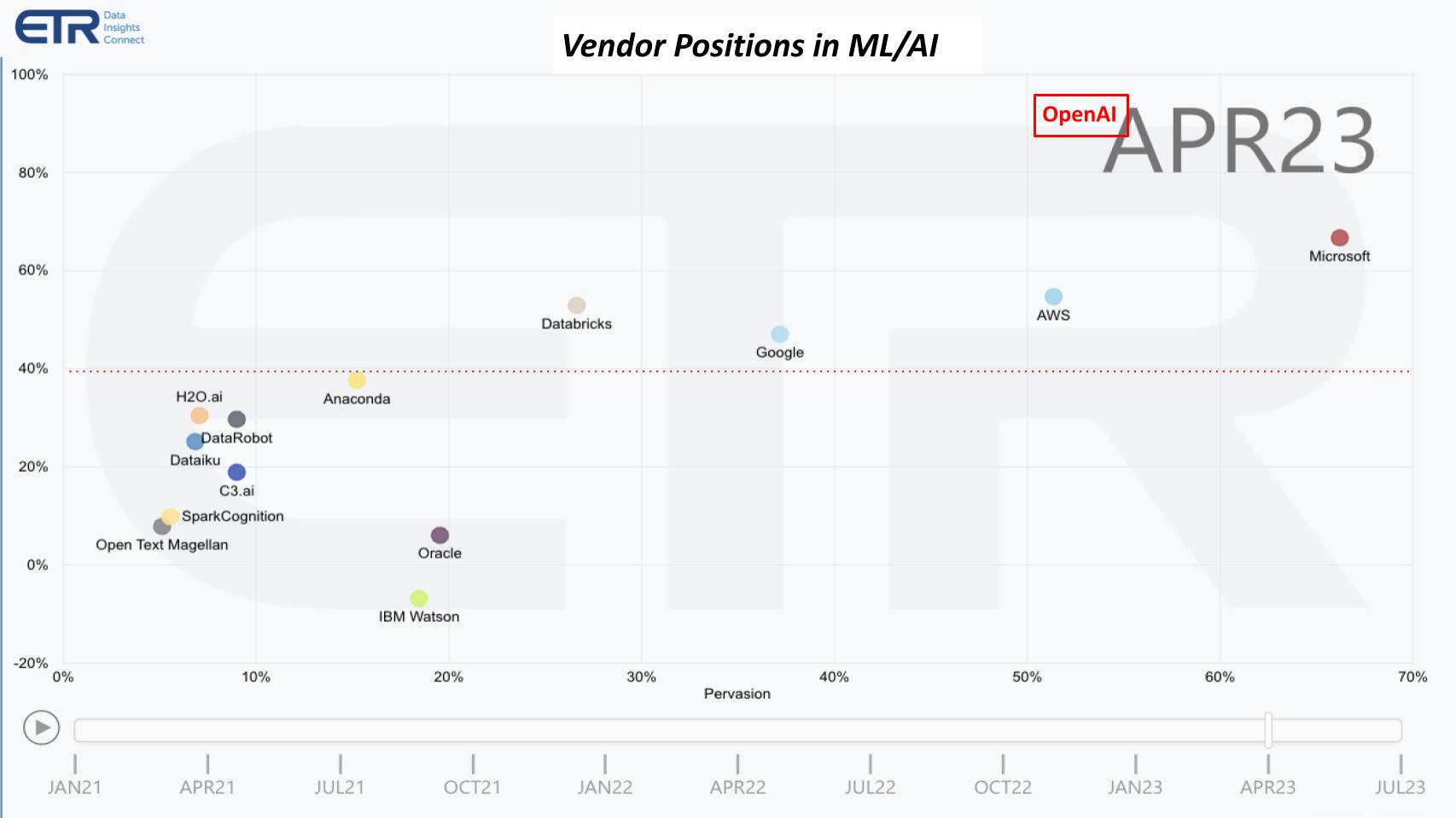

Despite extensive use of AI in its portfolio of offerings, HPE is not known as a player in AI. Let’s look at what the ETR data shows about which firms are getting share of wallet in the ML/AI market. Importantly, HPE has an opportunity to partner and accelerate its mindshare among tech decision makers.

The chart above shows Net Score or spending momentum on the vertical axis and the pervasiveness or presence in the ETR data set for ML/AI players. Right off the bat, focus on the Big Three public cloud players, Microsoft Corp., AWS and Google LLC – they dominate the conversation. They are pervasive and all show above the magic 40% red dotted line, an indicator of strong momentum.

Databricks Inc. also stands out as clearly a player in the mix.

OpenAI is also notable. We got a peek at the July ETR data and it won’t surprise you that OpenAI is setting new records — beyond even where we saw Snowflake Inc. at its peak Net Score. And OpenAI, as you’ll see next month in the ETR data, has gone mainstream, even in core IT shops.

It’s no surprise that you don’t see HPE in this mix, but over time, if the company’s aspirations are to come true, you would want to see HPE on this chart, as Oracle Corp. and IBM Corp. are.

Here are the key points from the analyst discussion:

HPE’s strategy in the AI market differs from many competitors by focusing on handling the largest and most complex models, leveraging its high-performance computing, networking and storage strengths. The effectiveness of this approach remains to be seen and will likely take another year or so to evaluate.

One indicator to watch is how integrated HPE’s solution really is in the GreenLake console. Is it a separate console? Is it really on top of the Aruba Central platform or is it a separate installation?

We close by discussing the competitive advantages and challenges HPE faces with some critical areas we’re watching.

Here’s a summary of our wrap up:

Generally, we were happy that HPE avoided discussions around quantum computing at HPE Discover. Although this may surprise some, it may make sense given that quantum is not yet ready for real-world applications.

HPE’s competitive edge in the AI and HPC market may lie in its infrastructure software and approach to handling large and complex models. It also has potential advantages in the deployment and inferencing aspects of AI and could stand to benefit from a future focus on sustainability. However, significant hurdles, including the need to strengthen its AI ecosystem and convincing customers to move their data, remain.

On balance, we give high marks to HPE for including LLM-as-a-service inside of GreenLake. In addition, HPE under Neri has a clear path of differentiation, which over time should pay dividends. HPE’s AI cloud offering will not be available for six months and it’s unclear how truly integrated it will be, so that is something we’ll be watching as an indicator of maturity. As well, it’s one thing to label the HPC business as AI but another thing entirely to generate profitability from the initiative.

That will be the ultimate arbiter of success.

Thanks to Alex Myerson and Ken Shifman on production, podcasts and media workflows for Breaking Analysis. Special thanks to Kristen Martin and Cheryl Knight, who help us keep our community informed and get the word out, and to Rob Hof, our editor in chief at SiliconANGLE.

Remember we publish each week on Wikibon and SiliconANGLE. These episodes are all available as podcasts wherever you listen.

Email david.vellante@siliconangle.com, DM @dvellante on Twitter and comment on our LinkedIn posts.

Also, check out this ETR Tutorial we created, which explains the spending methodology in more detail. Note: ETR is a separate company from Wikibon and SiliconANGLE. If you would like to cite or republish any of the company’s data, or inquire about its services, please contact ETR at legal@etr.ai.

All statements made regarding companies or securities are strictly beliefs, points of view and opinions held by SiliconANGLE Media, Enterprise Technology Research, other guests on theCUBE and guest writers. Such statements are not recommendations by these individuals to buy, sell or hold any security. The content presented does not constitute investment advice and should not be used as the basis for any investment decision. You and only you are responsible for your investment decisions.

Disclosure: Many of the companies cited in Breaking Analysis are sponsors of theCUBE and/or clients of Wikibon. None of these firms or other companies have any editorial control over or advance viewing of what’s published in Breaking Analysis.
